Jump to section:

TL;DR / Summary

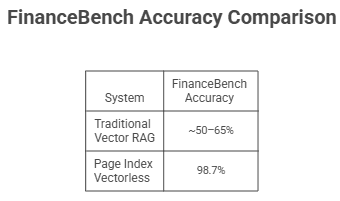

Page Index vectorless databases are redefining document retrieval in 2026 by replacing traditional vector-based RAG systems with a reasoning-first approach. Instead of relying on embeddings and similarity search—which often fail on complex, cross-referenced documents—Page Index structures content as a hierarchical JSON tree that an LLM navigates like a human reading a table of contents. This enables highly accurate, context-aware retrieval (demonstrated by ~98.7% accuracy on FinanceBench vs. ~50–65% for vector RAG), making it especially valuable in finance, legal, healthcare, and technical domains. However, this accuracy comes with trade-offs: higher latency, greater cost per query, and limited scalability for large document collections. As a result, Page Index is not a universal replacement for vector databases but a specialized solution for deep, high-stakes document intelligence where correctness, traceability, and reasoning matter more than speed or cost.

Ready to see how it all works? Here’s a breakdown of the key elements:

- Why AI Document Retrieval Is Failing — And What It Is Costing the Tech Industry

- What Is Page Index Vectorless Database? A Plain-Language Definition

- The Origin of Page Index Vectorless Database — Where It Came From and Why It Was Built

- Five RAG Failures That Made Page Index Vectorless Database Necessary

- FinanceBench Results — When Page Index Vectorless Database Proved Itself at Scale

- How Page Index Vectorless Database Works — The 6-Stage Retrieval Architecture Explained

- Real-World Industry Use Cases for Page Index Vectorless Database in 2026

- How Ruh AI Is Adapting Page Index Vectorless Database for Enterprise AI Agents

- Key Advantages of Using Page Index Vectorless Database Over Traditional Vector RAG

- Limitations and Cons of Page Index Vectorless Database You Should Know Before Deploying

- Production Risks of Page Index Vectorless Database — What Can Go Wrong

- Frequently Asked Questions About Page Index Vectorless Database

Why AI Document Retrieval Is Failing — And What It Is Costing the Tech Industry

There is a problem that most AI teams do not talk about loudly, but every team working with complex documents eventually runs into it. You build a Retrieval-Augmented Generation (RAG) system. You set up your vector database, generate embeddings for your documents, tune your similarity thresholds, and run your first round of tests. The system works beautifully on simple queries.

Then someone asks it a question that requires reading across three sections of a 200-page document. Or asks a follow-up that references something from a minute ago. Or asks a question whose answer is buried in an appendix that another section points to. And the system — despite all the engineering effort behind it — returns something that sounds correct but is factually wrong.

This is not a tuning problem. It is not a prompt engineering problem. It is a structural problem with how traditional RAG systems retrieve information — a problem that researchers have documented extensively across academic benchmarks. The gap between what enterprises need from AI and what traditional retrieval delivers is precisely why platforms like Ruh AI have invested in next-generation document intelligence architectures. And it is exactly the problem that the Page Index vectorless database was built to solve.

This blog takes a comprehensive look at Page Index: where it came from, why the industry needed it, how it works under the hood, how it is reshaping document intelligence across finance, law, medicine, and engineering — and where, even with all its strengths, it still falls short.

What Is Page Index Vectorless Database? A Plain-Language Definition

Before going into the history and the industry impact, it is worth establishing a precise definition — because "vectorless database" is a term that confuses people the first time they encounter it.

Page Index Vectorless Database: A document retrieval architecture that completely eliminates vector embeddings, vector databases, and arbitrary text chunking. Instead of converting text into numerical vectors and retrieving chunks based on mathematical similarity, it organises documents into a JSON-based hierarchical tree — a structured, machine-readable Table of Contents — and uses a large language model's own reasoning to navigate that tree and locate precisely the information that answers a given question.

The "database" in the name is a slight misnomer in the traditional sense. There is no external database being queried. There are no embedding vectors being compared. The entire index is a lightweight JSON structure — containing section titles, summaries, node IDs, and metadata — that lives directly inside the LLM's active reasoning context. The LLM reads it like a human expert reads a table of contents: not exhaustively, but strategically.

The raw text of each document section is stored separately and fetched on demand, only when the LLM reasons that a particular node is relevant to the question being asked.

The result is a retrieval system that does not guess based on linguistic similarity. It thinks through document structure and retrieves information based on logical relevance — the same way a senior analyst, a seasoned attorney, or an experienced physician would navigate a complex report. This is the same reasoning-first philosophy that Ruh AI builds into its AI employee platform: agents that navigate context deliberately rather than searching by surface-level keyword similarity. You can explore the open-source framework directly on the PageIndex GitHub repository.

The Origin of Page Index Vectorless Database — Where It Came From and Why It Was Built

Retrieval-Augmented Generation (RAG) started as an elegant fix for a real problem: LLMs cannot know what they were not trained on. Give the model access to external documents at query time, retrieve the most relevant passages, and let it answer from there. For simple use cases — FAQ bots, news search, short documents — it worked well enough. The 2024 comprehensive survey on RAG architectures maps this evolution thoroughly, from early naive RAG through to modular and agentic designs.

The cracks showed when practitioners in finance, law, and medicine pushed it against real professional documents. The system would retrieve something that looked relevant, and the model would produce an answer that was plausible but factually wrong — not because it hallucinated, but because the retrieved fragment had been torn from its context and was missing the surrounding logic that gave it meaning. This is the precise failure mode that makes AI deployment in financial services so difficult: at scale, a 50% accurate retrieval system is not a productivity tool, it is a liability.

PageIndex was built as a direct response. Its core navigation philosophy draws from AlphaGo's strategic planning: AlphaGo did not brute-force every possible move on the board — it used a learned strategy to evaluate which branches of the game tree were worth exploring and which could be safely ignored. PageIndex applies the same principle to documents. Instead of scanning every text chunk, the LLM reasons over a hierarchical tree of section titles and summaries, deciding strategically where to look — and following internal references like "see Appendix G" the way a human expert would. The LlamaIndex community discussion where VectifyAI first presented PageIndex captures the exact practitioner frustrations that motivated the framework.

Five RAG Failures That Made Page Index Vectorless Database Necessary

Page Index was born from five concrete failure modes that practitioners consistently hit on production RAG systems. A thorough academic treatment of these failure categories is available in the RAG survey by Gao et al. (2024) and in the broader agentic RAG survey (2025). These are also the same structural problems that organisations implementing AI at scale must address before results can be trusted.

Similarity ≠ Relevance. Vector RAG assumes semantically similar text contains the answer. It does not. A question about "operating margin trends" may share little vocabulary with the "Segment Performance Analysis" section that actually holds the answer. Vector search ranks it low; reasoning-based navigation goes there directly.

Hard Chunking Destroys Context. Splitting documents into fixed-size chunks (e.g., 512 tokens) is arbitrary — it severs tables, splits arguments mid-sentence, and disconnects clauses from the exceptions that qualify them. The model then reasons from fragments and fills in gaps it cannot see. PageIndex retrieves whole, coherent sections instead.

Blind to Cross-References. When a document says "see Appendix G," a vector database cannot follow that instruction — it only matches words to words. PageIndex treats cross-references as navigation cues, locating the referenced node in the tree and fetching its content automatically.

No Conversational Memory. Traditional RAG is stateless — every query starts from scratch. PageIndex uses prior conversation history to refine navigation, so a follow-up about "liabilities" correctly revisits the same financial section where "assets" were just discussed. This mirrors how well-designed AI agents that refuse bad commands maintain awareness of session context rather than resetting blindly.

Context Window Constraints Spiral. Longer documents force more aggressive chunking, which destroys more context, which degrades retrieval further. PageIndex sidesteps this: the compact JSON tree fits in the context window regardless of document length, and raw text is fetched selectively on demand.

FinanceBench Results — When Page Index Vectorless Database Proved Itself at Scale

The moment that moved Page Index from an architectural experiment to a legitimised production approach was its performance on FinanceBench — a rigorous benchmark specifically designed to test AI systems on multi-step financial reasoning that is trivially easy for a human analyst but historically punishing for automated systems. The full benchmark paper is available on arXiv (Islam et al., 2023) and the dataset itself is open-sourced on GitHub by Patronus AI.

FinanceBench presents questions about SEC filings — 10-K annual reports, 10-Q quarterly filings, and earnings disclosures — that require synthesising information across multiple sections of long, dense, highly structured documents. Questions about year-over-year trends, segment-level comparisons, margin calculations, and risk factor analysis — questions that a vector similarity search, by design, struggles to answer correctly.

The benchmark results were stark:

A gap this large — nearly doubling accuracy on a complex, domain-specific benchmark — is not a marginal improvement. It is a paradigm shift. It is the difference between a system that is occasionally useful and a system that is genuinely reliable.

This benchmark result demonstrated conclusively that the vector-based retrieval assumption was not just imperfect but fundamentally inadequate for complex professional document analysis, and showed that reasoning-based retrieval could achieve the kind of precision that high-stakes industries actually require. For teams exploring how LLMs handle structured data and complex reasoning tasks, FinanceBench results are one of the clearest signals that architecture matters as much as model selection.

How Page Index Vectorless Database Works — The 6-Stage Retrieval Architecture Explained

PageIndex runs in six coordinated stages, each building on the last. A hands-on notebook walkthrough of this workflow is available in the official PageIndex cookbook.

Stage 1 — Document Segmentation. Rather than splitting at arbitrary token counts, an LLM reads the document and maps its genuine logical boundaries: chapters, subsections, appendices, and self-contained tables. This produces a structural map, not output yet.

Stage 2 — Tree Construction. For each identified section, the LLM generates a JSON node containing a unique Node ID (for raw text retrieval on demand), a Title, a Summary of the section's content, recursive Sub-nodes for nested subsections, and Metadata such as page ranges and document type. Together, these nodes form the "in-context index" — a compact, lightweight map of the entire document that fits inside the LLM's context window because it holds only summaries, not full text.

Stage 3 — Query Reasoning. When a question arrives, the LLM reviews the full tree and reasons logically about which branches are most likely to hold the answer — not by matching keywords, but by inferring where that type of information would structurally live in this document. This is analogous to how effective prompt engineering for autonomous AI systems must embed structural reasoning rather than relying on keyword instruction.

Stage 4 — Hierarchical Retrieval. The LLM traverses from broad top-level sections down into specific sub-nodes, ignoring irrelevant branches. Raw text is fetched from each promising node on demand.

Stage 5 — Iterative Exploration. If a retrieved node is insufficient, or contains a cross-reference like "see Table 5.3 in Appendix G," the LLM loops back to the tree, locates the referenced node, and retrieves it. This continues until the LLM judges the gathered content complete. This iterative, self-improving loop is structurally similar to how self-improving AI agents use RLHF to refine their decision paths over time.

Stage 6 — Answer Generation. All retrieved content is assembled and the LLM generates the final answer with full citations — specific page numbers and section titles — creating a transparent, auditable reasoning trace with every response.

Real-World Industry Use Cases for Page Index Vectorless Database in 2026

Financial Services. Analysts working with SEC filings — 10-Ks, 10-Qs, earnings disclosures — need answers that pull from income statements, footnotes, and segment discussions simultaneously. At 50–65% accuracy, a traditional RAG system requires manual verification of every output, eliminating its own value. PageIndex's 98.7% accuracy on FinanceBench makes it usable as a genuine first draft, with a built-in audit trail that satisfies regulated-environment documentation standards. For a broader view of how AI is reshaping financial services workflows, see Ruh AI's deep dive on AI employees in financial services.

Healthcare. Clinical researchers and compliance teams navigating dense medical literature, patient protocols, and regulatory submissions face the same cross-reference problem as finance. A vectorless retrieval system that follows internal document logic — rather than approximating by keyword proximity — is essential for decisions where the wrong section can produce a dangerous answer. Ruh AI explores the same theme in its guide to AI employees augmenting human excellence in healthcare.

Legal. Contracts reference themselves deliberately — terms defined in Section 2 are qualified in Section 8, with exceptions in Schedule B. Vector RAG returns the sections that mention the queried phrase, missing the carve-outs that change its meaning. PageIndex follows the document's own internal logic, reading the contract the way a lawyer does: by navigating the argument, not scanning for keywords.

Technical Documentation. Engineering manuals often bury the exception that overrides the general rule three subsections deep. Traditional RAG retrieves the rule and misses the exception. PageIndex's hierarchical traversal surfaces both, navigating from general procedure to specific edge case the same way a senior engineer would. This connects directly to how MLOps and AI infrastructure teams are rethinking retrieval as a first-class engineering concern, not an afterthought.

Academic Research. Long papers require synthesis across methodology, results, limitations, and supplementary data — often in response to a single question. Vector RAG returns whichever section most resembles the query. PageIndex gathers from all relevant sections, preserving the logical relationships between them.

Local and Private Deployment. Via LiteLLM and Ollama, the full PageIndex pipeline — tree generation, retrieval, answer generation — can run entirely on-premises with open-source models at the 4–8B parameter range (e.g., Gemma). No data leaves the organisation's infrastructure, which is essential for healthcare, legal, and government environments with strict data sovereignty requirements. The shift toward capable small language models for private, efficient AI makes on-premises PageIndex deployments increasingly viable for resource-constrained teams.

AI Agent Integration. PageIndex exposes an MCP server, allowing agents like Claude and Cursor to call reasoning-based retrieval natively within their workflows — no custom integration code required. The Model Context Protocol, introduced by Anthropic in November 2024, provides the open standard that makes this plug-and-play composability possible.

How Ruh AI Is Adapting Page Index Vectorless Database for Enterprise AI Agents

Ruh AI is an enterprise AI platform purpose-built for deploying AI employees — autonomous agents that handle end-to-end workflows across sales, operations, finance, and customer engagement. Its flagship products include the AI SDR (Sarah), an AI sales development representative that handles prospecting, qualification, and meeting booking around the clock, and the Ruh Work-Lab, a platform for deploying preset agents and custom workflows across any business function.

At the core of Ruh AI's architecture is a challenge that Page Index vectorless database directly addresses: enterprise AI agents need to reason over complex, structured documents — not just retrieve text that looks similar to a query. When an AI employee at Ruh AI is navigating a compliance document, an SEC filing, a legal contract, or a technical product specification, keyword-based retrieval produces the wrong result too often to be trusted at scale.

Where Ruh AI Meets Page Index

Ruh AI's approach to document intelligence is shaped by the same philosophy that drives PageIndex: retrieval must be grounded in reasoning, not approximation. Here is how the adaptation plays out across Ruh AI's core use cases:

Enterprise Search with Structural Navigation. Ruh AI's Enterprise Search product is designed to unify organisational knowledge across tools like Slack, Gmail, Jira, Confluence, and Salesforce. When documents in those systems are long and structured — product roadmaps, compliance policies, customer contracts — a vectorless tree index means the search agent navigates the document's actual hierarchy rather than pulling the fragment that happens to share vocabulary with the query. The result is an answer that is complete, contextually grounded, and traceable to the exact section it came from.

AI SDR Sarah — Reading Documents Like a Human. When Sarah, Ruh AI's AI SDR, engages with a prospect, she draws on account research, product documentation, and previous interaction history. In competitive or technical sales, the documents involved — detailed product specs, competitor analyses, pricing sheets, compliance requirements — are exactly the kind of structured, cross-referenced material where vector similarity fails. By integrating a vectorless retrieval approach into her reasoning stack, Sarah can navigate these documents to produce personalised outreach that is factually grounded, not statistically probable. For a deeper look at how AI SDRs are transforming pipeline generation, see Ruh AI's analysis of cold email and AI outreach in 2026.

Agent Workflows Across Finance and Healthcare. Ruh AI's Work-Lab enables teams to deploy preset and custom AI agents across departments. In finance, these agents may be tasked with extracting and comparing figures across quarterly filings. In healthcare, they navigate clinical protocols and regulatory submissions. In both cases, the failure mode of vector RAG — retrieving similar text rather than logically relevant sections — produces outputs that cannot be trusted without human review, which defeats the efficiency purpose of the agent. PageIndex-style vectorless retrieval changes that equation: the agent navigates the document the way a domain expert would, producing auditable answers that can be acted on directly.

Customer Journey and Marketing Operations Intelligence. Ruh AI's agents supporting customer journey mapping and marketing operations often need to synthesise insights from long research documents, campaign briefs, and multi-section strategy documents. Vectorless retrieval enables these agents to pull complete, contextually coherent sections — not fragmented chunks — when building campaign strategies or analysing customer behaviour data.

Why This Matters for Enterprise AI Adoption

The broader implication of Ruh AI's adoption of vectorless retrieval principles is a shift in how enterprise AI is evaluated. The standard for AI agent performance is no longer "does it retrieve something plausible?" It is "does it retrieve the right information, from the right place, with a traceable path showing how it got there?" This is particularly critical in regulated industries where an AI-generated answer must be verifiable, not just believable.

For organisations considering how to implement AI responsibly at scale, Ruh AI's complete AI implementation guide outlines the governance and architecture principles that underpin trustworthy AI deployment — including the kind of retrieval precision that vectorless indexing enables. If you are ready to explore what this looks like for your organisation, you can contact the Ruh AI team directly to discuss deployment options.

Key Advantages of Using Page Index Vectorless Database Over Traditional Vector RAG

Near-Perfect Accuracy. 98.7% on FinanceBench against 50–65% for traditional vector RAG. On documents with internal structure, cross-references, and multi-step logic, this gap is the difference between a system that is occasionally useful and one that is genuinely reliable.

No Vector Database Required. No Pinecone, no Weaviate, no embedding pipelines, no vector index maintenance. The entire index is a JSON file. For teams already managing complex infrastructure, removing this layer has real operational and economic value.

Full Traceability. Every answer comes with a complete reasoning trace — which nodes were visited, which pages were read, in what order, and why. In finance and law, this auditability is not optional; it is what makes an AI-generated answer defensible.

Cross-Reference Following. PageIndex navigates internal document pointers automatically. When retrieved text says "see Appendix G," the system finds Appendix G in the tree and fetches it. Traditional vector RAG cannot do this at all.

Conversational Memory. Prior conversation turns inform navigation. A follow-up question is handled in context rather than restarted from scratch, making iterative document analysis coherent and efficient.

Preserved Document Integrity. Whole sections are retrieved, not arbitrary chunks. Tables stay intact. Arguments stay connected to their conclusions. The model always reasons from complete, coherent information.

Flexible Deployment. Via LiteLLM, PageIndex runs against cloud frontier models (e.g., GPT-4) or fully local open-source models via Ollama — the same framework, configurable to any privacy or cost requirement. The growing maturity of small language models in 2026 makes local deployment increasingly practical without sacrificing the reasoning quality the tree navigation depends on.

Open-Source Foundation. No vendor lock-in. Teams can inspect the code, modify the indexing logic, and self-host the entire pipeline directly from the PageIndex GitHub repository.

Limitations and Cons of Page Index Vectorless Database You Should Know Before Deploying

High Query Latency. Multiple sequential LLM inference calls are required to traverse the document tree. Where vector search takes milliseconds, PageIndex retrieval takes 30 seconds or more per query. This makes it incompatible with any application requiring near-instantaneous responses. This latency penalty is a well-documented trade-off in agentic RAG architectures.

High Cost Per Query. Each navigation step consumes tokens. A complex query traversing five or six nodes costs five or six times what a single vector retrieval followed by one generation call would. At scale, this difference is significant.

Expensive Document Indexing. Building the tree index requires an LLM to read the entire document and summarise every section — substantially more intensive than generating embeddings. For documents queried only once or twice, the upfront cost is hard to justify. PageIndex pays off on documents analysed repeatedly.

Not Built for Large Corpus Search. PageIndex is designed for deep retrieval within a specific structured document, not for searching across thousands of diverse, unrelated files simultaneously. For broad corpus search, vector RAG is still the right tool. The RAG and Beyond survey (2024) provides a useful framework for choosing between retrieval strategies depending on task type.

Model Capability Minimum. The quality of both tree construction and tree navigation depends on the reasoning ability of the underlying LLM. Models below 4–8 billion parameters may generate inaccurate summaries or navigate incorrectly — silently degrading retrieval quality without throwing errors.

Slower Document Onboarding. Adding a new document requires running the full LLM-based tree generation pipeline. In environments where documents are ingested continuously, this per-document overhead accumulates quickly compared to the simpler embedding-and-insert workflow of vector RAG.

Production Risks of Page Index Vectorless Database — What Can Go Wrong

Beyond the documented cons — which are trade-offs you know about going in — there are genuine production risks that teams should evaluate carefully before committing to PageIndex as a core infrastructure component.

Silent Quality Degradation from Inadequate Local Models

When running PageIndex with local models (via Ollama), a model that is too small or insufficiently capable will generate tree summaries that are technically complete but semantically inaccurate. The tree is built. Queries run. Answers are returned. But the tree navigation is wrong because the summaries misrepresent what the sections actually contain, and the LLM navigates to the wrong nodes.

This failure is silent — the system does not error, it simply retrieves from the wrong sections and generates confidently wrong answers. Teams using local models should rigorously benchmark retrieval accuracy with their specific documents before deploying to production. The recommendation of 4–8 billion parameter models is a floor, not a guarantee.

Latency-Driven User Experience Collapse

A 30-second response time is acceptable in a batch analysis workflow where a document is processed overnight and the results are reviewed the next morning. It is unacceptable in an interactive Q&A interface where a user is waiting in real time. Teams that deploy PageIndex in interactive contexts without understanding the latency profile risk building a system that users find unusable — not because the answers are wrong, but because they arrive too slowly to feel like a conversation.

Cost Overruns in High-Query-Volume Environments

The high per-query token cost of PageIndex scales linearly with query volume. A system that processes 10,000 queries per day at a cost-per-query that is five times higher than a vector RAG alternative generates five times the infrastructure cost. Teams should model the total cost of ownership carefully, particularly if the query volume is expected to grow. PageIndex is economically designed for quality-over-volume scenarios — using it in volume-over-quality scenarios will lead to budget surprises.

Indexing Pipeline as a Single Point of Failure

The quality of every retrieval query depends entirely on the quality of the tree index generated during preprocessing. If the tree generation LLM call fails midway through a large document, produces low-quality summaries for a key section, or misidentifies the document's hierarchical structure, every downstream query is compromised. Unlike vector RAG — where a poor embedding for one chunk degrades performance on queries related to that chunk — a poorly constructed tree can affect retrieval across the entire document. Robust indexing pipelines need validation steps before any index is committed to production.

LiteLLM Ollama Configuration Is Not Fully Documented in PageIndex Sources

Teams building local PageIndex deployments should be aware that the framework's own documentation acknowledges the specific environment variable syntax for configuring Ollama through LiteLLM is not fully specified in PageIndex's own sources. Teams should verify the current LiteLLM provider documentation directly for correct Ollama integration syntax, rather than relying on assumed defaults. Misconfiguration here can result in a system that appears to run but is silently using a different model.

Not a Drop-In Replacement for Vector RAG

PageIndex solves a different retrieval problem than vector RAG. Teams that approach it as a drop-in replacement — swapping it in wherever they currently use vector RAG — will find it is the wrong choice in some of those contexts. It is not better than vector RAG for broad corpus search. It is not faster. It is not cheaper. It is better for deep, precise, reasoning-intensive retrieval within complex structured documents. Using it outside that context means paying the costs without receiving the benefits.

Frequently Asked Questions About Page Index Vectorless Database

What is a Page Index vectorless database in simple terms?

Ans: It is a document retrieval system that does not use embeddings or vector search. Instead, it structures a document as a hierarchical JSON tree (like a table of contents) and lets an LLM navigate that structure using reasoning to find the correct information.

How is Page Index different from traditional vector RAG?

Ans: Traditional RAG retrieves chunks based on similarity between embeddings. Page Index retrieves full sections based on logical relevance, following document structure, cross-references, and context like a human reader would.

Why does Page Index achieve higher accuracy than vector RAG?

Ans: Because it avoids the core failure of similarity-based retrieval. It does not guess based on matching words—it reasons about where the answer should exist within the document and retrieves complete, context-rich sections.

What is FinanceBench and why is it important here?

Ans: FinanceBench is a benchmark designed to test AI systems on complex financial documents. Page Index achieved about 98.7% accuracy on it, compared to roughly 50–65% for traditional vector RAG, demonstrating a major improvement in reliability.

Does Page Index completely replace vector databases?

Ans: No. It is not a universal replacement. Vector databases are still better for fast, large-scale search across many documents. Page Index is best for deep analysis within complex, structured documents.

What types of documents benefit most from Page Index?

Ans: Highly structured and cross-referenced documents such as:

- Financial filings (10-K, 10-Q)

- Legal contracts

- Medical research papers

- Technical documentation

- Academic studies

Can Page Index handle follow-up questions in a conversation?

Ans: Yes. It maintains conversational context, so follow-up queries refine the same document navigation instead of starting from scratch.

Why is Page Index slower than vector RAG?

Ans: Because it involves multiple reasoning steps. The LLM must analyze the tree, traverse nodes, fetch content iteratively, and then generate an answer—rather than performing a single similarity search.

What is the future of Page Index in AI systems?

Ans: It is likely to coexist with vector RAG in hybrid architectures—where vector search handles broad retrieval and Page Index handles deep reasoning within selected documents.

Request a Demo or Ask Us Anything

Click below and let's connect — fast, simple, and no pressure