
TL;DR / Summary

The Model Context Protocol (MCP) is an open standard introduced by Anthropic in November 2024 that defines how AI systems connect to external tools, data sources, and software in a consistent, structured way. Built on JSON-RPC, MCP lets AI applications move beyond static responses by accessing real-time information and executing actions through standardized components such as resources (read-only data) and tools (executable functions). Its core innovation is replacing many custom, fragile connections with one universal, reusable interface, while enabling capabilities like tool discovery and multi-step agent workflows. MCP is now supported on platforms from OpenAI, Google, and Microsoft, and was later contributed to the Linux Foundation. It is rapidly becoming a foundational layer for building practical, connected AI systems, though it requires careful implementation of security controls such as authentication, authorization, and input validation.

Ready to see how it all works? Here’s a breakdown of the key elements:

- Why AI Needs a Universal Integration Standard in 2026

- The Broken Reality of AI Integration Before MCP

- What Is the Model Context Protocol and When Was It Created?

- MCP's Core Definition: The Open Standard Powering AI Connectivity

- How the Model Context Protocol Architecture Works Step by Step

- MCP's Five Core Primitives Explained: Resources, Tools, Prompts, Roots, and Sampling

- MCP vs Traditional REST APIs: A Technical Comparison for Developers

- How MCP Is Directly Solving Enterprise AI Adoption Problems Today

- The Complete MCP Ecosystem: 20+ Real-World Server Integrations

- 7 Key Advantages of Using the Model Context Protocol in Production

- 5 Serious Risks and Cons of MCP Every Developer Must Know

- MCP Security Vulnerabilities Deep Dive: Protecting Your Deployment

- How to Build Your First MCP Server: A Developer's Practical Guide

- How Ruh AI Is Bringing MCP to Traditional Businesses Without a Tech Team

- MCP's Industry Trajectory: From Anthropic's Lab to the Linux Foundation

- Frequently Asked Questions About the Model Context Protocol

- Conclusion: Why MCP Is No Longer Optional for AI Builders

Why AI Needs a Universal Integration Standard in 2026

There is a revolution happening in AI right now, and it isn't happening on the surface. It's not in the chat interfaces, the image generators, or the headline-grabbing benchmarks. It's happening underneath — in the infrastructure that connects AI models to the real world.

For years, the most powerful AI systems in the world suffered from the same foundational limitation: they were islands. Brilliant, capable, sophisticated islands — but islands nonetheless. They could reason about your database, but not read it. They could suggest an email response, but not send it. They could discuss your codebase, but only if you manually pasted the relevant files into a chat window. Every interaction with live data required a human in the loop, doing the tedious work of bridging the gap between what the AI could do and what the AI could actually access.

That gap is now closing. And the tool closing it is the Model Context Protocol — or MCP.

Introduced by Anthropic in November 2024 and open-sourced for the broader developer community, MCP is a standardized communication protocol that allows AI models to connect, in real time, to databases, APIs, file systems, productivity tools, developer platforms, and virtually any digital system. It transforms AI assistants from isolated reasoning engines into active participants in real workflows.

This guide covers everything you need to know: where MCP came from, why it was built, how it works under the hood, where it's already reshaping the industry, how platforms like Ruh AI are making it accessible to every business, and — critically — what the legitimate risks are that demand your attention.

The Broken Reality of AI Integration Before MCP

Before MCP, every AI-to-tool connection required custom code — tightly coupled to a specific model version and API contract. One API change could break the entire pipeline. The result was the "N × M integration problem": N AI applications connecting to M tools required N × M individually maintained codebases. Fragile, expensive, and impossible to scale.

This wasn't a niche frustration. As Ruh AI's guide to using AI without a tech team illustrates, traditional businesses — roofing contractors, legal practices, accounting firms — were locked out of real AI connectivity by the same fragmentation that plagued enterprise engineering teams.

What Is the Model Context Protocol and When Was It Created?

MCP was created by David Soria Parra and Justin Spahr-Summers and formally introduced by Anthropic on November 25, 2024. The origin was practical: Soria Parra's frustration with constantly copying integration code between Claude Desktop and his own IDE sparked the project.

The driving insight — AI integration is a standardization problem, and the industry has solved those before. USB-C replaced incompatible cables. HTTP unified the early internet. MCP does the same for AI: any compliant client connects to any compliant server automatically. As Anthropic's official announcement frames it, MCP is the "USB-C port for AI" — implement the standard once on each side, and the integration burden drops from N × M to N + M.
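To make the N × M versus N + M arithmetic concrete, here is a tiny sketch. The function name and the example counts (5 applications, 20 tools) are made up for illustration.

```python
def integrations_needed(num_apps: int, num_tools: int) -> tuple[int, int]:
    """Return (point_to_point, shared_protocol) integration counts."""
    # Without a standard, every app needs its own connector for every tool.
    point_to_point = num_apps * num_tools
    # With a shared protocol, each app ships one client and each tool one server.
    shared_protocol = num_apps + num_tools
    return point_to_point, shared_protocol


custom, standard = integrations_needed(num_apps=5, num_tools=20)
print(custom, standard)  # 100 25
```

The gap widens as either side grows, which is why the standardization argument gets stronger as the ecosystem expands.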

By December 2025, the open-source bet had paid off: 97 million monthly SDK downloads, 10,000+ active servers, and first-class support across Claude, ChatGPT, Cursor, Gemini, and Microsoft Copilot, per Anthropic's Linux Foundation donation announcement.

MCP's Core Definition: The Open Standard Powering AI Connectivity

The Model Context Protocol is an open-source specification that defines how AI applications communicate with external data sources and tools. Built on JSON-RPC 2.0, MCP provides a standardized "language" that allows Large Language Models to move beyond their static training data and interact with the real-time outside world: databases, APIs, local files, SaaS platforms, and beyond.
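For readers who have not worked with JSON-RPC 2.0 before, the sketch below builds the three message kinds it defines: a request (has an `id` and expects a reply), a response (echoes that `id`), and a notification (no `id`, no reply). The helper names are illustrative, not part of any SDK; `tools/list` and `notifications/initialized` are method names from the MCP specification.

```python
import itertools
import json

_next_id = itertools.count(1)  # simple monotonically increasing request ids


def request(method, params=None):
    """A request carries an id so the reply can be matched to it."""
    msg = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def notification(method, params=None):
    """A notification has no id, so the receiver must not reply."""
    msg = {"jsonrpc": "2.0", "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def response(request_id, result):
    """A successful response echoes the id of the request it answers."""
    return {"jsonrpc": "2.0", "id": request_id, "result": result}


list_tools = request("tools/list")
print(json.dumps(list_tools))
print(json.dumps(response(list_tools["id"], {"tools": []})))
print(json.dumps(notification("notifications/initialized")))
```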

The key word is standardized. MCP is not a specific product, a specific tool, or a specific integration. It is a specification — a set of rules any developer can implement on either side of an AI-to-tool connection. The official MCP documentation at modelcontextprotocol.io defines its scope as covering: data ingestion and transformation, contextual metadata for AI reasoning, bidirectional connections between data sources and AI tools, and the core primitives (Resources, Tools, Prompts, Roots, Sampling) that govern every interaction.

Because MCP is a specification rather than a product, security is not baked in — it depends entirely on how each deployment implements the protocol. This characteristic is both a strength (maximum flexibility) and a meaningful risk that responsible deployments must address head-on. For a broader view of how organizations should structure their approach to AI implementation, the complete AI implementation guide on the Ruh AI blog offers a practical framework that applies directly to MCP-powered deployments.

How the Model Context Protocol Architecture Works Step by Step

MCP operates on a client-server architecture composed of three distinct layers. Understanding how these layers interact is fundamental to understanding both the power and constraints of the protocol. For a detailed technical reference, the official MCP architecture overview provides the authoritative specification.

The Three Components of MCP Architecture

The MCP Host

The Host is the primary AI application the user interacts with directly — the interface layer. Examples confirmed in the specification include Claude Desktop, VS Code, Cursor, and Windsurf. The Host manages the overall conversation, coordinates which servers are available, and presents the AI's final responses to the user.

The MCP Client

The Client lives inside the Host and is invisible to the end user. It is the protocol translator: it takes the LLM's intent and converts it into structured JSON-RPC messages that MCP Servers can understand. Each Client maintains a one-to-one connection with a single MCP Server and handles authentication and response parsing.

The MCP Server

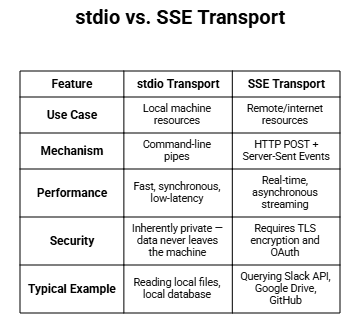

Servers are lightweight programs that expose specific capabilities, like searching GitHub or querying a database, to the Client through the standardized protocol. Servers can run locally on the same machine (using stdio transport) or remotely on external infrastructure (using SSE transport). As the official MCP GitHub organization documents, official and community SDKs now make it possible to write servers in all major programming languages.

The Five-Step Operational Flow

When a user asks their AI assistant, "Find the latest sales report and email it to the team," this sequence occurs:

Step 1: Request & Discovery. The Host/Client queries available MCP Servers to discover what tools exist, such as database_query and email_send.

Step 2: Model Reasoning. The user's question plus the tool manifest is sent to the LLM. The model decides which tools to invoke and in what order.

Step 3: Tool Invocation. The Client calls the selected tool on the appropriate MCP Server.

Step 4: Action & Result. The Server executes the action (running a SQL query, fetching a file) and returns formatted data to the Client.

Step 5: Final Response. This new context is passed back to the LLM, which synthesizes a human-readable answer.
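The five steps can be sketched as a small loop. Everything here is a stand-in: `SERVER_TOOLS` plays the MCP Server, `stub_model` plays the LLM with hard-coded decisions, and no real transport or model API is involved. The tool names `database_query` and `email_send` come from the example above; their signatures are assumptions.

```python
def database_query(report):
    """Hypothetical tool: pretend to fetch a report from a database."""
    return f"[contents of the {report} report]"


def email_send(to, body):
    """Hypothetical tool: pretend to send an email and return the recipient."""
    return to


SERVER_TOOLS = {"database_query": database_query, "email_send": email_send}


def stub_model(question, tool_names, results):
    """Stand-in for the LLM: returns the next tool call or a final answer."""
    if "database_query" not in results:
        return {"tool": "database_query", "arguments": {"report": "latest sales"}}
    if "email_send" not in results:
        return {
            "tool": "email_send",
            "arguments": {"to": "team@example.com", "body": results["database_query"]},
        }
    return {"answer": f"Emailed the report to {results['email_send']}."}


def handle_request(question):
    tool_names = sorted(SERVER_TOOLS)  # Step 1: discover available tools
    results = {}
    while True:
        decision = stub_model(question, tool_names, results)  # Step 2: model reasoning
        if "answer" in decision:
            return decision["answer"]  # Step 5: final response
        name = decision["tool"]
        # Steps 3 and 4: invoke the tool and feed the result back as context
        results[name] = SERVER_TOOLS[name](**decision["arguments"])


print(handle_request("Find the latest sales report and email it to the team"))
```

In a real Host the model is consulted again after every tool result, which is what the `while` loop imitates.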

Transport Layers: stdio vs. SSE

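MCP supports two transport mechanisms: stdio for servers running locally on the same machine, and SSE (Server-Sent Events over HTTP) for servers running remotely. Specification revisions from March 2025 onward replace the original HTTP+SSE transport with Streamable HTTP, which can still use SSE for streaming. All communication uses JSON-RPC 2.0, a lightweight message format supporting Requests, Responses, and Notifications. The MCP specification at modelcontextprotocol.io defines the transport methods in full.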

Every connection begins with an initialization phase where client and server agree on protocol versions before message exchange starts — ensuring compatibility before any real work is done.
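Here is a rough sketch of that version handshake, following the lifecycle rules in the published specification as I understand them: the client proposes a version in an `initialize` request, and the server echoes it if supported or answers with its own latest version. The version strings are real specification revisions; the helper names and the client/server names are illustrative.

```python
SERVER_VERSIONS = ["2025-06-18", "2025-03-26", "2024-11-05"]  # newest first


def initialize_request(request_id, version):
    """Client side: propose a protocol version."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": version,
            "capabilities": {},
            "clientInfo": {"name": "example-client", "version": "0.1.0"},
        },
    }


def initialize_response(msg, supported=SERVER_VERSIONS):
    """Server side: echo the requested version, or fall back to our newest."""
    requested = msg["params"]["protocolVersion"]
    agreed = requested if requested in supported else supported[0]
    return {
        "jsonrpc": "2.0",
        "id": msg["id"],
        "result": {
            "protocolVersion": agreed,
            "capabilities": {"tools": {}},
            "serverInfo": {"name": "example-server", "version": "0.1.0"},
        },
    }


def client_accepts(reply, client_versions):
    """Client side: disconnect if the server picked a version we cannot speak."""
    return reply["result"]["protocolVersion"] in client_versions


reply = initialize_response(initialize_request(1, "2025-03-26"))
print(reply["result"]["protocolVersion"])  # 2025-03-26
```

After a successful exchange the client sends a `notifications/initialized` notification, and only then does normal traffic begin.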

MCP's Five Core Primitives Explained: Resources, Tools, Prompts, Roots, and Sampling

MCP's capabilities are defined by five core building blocks called primitives, divided between those provided by the server and those provided by the client. As InfoQ's detailed technical coverage explains, servers support Prompts, Resources, and Tools; clients support Roots and Sampling.

The protocol specification also includes elicitation, a sixth mechanism that allows a server to request information directly from the user.

Server-Side Primitives

1. Prompts — Standardized Workflow Templates for AI Behavior

Prompts are reusable templates or instructions injected into the LLM's context to guide how it approaches specific tasks. They function as a "standardized rule book" — telling the model what tools are available, how to use them, and what workflow to follow. Getting prompt architecture right is fundamental to reliable agentic behavior. For a deep dive into this, the Ruh AI guide to prompt engineering for autonomous AI agents is one of the most practical resources available on production-ready prompt design.

2. Resources — Read-Only Data for Grounding AI Responses

Resources are read-only structured data objects — files, database rows, product catalogs, documentation — that provide the model with context to reference. The critical characteristic: Resources must never have side effects. They are informational only, analogous to a GET request. When a model reads a Resource, it pulls authoritative, up-to-date context that directly reduces hallucinations.
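A minimal sketch of the read-only idea: each Resource is addressed by a URI and served by a function that only reads. The `catalog://` URI scheme, the product data, and the helper name are invented for illustration; the `contents` / `uri` / `mimeType` / `text` result shape follows the `resources/read` response in the specification as I understand it.

```python
import json

# Hypothetical backing data; a real server would read from a database or file.
CATALOG = {"sku-1": {"name": "Extension ladder", "price": 120}}

# Map each resource URI to a zero-argument reader that has no side effects.
RESOURCES = {
    "catalog://products/sku-1": lambda: json.dumps(CATALOG["sku-1"]),
}


def read_resource(uri):
    """Return the result payload for a resources/read request."""
    if uri not in RESOURCES:
        raise KeyError(f"Unknown resource: {uri}")
    return {
        "contents": [
            {"uri": uri, "mimeType": "application/json", "text": RESOURCES[uri]()}
        ]
    }


before = json.dumps(CATALOG)
result = read_resource("catalog://products/sku-1")
print(result["contents"][0]["text"])
print(json.dumps(CATALOG) == before)  # reading changed nothing
```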

3. Tools — Executable Functions That Let AI Take Action in the World

Tools are executable functions designed specifically for side effects — sending emails, writing to databases, booking flights, executing calculations. These are the POST requests of MCP: the model does something in the world. The model generates a structured call defined by JSON Schema, the server executes the underlying code, and the result is returned. Understanding how to design AI agents that handle tool execution safely and predictably is critical — the Ruh AI breakdown of fatal design flaws in AI agents that refuse commands covers precisely the edge cases where tool design breaks down in production.
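To show what "a structured call defined by JSON Schema" looks like, here is a sketch of one tool definition and a handler with a visible side effect. The `name` / `description` / `inputSchema` fields and the `content` / `isError` result shape follow the specification as I understand it; the `email_send` schema and the in-memory `OUTBOX` are assumptions for the demo.

```python
# What the server advertises in its tools/list response.
EMAIL_SEND_TOOL = {
    "name": "email_send",
    "description": "Send a plain-text email to one recipient.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "to": {"type": "string"},
            "body": {"type": "string"},
        },
        "required": ["to", "body"],
    },
}

OUTBOX = []  # stand-in for a real mail system; appending here is the side effect


def call_tool(name, arguments):
    """Handle a tools/call request and return its result payload."""
    if name != EMAIL_SEND_TOOL["name"]:
        return {
            "content": [{"type": "text", "text": f"Unknown tool: {name}"}],
            "isError": True,
        }
    OUTBOX.append({"to": arguments["to"], "body": arguments["body"]})
    return {
        "content": [{"type": "text", "text": f"Queued email to {arguments['to']}"}],
        "isError": False,
    }


print(call_tool("email_send", {"to": "team@example.com", "body": "Report attached"}))
print(len(OUTBOX))  # 1
```

Unlike a Resource read, calling the tool changes state, which is why Hosts typically gate tool calls behind user approval.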

Client-Side Primitives

4. Roots — Secure Filesystem Boundaries for AI Agents

Roots allow an AI application to interact with specific local files (reading a codebase, analyzing documents) without granting unrestricted access to the user's entire file system. They define explicit, bounded filesystem access zones. This is a critical security primitive: AI agents operate within defined boundaries rather than with open-ended filesystem access.
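One way a server might honor those boundaries is to resolve every requested path and check that it falls under a declared root. This is a sketch of that check, not the protocol's own mechanism: the specification exposes roots as `file://` URIs and leaves enforcement to the implementation. The function name and example paths are hypothetical, and `Path.is_relative_to` needs Python 3.9 or later.

```python
from pathlib import Path


def is_within_roots(candidate: str, roots: list[str]) -> bool:
    """True if candidate, after resolving '..' and symlinks, sits under a root."""
    target = Path(candidate).resolve()
    return any(target.is_relative_to(Path(root).resolve()) for root in roots)


roots = ["/tmp/mcp-demo/project"]
print(is_within_roots("/tmp/mcp-demo/project/src/main.py", roots))      # True
print(is_within_roots("/tmp/mcp-demo/project/../secrets.txt", roots))   # False
```

Resolving before comparing matters: a plain string prefix check would wave through `project/../secrets.txt`.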

5. Sampling — The Feature That Makes AI Truly Bidirectional

Sampling is one of MCP's most architecturally distinctive features. It allows a server to initiate a request back to the LLM, inverting the typical direction of communication. If an MCP Server is analyzing a complex database schema, for example, it can use Sampling to ask the LLM to help formulate a relevant SQL query. As Palo Alto Networks' Unit 42 research on MCP sampling attack vectors notes, this bidirectional capability "allows servers to leverage LLM intelligence for complex tasks" — and simultaneously introduces new security considerations. For context on how self-improving agent loops relate to this, see the Ruh AI guide to RLHF and self-improving AI agents.
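The reversed direction is easier to see in message form. In this sketch the server builds a `sampling/createMessage` request and the client decides whether to forward it to the model. The method name and the `messages` / `maxTokens` / `role` / `content` / `model` / `stopReason` fields follow the specification as I understand it; the `approve` and `complete` callbacks, the error code, and the model name are assumptions.

```python
def sampling_request(request_id, prompt, max_tokens=200):
    """Server side: ask the client's LLM for a completion."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "sampling/createMessage",
        "params": {
            "messages": [{"role": "user", "content": {"type": "text", "text": prompt}}],
            "maxTokens": max_tokens,
        },
    }


def client_handle_sampling(msg, approve, complete):
    """Client side: let a human approve, then run the model and reply."""
    if not approve(msg["params"]):
        return {
            "jsonrpc": "2.0",
            "id": msg["id"],
            "error": {"code": -1, "message": "User rejected sampling request"},
        }
    text = complete(msg["params"]["messages"])
    return {
        "jsonrpc": "2.0",
        "id": msg["id"],
        "result": {
            "role": "assistant",
            "content": {"type": "text", "text": text},
            "model": "stub-model",
            "stopReason": "endTurn",
        },
    }


req = sampling_request(7, "Write a SQL query that counts orders per customer.")
reply = client_handle_sampling(
    req,
    approve=lambda params: True,
    complete=lambda messages: "SELECT customer_id, COUNT(*) FROM orders GROUP BY customer_id;",
)
print(reply["result"]["content"]["text"])
```

Keeping the approval step on the client is the main defense against the sampling-based attacks mentioned above, since the server never talks to the model directly.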

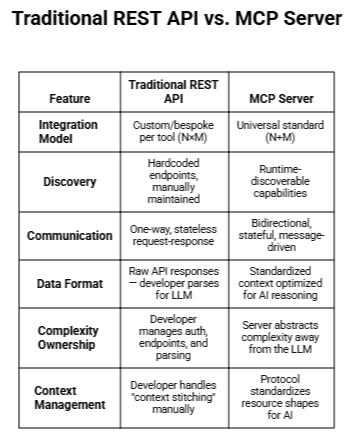

MCP vs Traditional REST APIs: A Technical Comparison for Developers

One of the most important questions for developers and architects evaluating MCP is: How does this differ from just building REST API integrations? The answer goes deeper than most people initially expect.

The deepest architectural difference is conceptual, not technical. A REST API is a way for one program to talk to another. An MCP Server is a way for a system to declare its capabilities to an AI, so the model can autonomously decide how to use them. That shift from prescriptive integration to autonomous capability discovery is what makes MCP architecturally distinct — not just incrementally better at the same task.

The emergence of hidden inter-agent communication layers built on top of MCP is another dimension worth understanding. The Ruh AI article on silent protocols and hidden AI agent communication networks explores how these invisible orchestration layers are reshaping the way agentic systems talk to one another — with implications for both capability and security.

How MCP Is Directly Solving Enterprise AI Adoption Problems Today

MCP is addressing four concrete barriers that have slowed enterprise AI adoption since 2022.

Integration complexity. The biggest blocker was never model capability — it was the cost of connecting models to real data. One MCP server, built once, works across every compliant AI client, eliminating bespoke integration cycles. As Ruh AI's analysis of AI in MLOps shows, this is reshaping the entire machine learning operations pipeline.

Autonomous agents. MCP's bidirectional communication — Tools for taking actions, Sampling for mid-workflow model requests — enables agents to plan and execute multi-step tasks across multiple systems. The GitHub Blog notes developers now routinely build agents with access to hundreds of tools across dozens of MCP servers.

Hallucination reduction. MCP Resources pull live, authoritative data at query time, eliminating the stale-context problem behind most enterprise AI failures. In AI-driven customer journey mapping, this difference between live CRM data and training-set guesses is the line between useful and unreliable.

Vendor decoupling. MCP separates the model entirely from the tools. Swap AI providers, upgrade models, or evaluate alternatives without touching a single MCP server. As Ruh AI's marketing operations analysis confirms, this kind of decoupling is now standard practice for AI-native teams.

The Complete MCP Ecosystem: 20+ Real-World Server Integrations

Since Anthropic's launch, a rapidly expanding ecosystem of pre-built servers has emerged — shared in open-source repositories including the "Awesome MCP" repo on GitHub — making "build once, integrate everywhere" increasingly real.

Enterprise and Communication — Slack (read and post to team channels), Google Drive (access and analyze cloud files), GitHub/Git (manage repos, PRs, and issues), Salesforce (live CRM data queries and updates), Notion and Google Calendar (scheduling and task automation).

Data and Infrastructure — PostgreSQL and MongoDB (live SQL queries and record updates), Google BigQuery (warehouse-scale data analysis), Stripe (payment and invoice access), Kubernetes and AWS/GCP cloud infrastructure (natural language infrastructure management).

Developer and Productivity — Obsidian (read/write private note vaults), Figma (access UI designs to generate web applications), Context7 and OpenAI Docs MCP (live technical documentation to cut coding hallucinations), Sentry (error log access for autonomous debugging), Docker (orchestration of containerized MCP servers).

Web, Search, and Specialized Utilities — Puppeteer and Playwright (browser automation), DuckDuckGo/Brave Search/Google Search (live internet queries), YouTube Transcripts (fetch and summarize video content), Kali Linux (ethical hacking tooling), Windows Ecosystem connectors for File Explorer and Settings.

7 Key Advantages of Using the Model Context Protocol in Production

1. Eliminates the N × M Integration Tax — A server built once works with any MCP-compliant client. The Linux Foundation's AAIF announcement cites this as the core reason MCP became the universal standard for AI-to-tool connectivity.

2. Runtime Discovery — No Hardcoded Endpoints — MCP servers expose a machine-readable capability surface that clients query dynamically at runtime. No manual endpoint maintenance, no broken integrations when APIs change.

3. Real-Time Data Access Reduces Hallucinations — Resources pull live, authoritative data at query time. A model with access to live GitHub issues or current CRM records answers from fact, not from training memory.

4. Bidirectional Sampling Enables Agentic Loops — Through Sampling (servers requesting LLM help mid-workflow) and elicitation (servers requesting user input), MCP enables multi-turn agentic orchestration that is architecturally impossible in one-directional REST APIs.

5. Full Vendor Decoupling — The abstraction layer separates the model entirely from the tools. Switch AI providers or upgrade models without touching a single MCP server.

6. AI-Optimized Context Improves Reasoning Quality — MCP standardizes resource shapes specifically for AI reasoning — not for generic software consumption. Better-formatted context means better output and more efficient use of the context window.

7. A Growing Pre-Built Ecosystem — OpenAI's Agents SDK confirms MCP adoption across all major AI platforms. Ruh AI's cold email analysis shows MCP-connected outreach achieving 12–18% response rates versus the 2–3% industry average — because the AI has live prospect data, not stale templates.

5 Serious Risks and Cons of MCP Every Developer Must Know

Because MCP is a specification, not a product, none of these risks are handled automatically. Every one requires deliberate engineering.

1. No Native Authentication, Authorization, or Encryption — The protocol has zero built-in security controls. As Red Hat's technical analysis documents, many implementations run over plain HTTP, leaving systems exposed to impersonation and man-in-the-middle attacks unless TLS is explicitly implemented.

2. Arbitrary Code Execution Surface — MCP Tools let the LLM execute real functions with real consequences. A compromised server can misrepresent what a tool does, tricking an agent into destructive actions. As Checkmarx's research warns, an over-privileged agent could accidentally or intentionally destroy critical data.

3. Over-Privileged AI Agents — Connecting agents to CRMs, financial systems, and local file systems without strict permission scoping creates serious data exposure. MCP does not enforce least-privilege — you must build it in deliberately. This has direct regulatory consequences in financial services and healthcare deployments.

4. Prompt Injection and Tool Poisoning Are Active Threats — Unit 42's research and Lakera's analysis document live attack classes: hidden instructions embedded in data sources, manipulated tool metadata, and XSS via unsanitized LLM output. The timeline of real MCP breaches includes exploits in GitHub's official MCP server and Anthropic's own Filesystem-MCP server. The Ruh AI guide to prompt engineering for production agents covers the design-level defenses that matter most.

5. Untrusted Third-Party Servers — Thousands of community-built servers exist with no standardized security audit or certification process. Organizations connecting to third-party servers have no inherent guarantee the server behaves as documented or hasn't been compromised after initial adoption.

MCP Security Vulnerabilities Deep Dive: Protecting Your Deployment

Understanding the risks is only valuable if it leads to concrete mitigations. The following practices are drawn from Red Hat's MCP security guidance, Checkmarx's MCP security research, and OWASP's LLM Security Top 10.

Mandatory User Consent Before Any Tool Execution

Systems must require explicit user approval before an AI agent accesses data or invokes a tool. Anthropic's official MCP documentation specifies that this human-in-the-loop control is intentional by design.
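A consent gate can be as simple as a wrapper that refuses to run a tool until a callback says yes. This is a sketch under the assumption that the Host supplies `ask_user` (for example, a dialog) and `execute` (the real tool dispatcher); both names are hypothetical.

```python
def call_with_consent(tool_name, arguments, execute, ask_user):
    """Run a tool only after the user explicitly approves this exact call."""
    prompt = f"Allow tool '{tool_name}' with arguments {arguments}?"
    if not ask_user(prompt):
        raise PermissionError(f"User declined {tool_name}")
    return execute(tool_name, arguments)


executed = []


def fake_execute(name, arguments):
    executed.append(name)
    return "done"


print(call_with_consent("email_send", {"to": "team@example.com"}, fake_execute, lambda p: True))
try:
    call_with_consent("delete_records", {"table": "customers"}, fake_execute, lambda p: False)
except PermissionError as exc:
    print(exc)
print(executed)  # only the approved call ran
```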

Scoped Credentials and the Principle of Least Privilege

Provide AI agents with only the minimum necessary credentials and permissions for their specific task. The MDPI research paper on prompt injection in LLM systems confirms that robust input validation, strong authentication, and least-privilege design are the foundational AppSec principles that secure MCP ecosystems.
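In code, least privilege often starts as a per-agent allowlist that defaults to nothing. The agent names, tool names, and registry below are made up for the sketch; MCP itself does not define this table.

```python
# Hypothetical scopes: each agent may call only the tools listed for it.
AGENT_SCOPES = {
    "support-bot": frozenset({"crm_read"}),
    "sales-bot": frozenset({"crm_read", "email_send"}),
}


def authorize(agent_id, tool_name):
    """Raise unless this agent was explicitly granted this tool."""
    allowed = AGENT_SCOPES.get(agent_id, frozenset())  # unknown agents get nothing
    if tool_name not in allowed:
        raise PermissionError(f"{agent_id} is not allowed to call {tool_name}")


def call_tool(agent_id, tool_name, arguments, registry):
    authorize(agent_id, tool_name)  # check before touching the tool
    return registry[tool_name](**arguments)


registry = {
    "crm_read": lambda account: f"record for {account}",
    "email_send": lambda to: f"sent to {to}",
}
print(call_tool("support-bot", "crm_read", {"account": "acme"}, registry))
try:
    call_tool("support-bot", "email_send", {"to": "x@example.com"}, registry)
except PermissionError as exc:
    print(exc)
```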

Mandatory TLS for All Remote Transport Connections

Implement Transport Layer Security (TLS) for all SSE transport connections to ensure encryption and authentication, preventing impersonation and on-path attacks.
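On the client side, one simple guard is to refuse any remote server URL that is not `https` and to connect with a TLS context that verifies certificates. This sketch uses only Python's standard `ssl` and `urllib.parse` modules; the function names and the example URL are placeholders.

```python
import ssl
from urllib.parse import urlsplit


def require_tls(url: str) -> str:
    """Reject remote MCP server URLs that would travel over plain HTTP."""
    if urlsplit(url).scheme != "https":
        raise ValueError(f"Refusing non-TLS MCP endpoint: {url}")
    return url


def strict_tls_context() -> ssl.SSLContext:
    """Default context verifies certificates and hostnames; also pin a TLS floor."""
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


print(require_tls("https://mcp.example.com/sse"))
context = strict_tls_context()
print(context.check_hostname, context.verify_mode == ssl.CERT_REQUIRED)
```

The context would then be passed to whatever HTTP client opens the connection.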

Strict Input Validation on All Tool Parameters

All tool inputs must be strictly validated via JSON Schema before execution. As Practical DevSecOps's 2026 MCP security analysis documents, unvalidated inputs create SQL injection, command injection, and parameter manipulation vulnerabilities that can be triggered by AI-generated tool parameters.
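Here is a small sketch of validating model-generated arguments before they reach a database. It hand-checks one field rather than running a full JSON Schema validator, and it pairs the check with a parameterized query so that even a value that slipped through could not alter the SQL. The `customers` table and function names are invented for the demo.

```python
import sqlite3


def validate_customer_lookup(arguments):
    """Accept exactly one field, customer_id, as a positive integer."""
    extra = set(arguments) - {"customer_id"}
    if extra:
        raise ValueError(f"Unexpected parameters: {sorted(extra)}")
    customer_id = arguments.get("customer_id")
    # bool is a subclass of int in Python, so exclude it explicitly
    if isinstance(customer_id, bool) or not isinstance(customer_id, int) or customer_id <= 0:
        raise ValueError("customer_id must be a positive integer")
    return customer_id


def lookup_customer(conn, arguments):
    customer_id = validate_customer_lookup(arguments)
    # Placeholder binding keeps the value out of the SQL text entirely.
    row = conn.execute("SELECT name FROM customers WHERE id = ?", (customer_id,)).fetchone()
    return row[0] if row else None


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
conn.execute("INSERT INTO customers VALUES (1, 'Acme Roofing')")

print(lookup_customer(conn, {"customer_id": 1}))
try:
    lookup_customer(conn, {"customer_id": "1 OR 1=1"})
except ValueError as exc:
    print(exc)
```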

Comprehensive Audit Logging of All MCP Interactions

Maintain robust logs of every interaction — every data access, every tool invocation, every piece of data that moves through the system. Without logging, you are running a powerful, action-capable AI system with no visibility into what it is doing.
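A lightweight way to get that visibility is to wrap every tool in a decorator that writes one JSON line per call, whether it succeeds or fails. This is a sketch: the in-memory `StringIO` stands in for an append-only file or log service, and a production version would probably redact sensitive arguments before logging them.

```python
import functools
import io
import json
import time


def audited(log_stream):
    """Decorator factory: append one JSON line per tool call to log_stream."""
    def decorator(tool):
        @functools.wraps(tool)
        def wrapper(**arguments):
            entry = {"ts": time.time(), "tool": tool.__name__, "arguments": arguments}
            try:
                result = tool(**arguments)
                entry["status"] = "ok"
                return result
            except Exception as exc:
                entry["status"] = "error"
                entry["error"] = repr(exc)
                raise
            finally:
                # Runs on success and on failure, so no call goes unrecorded.
                log_stream.write(json.dumps(entry, default=str) + "\n")
        return wrapper
    return decorator


audit_log = io.StringIO()


@audited(audit_log)
def email_send(to, body):
    return f"queued for {to}"


email_send(to="team@example.com", body="Q3 report attached")
print(audit_log.getvalue().strip())
```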

How to Build Your First MCP Server: A Developer's Practical Guide

The official MCP Python SDK and architecture docs have the full spec. Here is the practical path.

Languages: TypeScript/JavaScript, Python, Java, Kotlin, C# (with Microsoft), Go, PHP, Ruby, Rust, and Swift — all at the official MCP GitHub org.

Manual SDK path: (1) Import the server class from your language's SDK (for example, McpServer in the TypeScript SDK or FastMCP in the Python SDK). (2) Define Resources with a name, URI, and fetch callback. (3) Define Tools with a name, description, and Zod or JSON Schema validation, so malformed or hallucinated arguments are rejected before they reach your code. (4) Set transport: stdio for local, SSE for remote.
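To show what an SDK is doing for you underneath, here is a deliberately tiny server written with only the Python standard library. It is not the official SDK and skips most of the protocol (no real version negotiation, no resources, no schema validation); it just answers `initialize`, `tools/list`, and `tools/call` over newline-delimited JSON, the framing the stdio transport uses. The `add` tool and all names here are illustrative.

```python
import json

TOOLS = [
    {
        "name": "add",
        "description": "Add two integers.",
        "inputSchema": {
            "type": "object",
            "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
            "required": ["a", "b"],
        },
    }
]


def handle(msg):
    """Map one JSON-RPC message to a reply, or None for notifications."""
    method = msg.get("method")
    if "id" not in msg:
        return None  # notifications (e.g. notifications/initialized) get no reply
    if method == "initialize":
        # Simplification: echo the client's version instead of negotiating.
        result = {
            "protocolVersion": msg["params"]["protocolVersion"],
            "capabilities": {"tools": {}},
            "serverInfo": {"name": "demo-server", "version": "0.1.0"},
        }
    elif method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call" and msg["params"]["name"] == "add":
        args = msg["params"]["arguments"]
        total = args["a"] + args["b"]
        result = {"content": [{"type": "text", "text": str(total)}], "isError": False}
    else:
        return {
            "jsonrpc": "2.0",
            "id": msg["id"],
            "error": {"code": -32601, "message": "Method not found"},
        }
    return {"jsonrpc": "2.0", "id": msg["id"], "result": result}


def serve(reader, writer):
    """Read one JSON message per line and write one JSON reply per line."""
    for line in reader:
        if not line.strip():
            continue
        reply = handle(json.loads(line))
        if reply is not None:
            writer.write(json.dumps(reply) + "\n")
            writer.flush()
```

Running `serve(sys.stdin, sys.stdout)` would turn this into a process a Host could launch locally. For anything beyond a demo, the official SDKs handle the parts omitted here.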

Vibe coding path: Give an LLM a build prompt describing what you want (e.g., "a PostgreSQL connector"). It outputs server.py, requirements.txt, and a Dockerfile. Containerize with Docker for isolated deployment.

Connect to a client: Edit the Host's config file. Claude Desktop uses claude_desktop_config.json; Codex uses config.toml. The Host prompts for explicit user consent on first tool invocation.
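As an illustration of what such a config entry contains, the sketch below generates a Claude Desktop style JSON fragment: a top-level `mcpServers` object keyed by server name, each with a `command` and `args`. The server name and script path are placeholders, and the helper function is not part of any tool; check your Host's documentation for the exact file location and supported keys.

```python
import json


def claude_desktop_entry(name, command, args, env=None):
    """Build the JSON structure for one locally launched (stdio) server."""
    entry = {"command": command, "args": list(args)}
    if env:
        entry["env"] = dict(env)  # e.g. API keys the server process needs
    return {"mcpServers": {name: entry}}


config = claude_desktop_entry("demo-server", "python", ["/path/to/server.py"])
print(json.dumps(config, indent=2))
```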

Test with MCP Inspector: A dedicated visual testing tool. A green "active" status in Claude Desktop confirms your server is live. Always use the latest version — earlier releases had documented remote code execution vulnerabilities.

How Ruh AI Is Bringing MCP to Traditional Businesses Without a Tech Team

The discussion of MCP so far has been largely technical — architecture, primitives, transport layers, security. But there is a parallel story that is equally important: what happens when MCP-powered AI becomes accessible to businesses that have no engineering team, no developer resources, and no appetite for protocol specifications?

That is the story Ruh AI is telling — and it is one of the most significant real-world demonstrations of what MCP infrastructure unlocks when abstracted into a practical product.

The "Conductor Not Coder" Approach to MCP

Ruh AI's core philosophy — what they call the "Conductor Not Coder" mindset — is a direct response to the same fragmentation problem MCP was designed to solve, translated for a non-technical audience. As their guide to using AI without a tech team in 2026 explains:

"Think of yourself less like a developer and more like an orchestra conductor. Your job isn't to play every instrument. Your job is to direct the right specialists to the right tasks at the right time."

For traditional businesses — roofing contractors, legal practices, accounting firms, regional service companies — this framing removes the single biggest psychological barrier to AI adoption: the assumption that real AI connectivity requires technical expertise.

Ruh AI's Ruh Developer platform explicitly supports Custom Tools (MCPs) as a first-class product feature, allowing businesses to define, build, test, and deploy custom MCP servers through a visual interface — not raw code. Their Ruh Work-Lab then orchestrates those MCP-connected tools into complete agentic workflows: preset agents, custom workflows, and a marketplace of pre-built integrations that any business can activate without a single line of configuration.

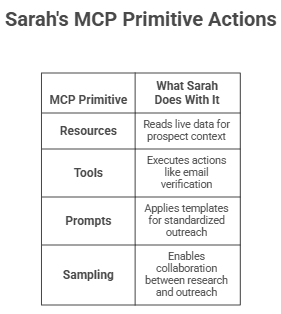

Sarah: An AI SDR Built on MCP-Connected Agents

The most concrete example of Ruh AI's MCP adoption is Sarah, Ruh AI's AI SDR — a multi-agent AI system that handles the entire sales development workflow: prospecting, research, personalization, outreach, qualification, and meeting booking. Sarah is not a chatbot. She is an always-on, 24/7 sales development representative whose underlying architecture depends entirely on the kind of real-time, live-data connectivity that MCP enables.

Here is how MCP primitives map directly to what Sarah does in production:

The result, per Ruh AI's published deployment numbers: 3x increase in qualified leads, 15% improvement in win rates, 80% reduction in sales development costs, and 55% improvement in response rates compared to manual outreach. Compare that to the 2–3% industry average for cold email — an improvement that is only possible because the AI has real-time access to prospect data through MCP-style tool connections, not just templates loaded from training.

MCP for Traditional Business Verticals

Ruh AI's MCP-powered approach is not limited to sales. Their platform extends the same MCP infrastructure philosophy to multiple business functions that traditional companies run on:

Financial Services — As covered in Ruh AI's analysis of AI employees in financial services, MCP-connected AI agents can query live financial data, compliance databases, and client records to provide accurate, contextually relevant responses — eliminating the hallucination risk that makes generic AI unsuitable for regulated industries.

Healthcare — The Ruh AI guide to AI augmenting healthcare workflows demonstrates how MCP-connected agents read live patient scheduling data, referral records, and appointment systems to automate administrative workflows that previously required human staff — while keeping clinicians in the loop for any action requiring professional judgment.

Marketing Operations — In Ruh AI's analysis of AI eliminating campaign bottlenecks, MCP tool connections to ad platforms, CRMs, and analytics dashboards allow marketing agents to read live campaign performance, generate optimization recommendations, and execute changes — replacing the manual reporting cycles that slow most marketing teams down.

Customer Journey Mapping — The Ruh AI guide to AI-driven customer journey mapping shows how MCP Resources connected to CRM data, support ticket systems, and behavioral analytics enable AI to map real customer journeys from live data — not from hypothetical personas or static spreadsheets.

Why Ruh AI's Approach to MCP Matters Beyond the Platform Itself

Ruh AI's implementation of MCP is significant not just because it makes a sophisticated protocol accessible to non-technical businesses — but because it demonstrates the complete arc of what MCP was designed to enable.

Anthropic built MCP so that AI models could stop being islands. Ruh AI built a platform so that businesses could stop needing developers just to get those connections working. The result is a stack where a roofing contractor or a solo attorney can deploy a multi-agent AI system that reads their CRM, personalizes outreach based on live prospect data, books meetings on their calendar, and updates records automatically — all without writing a single line of code, and all powered by the same MCP primitives that enterprise engineering teams are implementing at scale.

That is the full promise of MCP realized at every level of the market.

If you are exploring how your organization should structure its AI adoption — not just the technical infrastructure, but the organizational and workflow design decisions — the complete AI implementation guide on the Ruh AI blog is one of the most practical frameworks available. And if you are ready to see what MCP-powered AI looks like for your specific business, the Ruh AI team is available to walk through your situation directly.

MCP's Industry Trajectory: From Anthropic's Lab to the Linux Foundation

November 2024: Anthropic releases MCP as open source. March 2025: OpenAI adopts it across the Agents SDK, Responses API, and ChatGPT desktop. Google DeepMind and Microsoft Copilot Studio follow. Within nine months, what started as a developer's frustration with copy-pasting integration code became what TechCrunch described as "the de facto protocol for connecting AI systems to real-world data and tools."

December 2025: Anthropic donates MCP to the Agentic AI Foundation (AAIF) under the Linux Foundation, co-founded with Block and OpenAI, backed by Google, Microsoft, AWS, Cloudflare, and Bloomberg. Per InfoQ's coverage, MCP joined goose and AGENTS.md as a founding project — vendor-neutral, community-governed infrastructure by design.

At donation: 97 million monthly SDK downloads, 10,000+ active servers. Co-creator David Soria Parra put the ambition plainly in TechCrunch: "Build something once as a developer and use it across any client." For where agent infrastructure heads next, the Ruh AI RLHF guide offers useful context.

Conclusion: Why MCP Is No Longer Optional for AI Builders

MCP is infrastructure — and infrastructure is precisely what AI was missing to move from impressive demo to reliable, real-world utility.

Anthropic released it in November 2024 to replace fragile, custom integrations with a universal standard. One year later: 97 million monthly SDK downloads, 10,000+ active servers, industry-wide adoption from OpenAI, Google, and Microsoft, and governance transferred to the Agentic AI Foundation under the Linux Foundation.

The most telling signal of MCP's maturity is not technical — it's market-level. Platforms like Ruh AI are deploying MCP-powered agents for traditional businesses with no engineering team. When a roofing contractor can run a live-data AI SDR that books meetings automatically, MCP has crossed from "developer infrastructure" to "business infrastructure."

The risks remain real. Red Hat, Checkmarx, and documented breach history confirm: MCP deployed carelessly creates real exposure. Deploy it deliberately and it is one of the most powerful tools in modern AI infrastructure.

The standard is here, it is growing, and it is being adopted at every level of the market. Understanding it is no longer optional.

Frequently Asked Questions About the Model Context Protocol

Q: Who created the Model Context Protocol and when?

Ans: MCP was created by David Soria Parra and Justin Spahr-Summers at Anthropic, officially released as an open-source standard on November 25, 2024. In December 2025, Anthropic donated MCP to the Agentic AI Foundation under the Linux Foundation.

Q: Is MCP specific to Claude or Anthropic's products?

Ans: No. MCP is an open-source specification. OpenAI, Google DeepMind, Microsoft, and thousands of independent developers have all adopted it. The protocol is model-agnostic and vendor-neutral. Platforms like Ruh AI build MCP-powered agents that are not tied to any single model provider.

Q: What programming languages can I use to build an MCP server?

Ans: Official and community SDKs are available for TypeScript/JavaScript, Python, Java, Kotlin, C#, Go, PHP, Ruby, Rust, and Swift. See the official MCP GitHub organization for all SDKs.

Q: What is the difference between an MCP Resource and an MCP Tool?

Ans: Resources are read-only data objects that provide context — they never have side effects (like a GET request). Tools are executable functions that perform actions and can change the state of external systems (like a POST request).

Q: Is MCP secure by default?

Ans: No. MCP is a specification with no native authentication, authorization, or encryption. Security depends entirely on how the protocol is deployed. Production deployments must implement TLS for remote transport, scoped credentials, strict input validation, mandatory user consent, and comprehensive audit logging. See OWASP's LLM Top 10 for the broader security framework that applies.

Q: Do I need a technical team to benefit from MCP-powered AI?

Ans: Not necessarily. Platforms like Ruh AI abstract MCP complexity into visual, no-code interfaces. As their guide to using AI without a tech team demonstrates, traditional businesses can deploy MCP-powered agents for sales, marketing, customer service, and operations without writing any code. Contact Ruh AI to understand what is possible for your specific situation.

Q: What is MCP Sampling and why does it matter?

Ans: Sampling allows an MCP Server to initiate a request back to the LLM — inverting the typical direction of communication. It enables the bidirectional "agentic loop" that underlies genuinely autonomous AI agents. However, as Unit 42's security research documents, Sampling also introduces new attack vectors that must be explicitly mitigated.

Q: How does MCP differ from a REST API?

Ans: A REST API is a way for one program to talk to another. An MCP server is a way for a system to declare its capabilities to an AI so the model can autonomously decide how to use them. MCP also supports bidirectional communication, runtime capability discovery, stateful sessions, and AI-optimized context formats.

Q: Where can I find pre-built MCP servers and official documentation?

Ans: The official MCP documentation is at modelcontextprotocol.io. Pre-built servers are available in the "Awesome MCP" repository on GitHub. For no-code MCP deployment, explore the Ruh AI platform and the Ruh AI blog for practical guidance on real-world deployment.
