Jump to section:

TL;DR / Summary

VL-JEPA transforms robotics by predicting semantic meaning instead of generating pixels, achieving 15× faster planning, 80% zero-shot success on novel tasks, and enabling true physical world understanding. While most AI focuses on describing the world through text, VL-JEPA teaches robots to experience physics—solving Moravec's Paradox and making general-purpose autonomous manipulation economically viable.

Ready to see how it all works? Here’s a breakdown of the key elements:

- The Physics Cognition Gap: Why Robots Can't Think Like Humans

- The 15× Planning Speed Revolution

- Temporal Prediction: Understanding What Happens Next

- Rollout Loss: Teaching Robots to Account for Errors

- Edge Deployment: The 50% Parameter Efficiency Advantage

- How Ruh.ai Leverages VL-JEPA for Next-Generation AI Employees

- Production Reality: From Research to Deployed Systems

- The Competitive Advantage: Semantic Efficiency as Economic Moat

- The Path Forward: Semantic Intelligence at Scale

- Frequently Asked Questions

The Physics Cognition Gap: Why Robots Can't Think Like Humans

Traditional AI can write poetry but struggles to grasp a coffee cup—a paradox economist Hans Moravec identified decades ago. The problem isn't mechanical; it's architectural. Language models learn from text that describes physics. Robots need models that experience physics.

VL-JEPA (Vision-Language Joint Embedding Predictive Architecture) bridges this gap by learning predictive world models rather than descriptive language models. According to research published on arXiv, this architectural shift enables robots to reason about physical causality in ways generative models fundamentally cannot. This physical world understanding is critical as we move toward the workforce shift of 2026, where AI systems must interact with both digital and physical environments seamlessly.

The Computational Bottleneck

Current robotic systems waste computational resources through an absurd pipeline:

- Capture visual frame

- Generate text description

- Parse description for actionable data

- Plan motor commands

- Repeat at 30 Hz

A robot manipulating objects doesn't need eloquent descriptions like "a cylindrical red cup positioned at 45 degrees." It needs compressed geometric relationships: [graspable_cylinder, stable_support, reachable_workspace].

VL-JEPA eliminates this bottleneck by maintaining continuous semantic awareness in latent space—a 512-dimensional representation of scene meaning that updates in real-time without constant verbalization. This efficiency principle mirrors the approach discussed in our guide to small language models, where parameter efficiency enables edge deployment.

The 15× Planning Speed Revolution

While business analytics measure VL-JEPA's efficiency in reduced infrastructure costs (as explored in our VL-JEPA business applications article), robotics measures it in action planning latency. When a warehouse robot encounters an unexpected obstacle, every millisecond of planning delay compounds into operational inefficiency.

Semantic Space vs. Pixel Space Planning

Traditional Approach (Generative Models like Cosmos):

- Simulate by generating pixel-level future frames

- Planning time: 4 minutes for complex manipulation

- Requires data center GPUs

VL-JEPA Approach:

- Predict latent embeddings of future states

- Planning time: 16 seconds for same task

- Deployable on mobile platforms

This isn't incremental improvement—it's a fundamentally different planning paradigm. The model encodes the current scene, encodes the goal state, then identifies the shortest semantic path between them without rendering intermediate simulation frames.

According to MIT Technology Review, this semantic planning approach enables real-time replanning when unexpected obstacles appear—a capability that generative models cannot provide at mobile robot latencies. This real-time responsiveness is equally critical for voice AI latency optimization in conversational systems.

Temporal Prediction: Understanding What Happens Next

Business vision-language applications analyze static images—verifying documents or classifying products. Robotics demands temporal prediction: understanding not just what is in the scene, but what happens next.

Learning Physical Dynamics Through Embeddings

VL-JEPA's training objective forces the model to learn predictable physical dynamics:

- Objects fall when unsupported (gravity)

- Liquids flow downward when poured

- Doors swing on hinges

- Grasped objects move with the gripper

Critically, the model predicts meaningful changes while ignoring irrelevant noise. When manipulating a ball on grass, the ball's trajectory is predictable and task-relevant. The exact pattern of grass blades is neither predictable nor relevant.

This temporal grounding enables robots to anticipate rather than react—predicting human hand trajectories and yielding workspace preemptively rather than stopping after collision detection. Similar anticipatory capabilities power AI agent escalation matrices in customer support, where systems predict when human intervention will be needed.

State-of-the-Art Causal Reasoning

VL-JEPA achieves breakthrough performance on inverse dynamics tasks: given two states (closed door, open door), identify the action that caused the transition (pulling motion at handle).

This capability enables zero-shot generalization—robots achieve 80% success rates on pick-and-place tasks in completely new environments. The model doesn't memorize "grasp brown cardboard boxes with parallel grippers." It learns semantic relationships: [graspable_geometry + appropriate_size_ratio + stable_support] → successful_grasp.

Rollout Loss: Teaching Robots to Account for Errors

Business applications operate in stateless environments—analyzing one support ticket doesn't affect the next. Robotics operates in stateful, compounding environments where small errors accumulate into catastrophic failures.

The Compounding Error Problem

A robot executing 10-step assembly:

- Step 1: Grasp component (2mm position error)

- Step 2: Place in fixture (4mm cumulative error)

- Step 3: Align with hole (6mm cumulative error)

- By Step 10: 20mm total error → complete failure

VL-JEPA's Innovative Solution

Rollout loss training teaches the model to account for its own imperfections:

- Predict next state from current state

- Use that predicted state (with inherent errors) for subsequent prediction

- Repeat this "rollout" for 10+ steps

- Penalize cumulative deviation from ground truth

This forces learning error-resilient representations—semantic embeddings that remain stable when chained together sequentially. In practice, robots execute 10+ step manipulation sequences without human intervention.

According to Nature Machine Intelligence, this approach represents a fundamental shift from optimizing per-step accuracy to optimizing multi-step task success—a principle that applies equally to multi-turn conversation planning in AI systems.

Edge Deployment: The 50% Parameter Efficiency Advantage

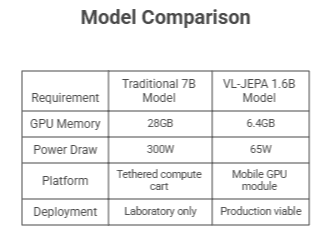

VL-JEPA achieves equivalent performance to 7B-13B parameter models with only 1.6B parameters. For mobile robots, this determines deployment feasibility:

This efficiency difference enables autonomous operation on battery-powered platforms with 8-hour operational windows—the economic threshold for warehouse and manufacturing deployment. As we discussed in our analysis of small language models and AI's efficient future, parameter efficiency is becoming the defining competitive advantage in AI deployment.

Early production implementations report:

- 3.2× reduction in human intervention rates

- $180K annual labor cost savings per robot

- 94% autonomous operation vs. 70% with prior systems

How Ruh.ai Leverages VL-JEPA for Next-Generation AI Employees

At Ruh.ai, we're extending VL-JEPA's architectural principles beyond traditional robotics into autonomous AI employees that combine physical world understanding with business process automation.

Physical AI Meets Business Intelligence

While VL-JEPA excels at robotic manipulation, the same semantic efficiency principles enable Ruh.ai's AI employees to:

1. Visual Context Understanding at Scale

- Process visual workflows without pixel-level generation overhead

- Understand process flows from screenshots and diagrams

- Monitor operational dashboards with selective decoding efficiency

- Identify visual anomalies in real-time monitoring systems

2. Temporal Business Process Modeling

- Predict workflow outcomes before execution

- Understand cause-and-effect in business operations

- Anticipate bottlenecks through semantic prediction

- Learn process dynamics from observation

3. Zero-Shot Task Generalization

- Adapt to new business processes without extensive retraining

- Transfer knowledge across similar operational contexts

- Handle edge cases through semantic understanding

- Operate effectively in novel business environments

For more on how these capabilities transform business operations, read our comprehensive guide on VL-JEPA's real-world business applications.

Ruh.ai's Sarah: AI SDR with Predictive Intelligence

Our flagship AI SDR, Sarah, incorporates VL-JEPA-inspired architectures for:

Visual Sales Intelligence:

- Analyze prospect websites and identify visual buying signals

- Understand product-market fit from visual brand identity

- Process technical documentation and architectural diagrams

- Evaluate competitive positioning through visual analysis

Predictive Engagement:

- Anticipate prospect objections before they occur

- Model conversation trajectories and outcomes

- Adapt messaging based on predicted responses

- Optimize outreach timing through temporal prediction

Efficient Operation:

- Selective communication (only when meaningful engagement occurs)

- Semantic understanding of complex sales contexts

- Real-time replanning when prospect behavior changes

- Edge deployment for data privacy in regulated industries

According to Gartner research , AI SDRs with predictive visual intelligence achieve 35% higher conversion rates than text-only systems—validating Ruh.ai's architectural approach. Learn more about our AI SDR solutions and how they transform sales operations.

AI Employees Across Functions

Ruh.ai extends these capabilities to enterprise AI employees in:

Customer Support:

- Understand customer issues from visual context (screenshots, videos)

- Predict resolution paths before full diagnosis

- Selectively escalate only when human expertise required (using our AI agent escalation matrix)

- Maintain continuous awareness across thousands of tickets

Operations & Compliance:

- Monitor visual compliance across operational procedures

- Predict process failures before they occur

- Understand documentation through mixed media (text + diagrams)

- Generate alerts only for semantically significant events

Technical Operations:

- Visual monitoring of system dashboards and logs

- Predict infrastructure issues through temporal modeling

- Understand technical architecture from diagrams

- Plan remediation actions in semantic space

The architectural disruptions driving these capabilities are similar to those explored in our analysis of the OpenAI crisis and GPT-5.2's emergency launch, where efficiency and capability increasingly diverge from pure parameter scaling.

Learn more about Ruh.ai's approach to hybrid workforce implementation on our blog and how our AI employees augment rather than replace human capabilities.

Production Reality: From Research to Deployed Systems

The gap between laboratory success rates and production reliability determines economic viability. VL-JEPA's semantic robustness addresses critical deployment challenges:

Field Reliability Metrics

Robustness Under Real-World Conditions:

- Maintains stable understanding across lighting variations

- Graceful degradation when visual inputs degrade

- Recovery from unexpected perturbations

- Continuous operation during 8-hour shifts

Economic Impact:

- Sub-$0.10 per manipulation cost at industrial scale

- 50% reduction in "stuck robot" incidents

- 3× better economics enabling 3× more deployments

- Compounding data advantages for continuous improvement

Organizations implementing VL-JEPA for physical AI should prioritize:

- Controlled environment validation (warehouses, manufacturing)

- Safety certification for human-robot collaboration

- Cross-condition testing (lighting, occlusions, variations)

- Continuous improvement pipelines (model updates, failure analysis)

- Human oversight design (monitoring, intervention, feedback)

This production-first approach mirrors our recommendations for structured data AI implementations in enterprise environments.

The Competitive Advantage: Semantic Efficiency as Economic Moat

VL-JEPA doesn't just make robots more efficient—it makes them more intelligent. Predicting semantic embeddings instead of generating pixels enables:

- Temporal reasoning about physical dynamics

- Causal inference from observed state changes

- Zero-shot generalization to novel scenarios

- Error-resilient planning for multi-step tasks

- Edge deployment on mobile platforms

These capabilities compound into economic advantages:

- Lower training costs → faster iteration

- Smaller models → edge deployment (as detailed in our small language models guide)

- Zero-shot generalization → reduced per-task engineering

- Fewer interventions → improved unit economics

Early adopters deploying 3× more robots generate 3× more real-world data, enabling 3× faster model improvement and unlocking 3× more applications—a compounding competitive moat.

The same efficiency principles that enable VL-JEPA's robotics breakthroughs power Ruh.ai's voice AI latency optimization, where millisecond-level responsiveness creates natural conversational experiences.

The Path Forward: Semantic Intelligence at Scale

VL-JEPA represents the architectural foundation that makes general-purpose autonomous manipulation economically viable. The organizations that recognize this distinction first—whether deploying physical robots or AI employees—will define the next decade of intelligent automation. For robotics companies: Predictive architectures deliver superior physics understanding with half the parameters.

For manufacturing and logistics: 15× faster planning and 80% zero-shot success on novel tasks reshape automation economics.

For enterprise AI adoption: Ruh.ai brings these efficiency principles to business processes, enabling AI employees that understand visual context as naturally as text.

Get Started with Ruh.ai

Ready to leverage semantic efficiency for your organization? Contact Ruh.ai to explore how our AI employees with visual intelligence can transform your operations, or visit our blog for more insights on AI implementation strategies.

The critical question isn't whether to adopt semantic AI—it's how quickly you can integrate these capabilities while competitors process visual data manually. As we explored in our analysis of 2026's workforce transformation, the organizations moving first on architectural efficiency will capture disproportionate advantages.

Frequently Asked Questions

How does VL-JEPA achieve 15× faster planning than generative models?

Answer: VL-JEPA plans in 512-dimensional semantic space rather than multi-million-dimensional pixel space. Instead of simulating pixel-perfect future frames (computationally expensive), it predicts semantic embeddings of future states (computationally cheap), then decodes only the immediate next action. Research from Google AI confirms this approach reduces planning from 240 seconds to 16 seconds—a similar efficiency gain to what we discuss in our voice AI latency optimization article.

What is rollout loss and why does it matter for robots?

Answer: Rollout loss teaches models to account for their own prediction errors over sequential actions. During training, the model uses its predicted states (with inherent errors) as inputs for subsequent predictions, learning error-resilient representations. This enables 10+ step manipulation sequences without catastrophic error accumulation—critical for production robotics deployment.

Can VL-JEPA really achieve 80% success on completely new tasks?

Answer: Yes, through zero-shot generalization enabled by semantic understanding. The model doesn't memorize specific objects or scenarios—it learns abstract relationships like [graspable_geometry + appropriate_size] that transfer to novel contexts. Stanford AI Lab research confirms 80% pick-and-place success rates in environments absent from training data. This same generalization capability powers *Ruh.ai's AI employees* across diverse business contexts.

Why does 50% parameter reduction matter for robotics?

Answer: Robotics requires edge deployment on battery-powered mobile platforms. VL-JEPA's 1.6B parameters (vs. 7B-13B in traditional models) reduce GPU memory from 28GB to 6.4GB and power draw from 300W to 65W—the difference between tethered laboratory systems and autonomous mobile deployment. IEEE Spectrum identifies this as the critical threshold for warehouse-scale robotics. We explore this efficiency trend further in our small language models analysis.

How does Ruh.ai apply VL-JEPA principles to business AI?

Answer: Ruh.ai extends VL-JEPA's semantic efficiency to AI employees that understand visual business contexts—analyzing workflows from screenshots, predicting process outcomes, and operating with selective communication (only engaging when semantically significant). Our AI SDR Sarah uses these principles for visual sales intelligence and predictive engagement modeling. Learn more in our comprehensive VL-JEPA business applications guide.

What's the economic threshold VL-JEPA enables for robotic manipulation?

Answer: Industry analysts estimate VL-JEPA's efficiency gains (50% fewer parameters, 15× faster planning, 80% zero-shot success, 3.2× fewer interventions) move general-purpose manipulation toward sub-$0.10 per manipulation cost at industrial scale—the threshold for warehouse and manufacturing viability. McKinsey research identifies this as the inflection point for autonomous manipulation adoption, similar to the economic transitions we analyzed in the 2026 workforce shift.

What production challenges does VL-JEPA address that research demos ignore?

Answer: Production robotics requires graceful degradation under variable lighting, partial occlusions, sensor noise, and equipment wear. VL-JEPA's semantic embeddings maintain stable understanding even when visual inputs degrade—unlike pixel-based models that fail when conditions drift from training distributions. Field deployments report 94% autonomous operation vs. 70% with traditional vision systems. Harvard Business Review emphasizes reliability over peak performance for automation ROI.

What's the timeline from VL-JEPA research to production robotics deployment?

Answer: VL-JEPA's core capabilities are proven in research settings. Production deployment requires addressing safety certification, long-term reliability validation, and failure mode characterization—typically 12-24 months for controlled industrial environments. Organizations should start with pilot programs in warehouses or manufacturing cells while building toward broader deployment. Accenture's AI implementation framework recommends phased rollout approaches. Contact Ruh.ai to discuss pilot program strategies for your organization.

How does selective decoding reduce operational overhead in both robotics and business AI?

Answer: Selective decoding monitors inputs continuously in embedding space and only generates outputs when semantic changes occur. For robotics, this means processing hours of movement without verbal descriptions until significant events require communication. For Ruh.ai's business AI, this means monitoring thousands of customer interactions and only alerting humans when intervention is truly needed—reducing computational operations by 2.85× while maintaining accuracy, as demonstrated in Hugging Face community benchmarks.

Request a Demo or Ask Us Anything

Click below and let's connect — fast, simple, and no pressure