TL;DR / Summary

Shadow AI refers to employees using AI tools without IT approval, driven by the gap between rapid AI innovation and slow enterprise governance. It is growing quickly because these tools are accessible, useful, and often outperform sanctioned alternatives, leading to real productivity gains, faster development, and grassroots innovation across teams. However, it introduces serious risks—data leakage, compliance violations, biased or incorrect outputs, lack of auditability, and, increasingly, autonomous agentic actions that can operate without oversight. Traditional security approaches are not sufficient, and banning AI often backfires by pushing usage underground. The solution is not restriction but governance: organizations must combine clear policies, employee training, visibility tools, and secure, enterprise-grade AI alternatives to harness the benefits while controlling the risks.

Ready to see how it all works? Here’s a breakdown of the key elements:

- The AI Revolution Nobody Officially Approved

- What Is Shadow AI? Definition, Examples, and Where It Lives

- Shadow AI vs. Shadow IT: Key Differences Every Security Team Must Know

- Why Shadow AI Emerged: The 5 Root Causes Behind Unsanctioned AI Adoption

- Why Employees Keep Using Shadow AI: Productivity, AI Shame, and the Generational Gap

- 5 Ways Shadow AI Is Genuinely Helping the Tech Industry Move Faster

- Shadow AI Data Breaches and Real-World Incidents That Already Happened

- The 4 Risk Pillars of Shadow AI: Security, Compliance, Ethics, and Reputation

- Agentic Shadow AI: When Unauthorized AI Stops Talking and Starts Acting

- AI Hallucinations and Shadow AI: The Silent Reputational Risk No One Talks About

- Shadow AI Pros and Cons: A Balanced Enterprise Assessment

- 6 Shadow AI Myths Debunked — What Security Teams Keep Getting Wrong

- How to Govern Shadow AI: A 6-Step Enterprise Playbook

- The Shadow AI Governance Lifecycle: Discover, Assess, Govern, Secure, Audit

- How Ruh AI Turns the Shadow AI Problem Into a Governed Advantage

- Key Takeaways on Shadow AI

- Frequently Asked Questions About Shadow AI

The AI Revolution Nobody Officially Approved

There is a revolution happening inside corporate networks right now. It is not being led by CTOs or AI strategy committees. It is being driven by a marketing intern who discovered that a public chatbot could draft a month's worth of email campaigns in forty minutes, a developer who found that an AI coding assistant cut her debugging time in half, and a product manager who realized he could summarize a fifty-page strategy document in seconds.

None of these employees filed an IT request. None of them went through procurement. None of their tools were security-reviewed. And none of their organizations knew it was happening.

This is Shadow AI — and it is one of the most significant, fastest-growing, and least understood forces in enterprise technology today. Platforms like Ruh AI are emerging specifically to address this gap — providing sanctioned, enterprise-grade AI infrastructure that gives employees the productivity tools they need without the governance risks of going rogue.

The numbers tell a story that should concern every IT leader and security professional. AI adoption is outpacing governance at a 4:1 ratio. Approximately 38% of employees admit to sharing confidential work information with AI platforms without their employer's permission. One in five UK companies has already experienced data leakage directly attributable to employees using generative AI. According to IBM's 2025 Cost of a Data Breach Report, a high level of Shadow AI added USD 670,000 to the global average breach cost, and 97% of organizations that experienced AI-related breaches lacked proper access controls for the AI tools involved.

Yet the story of Shadow AI is not purely one of risk and failure. It is also a story of genuine innovation, competitive urgency, and the very human desire to work smarter. To understand Shadow AI fully — where it came from, what it has built, and what it has broken — you need to hold both sides of that story in view at the same time.

This post does exactly that.

What Is Shadow AI? Definition, Examples, and Where It Lives

Shadow AI is the use of artificial intelligence tools, models, or features within an organization without formal approval or oversight from the IT and security departments.

It is not a single tool. It is not a single type of employee. And it is not, in the vast majority of cases, a deliberate act of sabotage. Shadow AI is a systemic phenomenon — a structural gap between how fast AI technology is moving and how quickly organizations can govern it.

The term is frequently described as the "rebellious cousin" of Shadow IT, but this framing undersells how different Shadow AI actually is. Traditional Shadow IT — an employee using a personal Dropbox account to share files, for example — involves data moving through an unapproved channel. Shadow AI goes further: it involves data being processed by a system that makes probabilistic decisions, generates outputs, influences business choices, and may retain what it was told in ways the organization cannot audit, retrieve, or delete.

When an employee pastes a customer list into a public chatbot to draft outreach emails, that data does not simply pass through a pipe. It may be stored. It may be used to train future model versions. It may surface in responses to entirely different users — users who may be competitors. That is a qualitatively different kind of risk from a file stored in an unapproved cloud drive.

Common Sources of Shadow AI in Enterprise Environments

Shadow AI does not only live in the obvious places. It includes:

- Public consumer chatbots — tools like ChatGPT and Claude accessed through personal or work browsers without IT approval

- Browser extensions and plugins — AI writing assistants, summarizers, and grammar tools that silently process whatever text is on screen

- Unauthorized API integrations — developers building internal tools that interface with external AI providers without a security review

- Open-source models — tools like Llama that individuals download and run independently, entirely outside IT visibility

But it also includes something far more insidious: embedded AI features inside already-approved SaaS platforms. Microsoft Teams, Salesforce, and Tableau are documented examples of platforms that have integrated AI-powered automation and analytics through standard software updates — without notifying IT or requiring a new security review. An organization that carefully vetted a platform when they first adopted it may now be running AI on sensitive internal data without a single person in the security team being aware.

This is Shadow AI by accident. And it may be the hardest kind to detect.

Shadow AI vs. Shadow IT: Key Differences Every Security Team Must Know

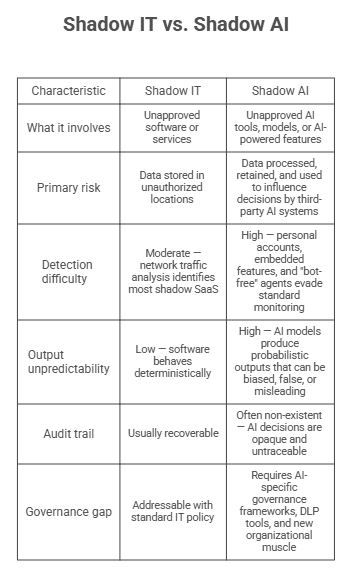

Shadow AI is a branch of Shadow IT, but it carries risks that go well beyond what traditional Shadow IT frameworks were designed to handle.

The critical distinction is in how AI models handle data and generate outputs. Unlike deterministic software that does what it is coded to do, AI models make statistical inferences. They can be wrong. They can be biased. And in the case of systems with persistent memory, they can share what they have been told with people who were never supposed to have access to it.

Why Shadow AI Emerged: The 5 Root Causes Behind Unsanctioned AI Adoption

Shadow AI did not emerge from a single moment. It emerged from five converging forces that made unsanctioned AI adoption practically inevitable.

1. Accessibility. Powerful AI tools became free, browser-based, and zero-installation — making them, in the documented phrasing, "too easy to use to ignore." The cost and infrastructure barriers that once gave IT a natural checkpoint disappeared entirely. According to a 2025 report by Proofpoint, more than 60% of users rely on personal, unmanaged AI tools rather than enterprise-approved ones.

2. Policy lag. Corporate approval processes are built for a world where technology evolves quarterly. AI tools evolve weekly. When formal sign-off takes four to six months and a deadline is tomorrow, employees don't wait — they use what works. Ruh AI's guide on how organizations should implement AI outlines exactly how to compress that approval cycle without sacrificing governance.

3. Silent SaaS rollouts. AI didn't only enter through employee initiative. Microsoft Teams, Salesforce, and Tableau integrated AI-powered features through standard software updates, often without IT notification. Organizations running security-reviewed platforms suddenly had AI processing sensitive internal data inside tools they already trusted.

4. The AI Utility Gap. Enterprise-sanctioned AI consistently fails to meet the day-to-day needs of marketing, HR, design, and operations teams. When the official tool doesn't fit, employees find one that does. A 2025 study cited by CIO Magazine found that despite half of employees having access to approved AI tools, only a third said those tools fully met their on-the-job needs.

5. Decentralized purchasing. Individual business units now control approximately 85% of SaaS spending. Departments buy AI-native tools on their own budgets, bypassing the centralized procurement review that would trigger a security assessment.

Why Employees Keep Using Shadow AI: Productivity, AI Shame, and the Generational Gap

Understanding why employees use Shadow AI — and why they keep using it even when they know it carries risk — requires looking at both practical and psychological drivers.

Speed Is the Primary Currency of Modern Work

The most straightforward driver is competitive pressure. Employees are measured on output. Deadlines are real. The AI tool that can automate hours of repetitive work in minutes is not an abstract convenience — it is the difference between hitting a target and missing it. When the choice is between a slow-approved tool and a fast-unapproved one, and the deadline is tomorrow, fast wins.

AI Shame: The Underground Adoption Nobody Discusses

One of the more counterintuitive drivers of Shadow AI is a psychological dynamic documented as "AI Shame." Some employees hide their AI use not because they believe they are doing something harmful, but because they fear judgment: being seen as lazy, being perceived as replacing their own skills, or being viewed as taking shortcuts. This shame drives AI usage underground — not just past IT governance, but past managers and colleagues — making it invisible on multiple levels simultaneously.

The irony is that the employees most likely to hide their AI use are often among the most productive and innovative. They are using AI because it works. But the fear of stigma transforms what could be a visible, governable practice into a hidden one.

The Generational AI Adoption Divide

Shadow AI adoption is not evenly distributed across the workforce. Research shows that nearly 4 in 10 workers under 30 use AI at work, compared to fewer than 2 in 10 workers over 50. Younger employees who have grown up with accessible AI tools treat them as natural extensions of their workflows. They reach for AI the way earlier generations reached for a search engine. The governance infrastructure that organizations have built was designed for a workforce with different habits.

The Workforce Readiness Gap in AI Literacy

A significant proportion of employees who use Shadow AI do not fully understand the risks they are taking. The workforce readiness gap — the lack of basic AI literacy across most organizations — means that an employee who pastes proprietary source code into a public chatbot for debugging may genuinely not know that the input is stored, potentially used for training, and could resurface in responses to other users. The intent is entirely benign. The exposure is entirely real.

5 Ways Shadow AI Is Genuinely Helping the Tech Industry Move Faster

The part of the Shadow AI story that gets lost in risk discussions: it is genuinely working. Here is how.

1. Productivity compression at the individual level. Developers debug faster, product managers summarize documents in minutes, writers produce first drafts in hours. Multiplied across a department, this shifts what a team of a given size can actually attempt — reducing headcount requirements and compressing timelines that once required trade-offs.

2. Accelerated software development. Developers using AI coding assistants — even unsanctioned ones — produce code faster, catch errors earlier, and spend less time on boilerplate. The gain is real; so is the exposure, since proprietary code submitted to unreviewed platforms may be retained.

3. Unofficial innovation engine. In organizations with slow official AI procurement, Shadow AI is often where experimentation happens at all. Employees have built internal chatbots, automated pipelines, and proof-of-concept tools that eventually made it into production. Shadow AI is not just a risk to manage — it is a signal of where genuine organizational need exists. As MIT Sloan Management Review's 2025 Agentic Enterprise report notes, organizations that thrive are those that harness employee AI curiosity rather than suppress it.

4. Democratization across non-technical teams. Marketing, HR, operations, and customer service teams are capturing AI productivity gains that a centralized IT rollout would never have prioritized for them. The capability is real; the governance infrastructure to make it safe has not kept pace. Ruh AI's work on AI employees in financial services and AI employees in healthcare illustrates how regulated, non-technical teams can harness AI capability with proper guardrails already built in.

5. A forcing function for governance. The documented reality that 38% of employees are already sharing confidential data with AI platforms has compressed governance timelines that might otherwise have stretched for years. Shadow AI made the urgency impossible to ignore. According to ISACA's 2025 industry analysis, organizations are increasingly developing AI governance roadmaps directly in response to Shadow AI pressure.

Shadow AI Data Breaches and Real-World Incidents That Already Happened

The risks are not theoretical. These incidents are documented.

The Electronics Company Source Code Leak (2023). Employees fed proprietary source code into ChatGPT for debugging. The tool stored user inputs by default, meaning the sensitive code could resurface in responses to entirely different users. Trade secrets were exposed. The incident triggered enterprise-wide AI policy reviews and outright bans across multiple major corporations. The lesson: tools built for consumers are not built for organizations with data governance obligations.

Corporate data leakage at scale. The problem is systemic, not isolated. One in five UK companies has experienced data leakage due to employees using generative AI. Approximately 38% of employees globally admit to sharing confidential work information with AI platforms without permission. IBM's research shows 13% of organizations have experienced AI-related breaches — and 97% of those lacked proper access controls. Separately, IBM's analysis of Shadow AI and hidden data risk highlights how IP theft directly tied to unsanctioned AI use has risen 26.5% year-over-year.

Department-level scenarios. Shadow AI looks different by function:

- Marketing — customer contact lists pasted into public chatbots, leaking PII to third-party servers. Ruh AI's breakdown of how AI eliminates marketing campaign bottlenecks shows how the same productivity gains can be captured inside a governed, enterprise-approved pipeline — without the data exposure.

- Product Management — internal strategy decks summarized via AI tools, leaving permanent prompt histories on servers the organization doesn't control

- Software Development — unauthorized APIs like OpenRouter used to build chatbots interfacing directly with customer data repositories

- HR — unvetted resume screening tools introducing hidden bias into hiring decisions with no audit trail to defend them

Reputation incidents. Sports Illustrated published articles attributed to AI-generated fictional authors. Uber Eats used AI-generated food imagery that misled customers. Both cases demonstrate that Shadow AI's blast radius extends well beyond the IT department — into editorial credibility, customer trust, and brand integrity.

The 4 Risk Pillars of Shadow AI: Security, Compliance, Ethics, and Reputation

Security & Data Risks from Unsanctioned AI

- Data leakage — PII, source code, and trade secrets transmitted to third-party AI servers the organization cannot audit or compel to delete

- Prompt injection attacks — adversaries tricking AI models into revealing sensitive data or executing unintended actions, a vulnerability class catalogued by the OWASP Top 10 for LLM Applications 2025

- Model memory exposure — AI systems retaining user inputs for training, meaning today's confidential submission may appear in tomorrow's response to a competitor

- Opaque behavior — no ability to audit why an AI produced a given output, creating a permanent governance blind spot

Compliance & Legal Risks

- Regulatory violations: Shadow AI bypasses the data governance controls that GDPR, HIPAA, and CCPA compliance depends on. Under GDPR Article 83, fines for the most serious infringements can reach EUR 20 million or 4% of total worldwide annual turnover, whichever is higher.

- Customer trust — regulated industries face compounding exposure when sensitive data is processed without proper agreements in place
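
The 4% figure is one half of a two-part ceiling. For the most serious infringements, GDPR Article 83(5) caps the administrative fine at EUR 20 million or, for an undertaking, 4% of total worldwide annual turnover in the preceding financial year, whichever is higher. A minimal sketch of that arithmetic follows; the function name and the turnover figures are hypothetical and chosen only for illustration.

```python
def gdpr_max_fine_eur(annual_turnover_eur: float) -> float:
    """Upper bound of an Article 83(5) fine: the higher of EUR 20M or 4% of turnover."""
    flat_cap = 20_000_000
    turnover_cap = annual_turnover_eur * 4 / 100
    return max(flat_cap, turnover_cap)


# Illustrative turnovers, not real companies.
small = gdpr_max_fine_eur(50_000_000)      # 4% is EUR 2M, so the flat cap applies
large = gdpr_max_fine_eur(2_000_000_000)   # 4% is EUR 80M, which exceeds the flat cap
print(small, large)
```

This is a ceiling, not a prediction: regulators set the actual amount case by case, and it can be far lower.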

Operational & Ethical Risks

- Biased outputs — unvetted resume-screening or decision-support tools can produce discriminatory results with no audit trail to defend them

- Hallucinations — AI-generated misinformation treated as fact, corrupting financial reports, strategic decisions, and customer communications

- Excessive agency: agentic AI tools with broad permissions acting autonomously (filing tickets, moving data, spending budget) with no human in the loop

Financial & Reputational Risks

- Ungoverned costs — consumption-based AI pricing with no central visibility produces fragmented, duplicated spend

- Public-facing failures — when AI-generated errors reach customers or media (as with Sports Illustrated and Uber Eats), reputational damage outlasts any regulatory fine. IBM's 2025 breach data confirms Shadow AI breaches lead to broad operational disruption — from halted sales orders to supply chain failures.

Agentic Shadow AI: When Unauthorized AI Stops Talking and Starts Acting

Everything discussed so far about Shadow AI assumes that the AI tools involved generate outputs — text, code, images, recommendations — that a human then acts upon. A new and far more dangerous category of Shadow AI is now emerging that eliminates the human from that equation entirely.

Agentic AI — AI systems that do not just generate outputs but take actions — represents a qualitative escalation in Shadow AI risk. Unlike a chatbot that writes a draft email for a human to send, an agentic AI tool books the meeting, sends the email, files the ticket, updates the database record, and triggers the next workflow step autonomously. The OWASP GenAI Security Project's Top 10 for Agentic Applications identifies excessive agency and over-privileged agent access as among the most critical emerging threats. Governed agentic platforms like Ruh AI's SDR Sarah demonstrate what this looks like when built right — with defined scopes, audit trails, and access controls embedded by design rather than bolted on after the fact.

Velocity Without Oversight: How Agentic AI Outruns Security Controls

Agentic AI can, in the documented phrasing, "outrun your oversight in seconds." A shadow agent operating with broad access permissions can move data, spend budget, modify records, and trigger external API calls before any human in the organization is aware it is operating. The speed advantage that makes agentic AI so attractive is the same property that makes it so dangerous in an ungoverned context.

Excessive Agency: The House Keys Problem

To perform their tasks, shadow agents are often granted broad permissions. The documented analogy is precise: it is like giving a friend the keys to your entire house just to water one plant. If the agent encounters a bug, misinterprets an instruction, or is hijacked by a bad actor, those broad permissions become the scope of the potential damage.

The principle of least privilege — granting any system only the access it needs for its specific task — is foundational to security. The NIST AI Risk Management Framework and its Generative AI Profile specifically emphasize access controls and governance as pillars of trustworthy AI deployment. Shadow agents, by definition, have never been through the review process that would apply these principles.
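
One way to make the house-keys problem concrete is to compare the permissions an agent asks for with the permissions its task needs. The sketch below is illustrative only: the task names, scope strings, and the `excess_scopes` and `approve` helpers are hypothetical and are not taken from any real OAuth provider or from the frameworks cited above.

```python
# Hypothetical mapping from a task to the minimum scopes it needs.
REQUIRED_SCOPES = {
    "schedule_meeting": {"calendar.write"},
    "summarize_inbox": {"mail.read"},
}


def excess_scopes(task: str, requested: set[str]) -> set[str]:
    """Return the scopes an agent requested beyond what its task needs."""
    needed = REQUIRED_SCOPES.get(task)
    if needed is None:
        # Unknown task: treat every requested scope as excess (deny by default).
        return set(requested)
    return set(requested) - needed


def approve(task: str, requested: set[str]) -> bool:
    """Approve only a known task that requests nothing beyond its needs."""
    return task in REQUIRED_SCOPES and not excess_scopes(task, requested)


# An agent that only needs to book a meeting but asks for mail and file access too.
requested = {"calendar.write", "mail.read", "files.read_all"}
extra = excess_scopes("schedule_meeting", requested)
print(sorted(extra))
print(approve("schedule_meeting", requested))
```

The deny-by-default branch matters most for shadow agents: a task nobody registered has no reviewed scope list, so nothing it requests can be justified.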

The Traceability Gap in Agentic Shadow AI

Over two-thirds of organizations cannot clearly distinguish between an AI agent's actions and human actions in their logs. This is not primarily a technical failure — it is a governance failure. Shadow agents operate through untracked identities and over-permissioned OAuth scopes that were never designed with auditability in mind. When a shadow agent causes a problem, the investigation begins from zero: who set this up, what data did it touch, and how large is the impact? Ruh AI's deep-dive on AI agents that refuse commands and fatal design flaws explores exactly why poorly scoped agents — shadow or otherwise — become governance liabilities rather than productivity assets.
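
Closing the traceability gap starts with recording who or what performed each action. The following is a minimal sketch, assuming a JSON-lines audit log with hypothetical field names (`actor_type`, `actor_id`, `on_behalf_of`); real logging schemas and identity systems will differ.

```python
import json
from datetime import datetime, timezone


def audit_record(actor_type, actor_id, action, resource, on_behalf_of=None):
    """Build one audit entry that states whether a human or an agent acted."""
    if actor_type not in {"human", "agent"}:
        raise ValueError(f"unknown actor_type: {actor_type!r}")
    if actor_type == "agent" and on_behalf_of is None:
        # An agent with no accountable owner is the untraceable case.
        raise ValueError("agent actions must name a human owner in on_behalf_of")
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor_type": actor_type,
        "actor_id": actor_id,
        "on_behalf_of": on_behalf_of,
        "action": action,
        "resource": resource,
    }


def agent_actions(log_lines):
    """Filter JSON-lines log text down to the entries performed by agents."""
    entries = (json.loads(line) for line in log_lines if line.strip())
    return [entry for entry in entries if entry["actor_type"] == "agent"]


# Hypothetical identities and resources, for illustration only.
log = [
    json.dumps(audit_record("human", "alice", "update", "crm/record/17")),
    json.dumps(audit_record("agent", "sdr-bot-1", "send_email", "mail/outbox",
                            on_behalf_of="alice")),
]
found = agent_actions(log)
print([(entry["actor_id"], entry["on_behalf_of"]) for entry in found])
```

With a field like `on_behalf_of` in every agent entry, the first investigative question (who set this up) has an answer in the log itself.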

Invisible AI Agents Running in Plain Sight

Some shadow agents are specifically designed to be undetectable. Certain meeting assistant tools can record, transcribe, and screenshot sessions while running silently in the background, without ever appearing in the meeting's participant list. These tools are far harder to detect than standard unauthorized software because they leave no visible signature of their presence.

AI Hallucinations and Shadow AI: The Silent Reputational Risk No One Talks About

AI hallucinations — instances where AI models generate confident, plausible, but factually incorrect outputs — represent a category of Shadow AI risk that operates entirely at the level of content rather than data security. They are the risk that takes Shadow AI from an IT problem to a business problem.

Because AI models are probabilistic systems making "educated guesses" based on statistical patterns, their outputs can be wrong in ways that are not flagged, are not obvious, and are not easily detectable without domain expertise. When these outputs are generated by unvetted, unsanctioned tools and submitted without verification into corporate workflows, the error propagates.

Documented business consequences include corrupted financial reporting; strategic decisions made on fabricated AI-generated analysis; customer service communications that are inconsistent or factually wrong; and legal and hiring decisions based on AI recommendations that cannot be explained, audited, or defended. The OWASP Top 10 for LLM Applications classifies misinformation (LLM09) as a core LLM vulnerability, one that is amplified when tools are used without oversight or verification requirements.

The reputational dimension is equally documented. Sports Illustrated's AI-authored articles and Uber Eats' misleading AI-generated food imagery are examples of what happens when AI-generated content reaches public audiences without adequate oversight. The consumer backlash in both cases was significant and long-lasting.

The specific aggravating factor in Shadow AI contexts is the workforce readiness gap. Employees who use unsanctioned AI tools often do so without a solid understanding of how those tools work, including the concept of hallucination. Without AI literacy training — knowledge of what AI can and cannot do, and why its outputs must be verified — employees become unwitting conduits for AI-generated misinformation into organizational systems.

Shadow AI Pros and Cons: A Balanced Enterprise Assessment

A complete picture of Shadow AI requires an honest account of both what it delivers and what it costs. The following assessment is drawn directly from documented evidence.

The Pros of Shadow AI

Real, measurable productivity gains. Shadow AI is primarily driven by employees who have found that these tools genuinely work. The automation of repetitive tasks, the compression of research and drafting cycles, the acceleration of code development — these are real benefits that organizations with slow governance processes would not otherwise have access to at the same speed.

Ground-up innovation and experimentation. In organizations where official AI procurement is slow, Shadow AI has been the de facto innovation engine. Employees experimenting with unsanctioned tools have built internal chatbots, automated workflows, and proof-of-concept applications that have sometimes made it into production. Shadow AI has served as a live discovery process for where genuine organizational AI need exists.

Democratization of AI capability. Shadow AI has extended AI access to non-technical employees — in marketing, HR, operations, design — who would never have been prioritized in a centralized AI rollout. The productivity gains from this democratization are distributed across the entire organization rather than concentrated in technical teams.

Competitive pressure that accelerates governance. By making the reality of unsanctioned AI adoption impossible to ignore, Shadow AI has forced organizations to confront the AI governance question on a real timeline rather than a theoretical one.

Identification of the AI Utility Gap. Shadow AI reveals, in concrete terms, where official enterprise AI is failing. When an entire department has adopted an unsanctioned tool to fill a gap that sanctioned software should have filled, that is data the organization needs.

The Cons of Shadow AI

Uncontrolled data leakage. The most direct and consequential downside: sensitive organizational data — customer records, source code, trade secrets, internal strategy documents — transmitted to external AI platforms that the organization does not control, cannot audit, and cannot compel to delete. Sales teams are a particularly high-risk group here; Ruh AI's analysis of cold email and AI-powered outreach in 2026 outlines how teams can pursue aggressive AI-driven outreach without feeding sensitive prospect data into unsanctioned channels.

Regulatory and legal exposure. GDPR, HIPAA, CCPA, and a growing body of AI-specific regulations impose strict requirements on how personal and sensitive data can be processed. Shadow AI systematically bypasses the control mechanisms designed to ensure compliance, creating exposure to fines that can reach 4% of global annual revenue under GDPR Article 83 alone.

Biased and unaccountable decision-making. Unvetted AI tools can introduce biases in hiring, customer segmentation, and risk assessment that the organization cannot detect, explain, or defend. When challenged legally, the absence of an audit trail is not a technicality — it is an insurmountable obstacle to accountability.

Hallucinations corrupting organizational data and decisions. AI-generated errors that propagate into financial reports, strategic plans, or customer communications can cause compounding harm. The workforce readiness gap means these errors are often not caught before they affect real decisions.

Expanded attack surface. Every unsanctioned AI tool, every unauthorized API integration, every shadow agent with broad OAuth permissions is a new entry point for cyberattacks. Shadow AI creates an attack surface the security team cannot map because it has no visibility into what exists.

Agentic AI acting without oversight. In the emerging frontier of agentic Shadow AI, the consequences of ungoverned deployment move from data leakage to autonomous actions that may be irreversible — data deleted, messages sent, records modified, money spent — before any human knows they happened.

No audit trail. When Shadow AI causes a problem, the organization typically has no record of what the tool did, what data it processed, or who authorized it. This makes recovery harder, regulatory defense impossible, and organizational learning minimal. The IBM 2025 Cost of a Data Breach Report confirms that organizations without AI governance policies face significantly higher breach costs.

6 Shadow AI Myths Debunked — What Security Teams Keep Getting Wrong

The following myths consistently lead organizations to underestimate or mismanage Shadow AI. Correcting them is a prerequisite for effective governance.

Myth 1: Shadow AI only refers to unauthorized tools. Reality: Shadow AI includes any AI use that lacks IT oversight, including AI features silently rolled out inside already-approved SaaS platforms. An employee using an AI capability that appeared in a standard Microsoft Teams update has not made an unauthorized choice, but the organizational risk is identical.

Myth 2: Banning AI tools will stop Shadow AI. Reality: Blanket bans are widely characterized as "security theater." They push Shadow AI underground, onto personal devices and home networks, where the organization has zero visibility. As CIO Magazine's Shadow AI analysis notes, the most effective CIOs frame AI governance as responsible empowerment, not restriction.

Myth 3: Shadow AI is always driven by malicious intent. Reality: The vast majority of Shadow AI use begins with well-meaning employees trying to solve real problems and improve their output. The intent is productivity and innovation. The risk arises from bypassing formal review processes, not from any desire to cause harm.

Myth 4: Shadow AI is easy to detect. Reality: Personal accounts, browser plugins, embedded SaaS features, and "bot-free" meeting agents are specifically designed to require no infrastructure changes and leave no visible signature. Many of the highest-risk Shadow AI deployments are structurally invisible to standard monitoring tools.

Myth 5: Shadow AI only matters in technical roles. Reality: Marketing, HR, design, and operations departments are consistently among the highest-risk areas for Shadow AI. These teams handle sensitive customer and employee data, often have less security training than engineering teams, and have some of the largest AI Utility Gaps.

Myth 6: Traditional security controls are sufficient to manage AI. Reality: Shadow AI introduces risks that standard IT security frameworks were not designed to address: persistent model memory, probabilistic and potentially hallucinating outputs, autonomous agentic action, and training data exposure. The NIST AI RMF Generative AI Profile specifically identifies these as requiring new governance capabilities beyond conventional IT controls.

How to Govern Shadow AI: A 6-Step Enterprise Playbook

The most effective responses to Shadow AI share one orientation: the goal is not to eliminate AI use — it is to govern it. Restriction without enablement simply drives behavior underground.

1. Build an AI Acceptable Use Policy. Classify every tool into Approved, Limited-Use, or Prohibited. Specify which data types, such as customer records and source code, are off-limits for unvetted tools. Make it role-based: a design team's permissions differ from an engineering team's. Employees should never have to guess whether a tool is acceptable. According to S&P Global research cited by Proofpoint, just over one-third of organizations currently have a dedicated AI policy, meaning most have no formal rules to enforce.

2. Shift from "No" to "How." Security teams that respond to Shadow AI with prohibition are relocating the problem, not solving it. The documented framing is precise: "Say How, Not No." Offer fast-track approval processes, designate AI champions per department, and create a culture where employees bring new tools to IT rather than around it.

3. Establish an internal AI AppStore. A curated catalog of vetted, enterprise-sanctioned tools eliminates the primary reason employees reach for unsanctioned alternatives. Maintain it actively and promote it as a resource, not a compliance gate. Platforms like Ruh AI function exactly as this kind of sanctioned alternative — offering a governed workspace where AI agents and workflows can be deployed, monitored, and audited without IT needing to evaluate dozens of individual consumer tools.

4. Deploy enterprise-grade alternatives. ChatGPT Enterprise and Amazon Q offer contractual guarantees that data will not be used for model training. For the most sensitive workloads, pairing Retrieval-Augmented Generation (RAG) with a self-hosted model lets teams ground answers in proprietary data while keeping that data on-premises.

5. Invest in AI literacy training. Technical controls cannot close the workforce readiness gap. Employees need to understand how model memory works, what hallucinations are, and why submitting sensitive data to a public chatbot creates lasting exposure. Training must reach everyone — interns to C-suite — and run continuously. The NIST AI RMF Playbook includes specific guidance on building AI literacy and workforce readiness as core governance capabilities.

6. Implement technical controls. Policy without enforcement has limited effect. The core stack: AI-specific data loss prevention (DLP) to filter sensitive data before it leaves the perimeter; a cloud access security broker (CASB) and secure web gateway (SWG) for network-level visibility into AI service access; AI security posture management (AI-SPM) to surface unapproved deployments; data security posture management (DSPM) to map sensitive data exposure; and identity guardrails enforcing least-privilege on all AI integrations. For a technical deep-dive into how AI infrastructure intelligence connects with operational security, Ruh AI's piece on AI in MLOps and the intelligence revolution offers a useful framework for teams standing up governed AI pipelines.
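To make step 1 concrete, here is a minimal sketch of how an Acceptable Use Policy could be expressed as data and checked in code. Everything in it is an assumption for illustration: the tool names, the role grants, and the `check_use` helper are hypothetical and do not come from any specific product or standard. The sketch denies unknown tools by default, which is one reasonable design choice rather than a requirement.

```python
from dataclasses import dataclass
from enum import Enum


class ToolStatus(Enum):
    APPROVED = "approved"
    LIMITED_USE = "limited-use"
    PROHIBITED = "prohibited"


# Hypothetical catalog: tool names and statuses are illustrative only.
TOOL_CATALOG = {
    "enterprise-assistant": ToolStatus.APPROVED,
    "public-chatbot": ToolStatus.LIMITED_USE,
    "unvetted-browser-plugin": ToolStatus.PROHIBITED,
}

# Data types that must never reach a tool that is not fully approved.
RESTRICTED_DATA = {"customer_records", "source_code"}

# Role-based grants: which restricted data types a role may use with
# APPROVED tools. Roles and grants are placeholders.
ROLE_GRANTS = {
    "engineering": {"source_code"},
    "design": set(),
}


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def check_use(tool: str, role: str, data_types: set[str]) -> Decision:
    """Decide whether a role may send the given data types to a tool."""
    # Tools missing from the catalog are treated as prohibited.
    status = TOOL_CATALOG.get(tool, ToolStatus.PROHIBITED)
    if status is ToolStatus.PROHIBITED:
        return Decision(False, f"{tool} is prohibited or not in the catalog")

    restricted = data_types & RESTRICTED_DATA
    if not restricted:
        return Decision(True, "no restricted data involved")

    if status is ToolStatus.LIMITED_USE:
        return Decision(False, "restricted data is off-limits for limited-use tools")

    missing = restricted - ROLE_GRANTS.get(role, set())
    if missing:
        return Decision(False, f"role {role!r} lacks a grant for: {sorted(missing)}")
    return Decision(True, "approved tool and role grant cover the data")
```

Calling `check_use("public-chatbot", "engineering", {"source_code"})` returns a denial with a reason string, so an employee gets an explicit answer instead of having to guess.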

The Shadow AI Governance Lifecycle: Discover, Assess, Govern, Secure, Audit

An AI Security Posture Management (AI-SPM) approach structures Shadow AI governance as a five-phase continuous loop, not a one-time project.

DISCOVER → ASSESS → GOVERN → SECURE → AUDIT → (repeat)

Discover. Achieve full visibility into every AI asset in your environment: models, platforms, agents, embedded SaaS features, and API integrations. Scan cloud environments, map RAG data sources, and audit existing SaaS tools for silent AI rollouts. You cannot govern what you cannot see. The NIST AI RMF's MAP function exists for exactly this reason: you must understand your AI landscape before you can manage it. Understanding how AI interacts with your customer data flows is equally critical; Ruh AI's guide to customer journey mapping with AI offers a practical lens for mapping where AI touches customer data throughout an organization.
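One small piece of discovery can be sketched in a few lines: scanning web proxy logs for traffic to AI services that are not on the sanctioned list. This is an illustration under stated assumptions, not a complete discovery tool. The CSV layout (`user,host` columns), the domain names, and the `find_unsanctioned_ai_use` helper are all hypothetical.

```python
import csv
import io
from collections import defaultdict

# Illustrative placeholders, not a real inventory of AI services.
KNOWN_AI_DOMAINS = {"chat.example-ai.com", "api.example-llm.net"}
SANCTIONED_DOMAINS = {"api.example-llm.net"}


def find_unsanctioned_ai_use(log_file) -> dict[str, set[str]]:
    """Map each unsanctioned AI domain to the set of users who reached it."""
    hits = defaultdict(set)
    for row in csv.DictReader(log_file):
        host = row["host"].strip().lower()  # normalize case before matching
        if host in KNOWN_AI_DOMAINS and host not in SANCTIONED_DOMAINS:
            hits[host].add(row["user"])
    return dict(hits)


# Assumed log format: one request per row with "user" and "host" columns.
sample_log = io.StringIO(
    "user,host\n"
    "alice,chat.example-ai.com\n"
    "bob,api.example-llm.net\n"
    "carol,Chat.Example-AI.com\n"
    "dave,intranet.example.com\n"
)
report = find_unsanctioned_ai_use(sample_log)
```

A scan like this only sees traffic that crosses the monitored proxy. Personal devices and AI features embedded in already-approved SaaS tools will not appear, which is why it should be treated as one input to discovery rather than the whole picture.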

Assess. Evaluate the security posture of each discovered asset. Apply least-privilege principles — users should access only the specific AI application they need, not the underlying model or training data. Identify misconfigured APIs and audit agents for excessive permissions. Find the open doors before attackers do.
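Auditing agents for excessive permissions comes down to comparing what each agent has been granted against what it actually needs. The sketch below assumes you already have both inventories; the agent names, scope strings, and the `excess_scopes` helper are hypothetical examples, not any vendor's permission model.

```python
# Hypothetical inventory: agent names and scope strings are illustrative.
REQUIRED_SCOPES = {
    "meeting-notes-agent": {"calendar:read"},
    "ticket-triage-agent": {"tickets:read", "tickets:write"},
}

GRANTED_SCOPES = {
    "meeting-notes-agent": {"calendar:read", "mail:read", "drive:write"},
    "ticket-triage-agent": {"tickets:read", "tickets:write"},
}


def excess_scopes(granted, required):
    """Return, per agent, the scopes granted beyond what the agent needs."""
    findings = {}
    for agent, scopes in granted.items():
        # An agent with no documented requirements is treated as needing nothing,
        # so every scope it holds is reported.
        extra = scopes - required.get(agent, set())
        if extra:
            findings[agent] = sorted(extra)
    return findings


findings = excess_scopes(GRANTED_SCOPES, REQUIRED_SCOPES)
```

Here the meeting-notes agent is flagged for `drive:write` and `mail:read`, while the ticket-triage agent passes because its grants match its documented needs.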

Govern. Translate findings into structure. Classify tools, assign ownership, build role-based permissions, populate the AI AppStore, and create governance documentation that both security and compliance teams can act on.

Secure. Deploy the technical control stack: DLP, network monitoring, identity guardrails, AI-specific threat protections. For sensitive workloads, deploy local LLMs to keep data processing on-premises, or use enterprise-grade services with contractual data protections. The OWASP Top 10 for LLM Applications 2025 provides a directly actionable threat framework for this phase.
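As a rough illustration of what AI-specific DLP does, the sketch below redacts two kinds of sensitive values from a prompt before it would be sent to an external service. It is deliberately simplistic: the two regular expressions (email addresses and US-style Social Security numbers) and the `redact` helper are assumptions chosen for the example, and production DLP tools rely on far broader detection than pattern matching.

```python
import re

# Two example detectors only; real DLP coverage is much wider.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(prompt: str) -> tuple[str, list[str]]:
    """Replace matches with placeholders and report which detectors fired."""
    findings = []
    for label, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[{label} REDACTED]", prompt)
        if count:
            findings.append(label)
    return prompt, findings


clean, found = redact(
    "Contact jane.doe@example.com, SSN 123-45-6789, about the renewal."
)
```

The list of detectors that fired is returned alongside the cleaned prompt so it can feed the audit trail described in the next phase.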

Audit. Record what data was accessed, which policy rules fired, which model versions were used, and who authorized each deployment. Tie all findings to a unified risk register. This trail is also the foundation for demonstrating regulatory compliance when authorities ask. One often-overlooked audit dimension is how AI agents improve over time — Ruh AI's guide on self-improving AI agents and RLHF explains why tracking model version changes matters as much as logging actions.
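The audit fields named above map naturally onto a structured log record. The following is a minimal sketch, assuming a JSON-lines log; the `AuditRecord` class, its field names, and the sample values are hypothetical and should be adapted to whatever your risk register expects.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    actor: str                     # who or what initiated the AI interaction
    tool: str                      # which AI tool or agent was used
    model_version: str             # model version in effect at the time
    data_accessed: list[str]       # data sources or categories touched
    policy_rules_fired: list[str]  # policy rules that triggered
    authorized_by: str             # who approved the deployment
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: AuditRecord) -> str:
    """Serialize a record as one JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


# Sample values are placeholders for illustration.
line = to_log_line(
    AuditRecord(
        actor="alice",
        tool="enterprise-assistant",
        model_version="2025-01-example",
        data_accessed=["crm:accounts"],
        policy_rules_fired=["dlp:email-redacted"],
        authorized_by="security-review-board",
    )
)
```

Recording the model version on every entry is what makes it possible to tell later whether a change in agent behavior followed a model update or a change in how the tool was used.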

After the audit phase, the cycle restarts. The Shadow AI environment evolves with every software update, every new hire, and every new AI capability. Governance that does not run continuously falls behind continuously.

How Ruh AI Turns the Shadow AI Problem Into a Governed Advantage

Every root cause of Shadow AI points to the same underlying problem: employees need capable AI tools, and organizations have not provided them fast enough or safely enough. The answer to Shadow AI is not less AI. It is better AI — enterprise-grade, governed, auditable, and built to do actual work rather than just generate text.

This is the problem Ruh AI is built to solve.

Sanctioned AI That Employees Actually Want to Use

The reason employees reach for consumer tools is straightforward: consumer tools work. They are fast, accessible, and genuinely useful. The reason Shadow AI proliferates is that the enterprise alternative has historically been neither fast nor useful enough.

Ruh AI addresses this at the core. It is an enterprise AI platform that deploys AI employees — purpose-built agents with defined roles, scoped permissions, and integrated workflows — across functions like sales, marketing, operations, and customer engagement. Employees get the productivity gains they were chasing with Shadow AI. The organization gets the audit trails, access controls, and governance it needs to stay compliant.

The key shift: Ruh AI replaces the motivation to go shadow. When a governed, enterprise-approved AI can do the job better than the unsanctioned tool an employee found on their own, there is no reason to go underground.

Enterprise Guardrails Built Into Every Agent

Where Shadow AI tools operate with broad, undefined permissions and opaque data handling, Ruh AI is designed from the ground up around the principles that governance frameworks require:

- Defined access scopes — every AI agent on the Ruh platform operates with controlled, least-privilege access to specific tools and data sources, not an open door to the entire organizational environment

- Full audit trails — every action, every workflow step, every data interaction is logged and traceable, giving security and compliance teams the evidence trail that Shadow AI deployments systematically lack

- Enterprise-grade security — token-based authentication, role-based permissions, and controlled access across all agents and workflows

- Operational transparency — real-time monitoring and system health visibility so IT can see exactly what AI is doing, to what data, and when

This is the structural opposite of how Shadow AI operates. For a detailed look at how Ruh AI's platform approaches the full implementation lifecycle, see their complete guide to organizational AI implementation.

Closing the AI Utility Gap — The Real Source of Shadow AI

One of the most documented root causes of Shadow AI is the AI Utility Gap: the chronic failure of enterprise AI to meet the day-to-day needs of non-technical departments. Marketing, sales, HR, and operations teams turn to unsanctioned tools because the sanctioned ones simply do not work for their use cases.

Ruh AI closes this gap with purpose-built AI employees across the functions where Shadow AI risk is highest:

Sales and outreach: Sarah, Ruh AI's AI SDR, handles the complete sales development workflow — prospect research, personalized outreach, follow-up sequencing, and meeting booking — entirely within a governed, auditable environment. Sales teams that would otherwise use unvetted AI to draft cold emails or scrape prospect data now have an enterprise-approved alternative that is measurably more effective than consumer tools, without the data governance exposure.

Marketing operations: Rather than pasting campaign briefs and customer data into public chatbots, marketing teams can run AI-assisted campaign workflows inside Ruh AI's governed infrastructure — the same productivity gains, none of the Shadow AI risk. Ruh AI's analysis of how AI eliminates marketing campaign bottlenecks walks through exactly how this looks in practice.

Regulated industries: For teams operating under HIPAA, GDPR, or financial services compliance requirements, unsanctioned AI is not just a governance problem; it is a regulatory one. Ruh AI's deep-dives into AI employees in healthcare and AI employees in financial services outline how AI can be deployed in these high-stakes environments without the compliance exposure that Shadow AI creates.

Addressing the Agentic AI Risk Before It Becomes a Problem

As covered earlier in this blog, agentic Shadow AI — autonomous agents acting with broad permissions outside IT visibility — represents the next escalation in Shadow AI risk. The design flaws that make agents dangerous are not unique to rogue deployments: they emerge whenever agents are given capabilities beyond what their governance framework can track.

Ruh AI's approach to agentic deployment is built around exactly the principles that prevent these failure modes: defined capability scopes, human-in-the-loop checkpoints for consequential actions, and full execution logging so every agent action can be reconstructed. Teams exploring how AI agents learn and improve over time can also read Ruh AI's guide on self-improving AI agents and RLHF for context on why model governance extends beyond initial deployment.

The Practical Path from Shadow AI to Governed AI

For organizations ready to move from reactive Shadow AI management to proactive enterprise AI deployment, the starting point is understanding what governed AI infrastructure actually looks like in practice. Explore the Ruh AI platform for a full view of how AI employees, workflow automation, and enterprise search can be deployed across your organization — or get in touch with the team directly to discuss your organization's specific Shadow AI exposure and governance requirements.

For further reading on the broader AI landscape, the Ruh AI blog covers topics ranging from MLOps and agent architecture to customer journey mapping and AI adoption across industries — all directly relevant to organizations building the governance infrastructure that turns Shadow AI from a liability into a controlled, competitive advantage.

Key Takeaways on Shadow AI

Shadow AI is not a passing phase or a fringe concern. It is a structural reality of how AI technology has entered the enterprise — faster than governance could follow, distributed across every department and function, driven by genuine productivity need and human ingenuity, and carrying risks that the existing security and compliance infrastructure was not designed to manage.

The organizations that will navigate it best are not the ones that ban the most aggressively. They are the ones that govern the most thoughtfully — who understand that the employees turning to Shadow AI are, in most cases, trying to do their jobs better, and who respond to that reality with frameworks and alternatives rather than restrictions alone.

The central lessons from the documented evidence are these:

The risk is real and already operational. With 38% of employees sharing confidential data with AI platforms, 97% of organizations lacking proper access controls, and documented incidents ranging from source code leaks to public reputational crises, Shadow AI is not a future problem. It is a present one.

Restriction alone does not work. Blanket bans drive Shadow AI underground, onto personal devices and home networks, where organizational visibility goes to zero.

Governance is an enablement problem as much as a security problem. The employees using Shadow AI are not the adversaries. They are the workforce. The governance frameworks that work give employees better options, faster approvals, and the AI literacy to use them responsibly.

Agentic AI raises the stakes dramatically. As AI systems shift from generating outputs to taking autonomous actions, the consequences of ungoverned deployment shift from data leakage to irreversible operational events. The governance infrastructure being built today needs to anticipate this next wave.

The lifecycle is continuous. Shadow AI governance is not a project with an end date. It is an operational discipline that requires continuous discovery, assessment, governance, security, and auditing — because the environment it is managing never stops changing.

Frequently Asked Questions About Shadow AI

What is Shadow AI in simple terms?

Ans: Shadow AI is when employees use AI tools — chatbots, coding assistants, AI-powered features in software they already use — without getting approval from their organization's IT or security team. It happens because AI tools are easy to access, genuinely useful, and faster to adopt than official procurement processes allow.

Is Shadow AI illegal?

Ans: Shadow AI itself is not inherently illegal, but the consequences of Shadow AI can create legal violations. Using AI tools to process EU customer data without appropriate agreements in place can violate GDPR. Using AI in hiring without proper oversight can violate employment discrimination law. The legal risk depends on what data is processed, by which tools, and in what regulatory context.

Why is Shadow AI growing so fast?

Ans: AI adoption is outpacing governance at a 4:1 ratio. The tools are free, require no installation, and deliver real productivity gains. Corporate approval processes take months. Individual business units control approximately 85% of SaaS spending, bypassing centralized IT procurement. And many major SaaS platforms have added AI features through standard updates without notifying IT teams.

How can organizations detect Shadow AI?

Ans: Detection requires a combination of network traffic monitoring (CASB, SWG), AI-specific DLP tools, AI Security Posture Management platforms, regular audits of approved SaaS tools for silent AI feature additions, access log review, and employee interviews. No single tool provides complete visibility.

What is the difference between agentic AI and regular Shadow AI?

Ans: Regular Shadow AI tools generate outputs — text, code, images — that a human then acts upon. Agentic AI takes autonomous actions: booking meetings, filing tickets, updating records, spending budget. In a Shadow AI context, this means ungoverned tools operating with broad permissions can cause operational harm before any human knows the tool is active. See the OWASP Top 10 for Agentic Applications for a detailed threat framework.

What should an organization do first to address Shadow AI?

Ans: The highest-priority first step is visibility: deploying the technical tools needed to discover what AI is actually in use. The second step is policy: establishing a clear AI Acceptable Use Policy with tool classifications, data prohibitions, and role-based permissions. The third step is culture: creating a fast-track approval process and an internal AI AppStore so employees have better alternatives. The NIST AI RMF Playbook provides a structured, freely available governance resource for each of these steps.
