
TL;DR

Conversational AI and Generative AI were built to solve different problems: one focuses on understanding and managing human dialogue (chatbots, voice assistants), while the other focuses on creating new content (text, images, code). Conversational AI powers interactions like customer support and virtual assistants, while Generative AI powers content creation, automation, and creative workflows.

Today, the real shift isn’t choosing between them — it’s combining them. Modern systems integrate both (often using approaches like RAG and agentic AI) to deliver intelligent, context-aware, and fact-based outputs. Conversational AI handles the interaction layer; Generative AI handles the creation layer.

Ready to break it down? Here's what's covered:

- The Origin Stories — When and Why Each AI Was Born

- When It All Came to Picture — The Timeline of AI's Two Paths

- What They Actually Are — Deep Definitions Beyond the Basics

- The Core Technologies Powering Each

- How Conversational AI and Generative AI Are Transforming the Tech Industry

- Use Cases Across Key Sectors

- The Pros — Why These Technologies Are Genuinely Powerful

- The Cons and Risks — What the Hype Often Leaves Out

- The Honest Comparison — Key Differences Side by Side

- The Hybrid Future — RAG, Agentic AI, and the "AND" Approach

- How Ruh AI Is Putting It All Into Practice

- Responsible Use — Ethical Guardrails the Industry Cannot Ignore

- Final Thoughts

- Frequently Asked Questions

The Origin Stories — When and Why Each AI Was Born

To truly understand the difference between Conversational AI and Generative AI, you have to go back to where each began — not just technologically, but motivationally. They were not built from the same ambition. They were built to solve completely different problems.

The Birth of Conversational AI — Solving the Human-Machine Gap

Conversational AI traces its roots to ELIZA (MIT, 1966) — the world's first chatbot. ELIZA didn't understand language; it used pattern matching to simulate conversation. Yet users still reported feeling heard by it, revealing an enduring truth: humans crave dialogue, even with machines. By 1995, ALICE expanded on this with richer rule sets, but genuine understanding was still absent — both systems were scripted illusions.
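To make "pattern matching without understanding" concrete, here is a minimal sketch in the spirit of ELIZA. The rules and replies are hypothetical illustrations, not the original ELIZA script, and the sketch omits details such as pronoun reflection.

```python
import re

# Hypothetical rules for illustration only; these are not ELIZA's original script.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father|family)\b", re.IGNORECASE), "Tell me more about your {0}."),
]
FALLBACK = "Please go on."


def respond(utterance: str) -> str:
    """Return the reply for the first rule whose pattern matches, else a stock fallback."""
    text = utterance.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            # The captured fragment is echoed back verbatim; nothing is "understood".
            return template.format(match.group(1))
    return FALLBACK


if __name__ == "__main__":
    print(respond("I feel ignored at work."))  # Why do you feel ignored at work?
    print(respond("The weather is nice."))     # Please go on.
```

The program never models meaning: it only reflects fragments of the user's own words, which is why such systems broke as soon as input left the script.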

Two commercial forces drove continued investment: the customer service scale problem (businesses couldn't afford to staff call centers indefinitely) and the accessibility problem (natural language was the most universal computing interface). The real breakthrough came when natural language processing (NLP), machine learning (ML), and natural language understanding (NLU) converged, giving machines the ability to parse language structure, learn from data, and understand semantic meaning rather than just syntax. Add Automatic Speech Recognition (ASR), and the result was Siri (2011), Alexa (2014), and Google Assistant (2016): voice-powered AI entering hundreds of millions of homes.

Conversational AI had arrived — not in a lab, but in a kitchen.

The Birth of Generative AI — Solving the Creativity and Content Problem

Generative AI began with a deceptively simple question: Can machines create? Early attempts in the 1950s–60s produced grammatically dubious, contextually meaningless text — statistical noise dressed as language. Progress stalled for decades because creating something coherent required pattern recognition at a depth rule-based systems couldn't reach.

Two breakthroughs changed everything. In 2014, Ian Goodfellow and colleagues introduced Generative Adversarial Networks (GANs): two competing neural networks, a generator and a discriminator, that iteratively push each other until the generator's images become hard to tell apart from real photographs. Then in 2017, Google's landmark paper "Attention Is All You Need" introduced the Transformer architecture, enabling models to process entire sequences in parallel and weigh contextual relationships at unprecedented scale. This gave birth to the LLM era: GPT-1 (2018), GPT-3 (2020), and ultimately ChatGPT (November 2022), which reached an estimated 100 million users in about two months, at the time the fastest consumer application adoption on record.

The commercial motivation was clear: human creative capacity doesn't scale. A 500,000-product catalog, a million personalized emails, a continuous stream of marketing assets — these volumes are impossible for human teams alone. Generative AI was the answer to every industry's content bottleneck.

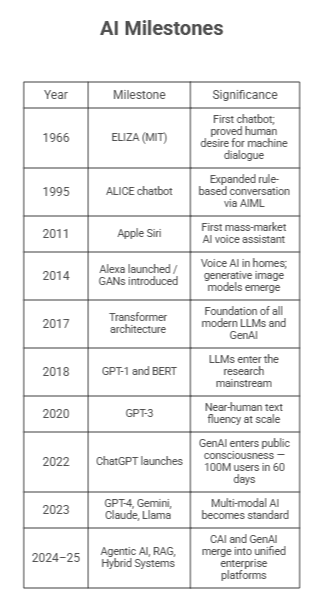

When It All Came to Picture — The Timeline of AI's Two Paths

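These technologies developed in parallel for decades before converging. Here's the critical spine of that journey, using the milestones covered above:

- 1966: ELIZA (MIT), the first chatbot, built on pattern matching.

- 1995: ALICE extends the rule-based approach with richer rule sets.

- 2011: Siri brings voice-based Conversational AI to consumers.

- 2014: Alexa launches; Ian Goodfellow introduces GANs.

- 2016: Google Assistant launches.

- 2017: "Attention Is All You Need" introduces the Transformer architecture.

- 2018: GPT-1.

- 2020: GPT-3.

- November 2022: ChatGPT.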

The arc is clear: two separate paths, architectural convergence around 2017–2020, and full application-level merger now underway.

What They Actually Are — Deep Definitions Beyond the Basics

"AI that holds a conversation" and "AI that creates content" are accurate — and nearly useless for anyone making real technology decisions. Here is what these systems actually do.

Conversational AI — The Dialogue Architect

Conversational AI is engineered to facilitate two-way, contextually coherent, multi-turn interaction between humans and machines. The key word is multi-turn — unlike a search engine, it maintains context across an entire exchange: remembering what was said earlier, tracking shifting intent, and managing the flow of the conversation itself.

The technical stack:

- NLP (Natural Language Processing): Parses language structure, including grammar, syntax, and named entities.

- NLU (Natural Language Understanding): Goes beneath structure to meaning, identifying what the user intends, not just what they typed.

- Dialogue Management: Tracks conversation state across turns, decides the appropriate next action, maintains coherence.

- ASR (Automatic Speech Recognition): Converts speech to text, enabling voice-based interfaces.

- NLG (Natural Language Generation): Constructs fluent, contextually appropriate responses.

Modern Conversational AI handles ambiguity, follow-up questions, and topic shifts far more gracefully. Its predecessor, the decision-tree chatbot, broke the moment a user stepped outside the script. Today's systems are much more resilient, though not infallible.
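The stack above can be sketched as a small multi-turn loop. This is a toy under stated assumptions: the city and day vocabularies, the `book_flight` intent, and the reply templates are all hypothetical, keyword lookup stands in for trained NLU models, and ASR is left out because the input is already text.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

# Hypothetical vocabularies for the toy NLU step; real systems use trained models.
CITIES = {"lisbon", "paris", "tokyo"}
DAYS = {"monday", "tuesday", "wednesday", "thursday", "friday", "saturday", "sunday"}


@dataclass
class DialogueState:
    """Conversation state carried across turns."""
    intent: Optional[str] = None
    slots: Dict[str, str] = field(default_factory=dict)


def understand(utterance: str) -> Tuple[Optional[str], Dict[str, str]]:
    """Toy NLU: keyword intent detection plus dictionary-based slot extraction."""
    words = re.findall(r"[a-z]+", utterance.lower())
    intent = "book_flight" if "flight" in words else None
    slots: Dict[str, str] = {}
    for word in words:
        if word in CITIES:
            slots["destination"] = word.title()
        elif word in DAYS:
            slots["day"] = word.title()
    return intent, slots


def next_turn(state: DialogueState, utterance: str) -> str:
    """Dialogue management plus template NLG: update state, then choose the next action."""
    intent, slots = understand(utterance)
    state.intent = intent or state.intent  # keep the earlier intent on follow-up turns
    state.slots.update(slots)
    if state.intent != "book_flight":
        return "How can I help you today?"
    if "destination" not in state.slots:
        return "Where are you flying to?"
    if "day" not in state.slots:
        return "Which day do you want to travel?"
    return f"Booking a flight to {state.slots['destination']} on {state.slots['day']}."
```

Calling `next_turn` three times on the same `DialogueState` with "I need a flight", "Lisbon", and "Friday please" walks through both follow-up questions and ends with a confirmation. The state object, not any single utterance, is what makes the exchange multi-turn.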

Generative AI — The Creative Engine

Generative AI is designed to autonomously produce original artifacts — text, images, audio, video, and code — by learning the underlying patterns and distributions present in training data. It does not store and retrieve content like a database. It synthesizes — generating new sequences that are statistically consistent with what it learned, but never existed in that exact form before.
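A toy way to see "learn the distribution, then sample from it" is a bigram model. This is an illustrative sketch only: modern generative models are vastly larger neural networks, and the corpus and function names here are hypothetical.

```python
import random
from collections import defaultdict
from typing import Dict, List


def train_bigrams(corpus: str) -> Dict[str, List[str]]:
    """Record, for every word, the words observed immediately after it."""
    words = corpus.split()
    followers: Dict[str, List[str]] = defaultdict(list)
    for current, following in zip(words, words[1:]):
        followers[current].append(following)
    return dict(followers)


def generate(model: Dict[str, List[str]], start: str, length: int, seed: int = 0) -> str:
    """Sample a sequence word by word from the learned follower lists."""
    rng = random.Random(seed)
    output = [start]
    # Stop early if the last word was never seen with a follower.
    while len(output) < length and output[-1] in model:
        output.append(rng.choice(model[output[-1]]))
    return " ".join(output)


if __name__ == "__main__":
    model = train_bigrams("the cat sat on the mat and the dog sat on the rug")
    print(generate(model, "the", 8))
```

Every adjacent word pair in the output was seen in training, yet the full sentence may never have appeared there. That is the same retrieve-versus-synthesize distinction, at a very small scale.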

Core model architectures:

- LLMs (GPT-4, Claude, Gemini): Billion-parameter networks that generate coherent, domain-spanning text.

- GANs: Two adversarial networks that iteratively produce photorealistic images and synthetic data.

- VAEs (Variational Autoencoders): Probabilistic models for controlled, variation-aware generation.

- Diffusion Models: The architecture behind DALL-E 2 and Stable Diffusion, which turn noise into structured, high-quality images through iterative denoising.

- Transformers: The foundational architecture behind LLMs and many recent image and audio generators. GANs and VAEs predate it and do not depend on it.
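The contextual weighting at the heart of the Transformer is scaled dot-product attention, softmax(QK^T / sqrt(d_k))V, as defined in "Attention Is All You Need". Below is a minimal pure-Python sketch on nested lists. It assumes a single head with no masking or learned projections, which real models add.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over one row of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries: Matrix, keys: Matrix, values: Matrix) -> Matrix:
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs
```

Each query row is independent of the others, which is why the computation parallelizes across an entire sequence.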

The Core Technologies Powering Each

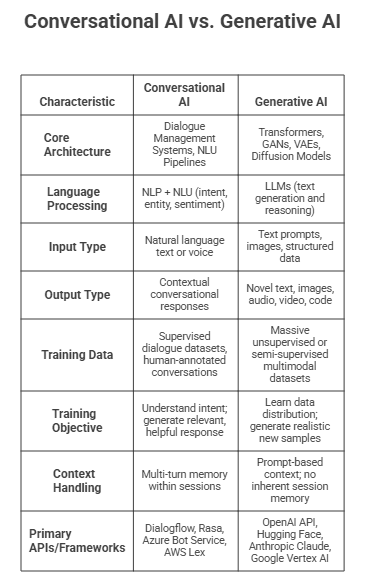

A side-by-side look at the technical building blocks that define each system:

How Conversational AI and Generative AI Are Transforming the Tech Industry

This is where the difference between these two AI paradigms stops being a theoretical distinction and starts being a business reality. Across every major sector of the technology industry, both forms of AI are reshaping operations, competitive dynamics, and value creation — but in distinctly different ways.

Transforming Software Development

Conversational AI in dev:

- AI-powered developer assistants that answer technical questions in natural language — eliminating time spent searching documentation.

- Customer-facing chatbots that handle first-tier technical support, dramatically reducing ticket volume to human engineers.

- Internal knowledge management bots that surface institutional knowledge on demand.

Generative AI in dev:

- Code generation tools like GitHub Copilot use LLMs to autocomplete functions, suggest implementations, and write boilerplate, cutting the time developers spend on repetitive tasks.

- Automated test generation — identifying edge cases and writing unit tests that human developers often deprioritize under deadline pressure.

- Documentation generation — converting code into human-readable explanations automatically.

- Bug detection and refactoring suggestions that review entire codebases for anti-patterns.

The combination is particularly powerful: a developer queries a Conversational AI assistant about an architectural question, receives a context-aware dialogue to clarify requirements, and then uses Generative AI to produce the initial implementation — which is then reviewed and refined by the human engineer.

Transforming Enterprise Operations

Conversational AI:

- Scales customer support globally without proportional headcount — one well-designed Conversational AI deployment can handle thousands of simultaneous interactions.

- Enables 24/7 service availability at a fraction of the cost of human agents.

- Manages internal HR queries, IT helpdesk tickets, and onboarding processes through natural language interfaces.

For a concrete example of how this plays out in revenue operations, Ruh AI's AI SDR platform shows how Conversational AI is being deployed at the top of the sales funnel — replacing the most repetitive and scale-limited parts of B2B prospecting with autonomous agents.

Generative AI:

- Produces first drafts of internal documents, policy memos, sales proposals, and executive summaries in minutes.

- Creates marketing assets — ad copy, product descriptions, email campaigns — at scale and with personalization.

- Synthesizes data from multiple reports into coherent executive briefings.

Transforming the SaaS Landscape

The AI-first SaaS transition is perhaps the most dramatic industry shift Conversational AI and Generative AI are collectively driving. Products that were previously workflow tools are becoming intelligent systems that anticipate user needs, generate content autonomously, and communicate in natural language rather than requiring users to navigate menus and fill forms.

The competitive implication: any SaaS product that does not integrate some form of AI within the next 24–36 months risks being displaced by a newer entrant that was AI-native from day one.

Transforming Data and Analytics

Generative AI is changing how organizations interact with their own data. Instead of requiring a data analyst to write SQL queries, business users can now ask questions in plain English — a Conversational AI interface parses the intent, a Generative AI model translates it into executable code, and the results are summarized back in natural language. The entire analytics workflow, from question to insight, can happen in seconds without technical intermediaries.
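A minimal sketch of that question-to-insight loop, using an in-memory SQLite database. The `generate_sql` function is a hard-coded stand-in for the generative model, the `sales` table and its rows are hypothetical, and the read-only check is one simple guardrail, not a complete defense.

```python
import sqlite3


def generate_sql(question: str) -> str:
    """Stand-in for the generative model; a real system would prompt an LLM here."""
    lowered = question.lower()
    if "revenue" in lowered and "region" in lowered:
        return "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"
    raise ValueError("question not covered by this toy translator")


def is_read_only(sql: str) -> bool:
    """Guardrail: allow only a single SELECT statement to run."""
    stripped = sql.strip().rstrip(";")
    return stripped.lower().startswith("select") and ";" not in stripped


def answer(conn: sqlite3.Connection, question: str) -> str:
    """Translate the question, run the query, and summarize the rows as text."""
    sql = generate_sql(question)
    if not is_read_only(sql):
        raise PermissionError("generated SQL rejected")
    rows = conn.execute(sql).fetchall()
    return "; ".join(f"{region}: {total}" for region, total in rows)


def make_demo_db() -> sqlite3.Connection:
    """Build a tiny hypothetical sales table for the example."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (region TEXT, amount INTEGER)")
    conn.executemany(
        "INSERT INTO sales VALUES (?, ?)",
        [("EMEA", 120), ("APAC", 80), ("EMEA", 30)],
    )
    return conn
```

With the demo data, `answer(make_demo_db(), "What is revenue by region?")` returns `"APAC: 80; EMEA: 150"`. Validating generated SQL before execution matters because the model's output is untrusted input to the database.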

Use Cases Across Key Sectors

Education and Research

Conversational AI: Adaptive tutoring bots that adjust pacing and depth to individual comprehension; dynamic quiz generation targeting specific knowledge gaps; syllabi drafting for educators; real-time assignment feedback without waiting for instructor cycles.

Generative AI: First-draft academic writing support; literature review synthesis across hundreds of papers; raw data conversion into structured narrative reports.

The dual edge: the same tool that provides personalized tutoring to a student in an under-resourced school district can enable that student to submit AI-generated work as their own — with consequences for institutional trust and long-term intellectual development. The UNESCO framework on AI in education highlights exactly this tension as a policy priority.

Healthcare

Conversational AI: Patient intake bots that collect history, symptoms, and insurance information pre-consultation; prescription query handling; appointment scheduling and no-show reduction; CBT-based mental health support between therapy sessions.

Generative AI: Clinical note generation from physician dictations; drug discovery literature synthesis; synthetic medical imaging data for radiology model training, which reduces reliance on identifiable patient data. The NHS AI Lab and FDA's AI/ML framework both track how these deployments are scaling across healthcare systems. For a practitioner-level look at how AI employees are being embedded in clinical and administrative workflows without displacing human judgment, Ruh AI's piece on AI in healthcare (augmenting human excellence) is a useful read.

Finance

Conversational AI: Natural language interfaces for account inquiries, credit queries, and mortgage status; conversational fraud alert engagement; loan pre-screening bots.

Generative AI: Automated earnings summaries and investment research briefs; synthetic transaction data for fraud detection training; personalized financial guidance at customer scale.

Ruh AI's dedicated analysis of AI employees in financial services covers how these deployments are being structured in regulated environments where accuracy and auditability are non-negotiable.

Creative Industries

Generative AI's most visible — and most debated — application domain. Writers use it to overcome writer's block and generate drafts; designers compress weeks of iteration into hours with AI-generated mockups and brand assets; marketing teams run A/B tests at scale with dozens of AI-generated copy variations; musicians use AI-generated frameworks as compositional starting points. The output belongs to humans who direct and refine it — but the ratio of human effort to finished artifact has shifted dramatically. For marketing teams specifically, Ruh AI's analysis of how AI eliminates campaign bottlenecks in marketing operations shows how the content production and campaign execution problem is being solved at the operational level.

The Pros — Why These Technologies Are Genuinely Powerful

Advantages of Conversational AI

1. Availability Without Limits Conversational AI operates continuously — 24 hours a day, 7 days a week, 365 days a year — without fatigue, sick days, shift constraints, or performance degradation. For globally distributed businesses, this is not just an efficiency gain; it is a fundamental service model change.

2. Cost Efficiency at Scale A single Conversational AI deployment can handle thousands of simultaneous conversations at a marginal cost per interaction that approaches zero as volume increases. The economics are structurally superior to human staffing for high-volume, routine interaction workloads.

3. Consistency and Quality Control Unlike human agents whose performance varies by shift, mood, and workload, Conversational AI delivers consistent response quality across every interaction — every policy applied uniformly, every escalation pathway followed correctly.

4. Enhanced Accessibility Voice-based and text-based Conversational AI interfaces make technology accessible to users who cannot operate conventional GUIs due to disability, limited literacy, or language barriers. This is not a peripheral benefit — it represents access to services for entire populations previously excluded.

5. Personalization at Depth Modern Conversational AI tracks user preferences, interaction history, and behavioral patterns to deliver experiences that feel individually tailored — without human agents needing to manually review customer records before each interaction.

6. Operational Intelligence Every interaction produces structured data about user intent, pain points, and behavior patterns — creating a continuous stream of customer intelligence that improves product and service design over time.

Advantages of Generative AI

1. Elimination of the Content Bottleneck The single most transformative advantage: Generative AI dissolves the relationship between creative output volume and human headcount. Content that once required a team of writers, designers, and data analysts can now be produced in a fraction of the time by a much smaller team with AI assistance.

2. Creative Augmentation Contrary to fears of replacement, Generative AI functions most effectively as an amplifier of human creativity. It generates initial drafts and unexpected directions that human creators then refine, redirect, and elevate — compressing the ideation phase and allowing more time for high-level creative judgment.

3. Cross-Modal Output Diversity A single Generative AI platform can produce text, images, audio, video, and code — allowing cross-disciplinary creative workflows to happen within unified toolsets rather than requiring specialist handoffs at every stage.

4. Scalable Personalization GenAI makes true 1:1 personalization of content economically viable at enterprise scale. An e-commerce platform can generate unique product descriptions, personalized email campaigns, and individualized recommendations for every customer segment — without proportionally scaling its content team. In sales, this is the principle behind AI-powered SDR platforms like Sarah from Ruh AI, which generate unique, research-backed outreach for each prospect rather than blasting templates.

5. Synthetic Data Generation In machine learning development, data is the constraint. Generative AI can produce synthetic datasets that mirror the statistical properties of real data without exposing private information — accelerating model development in healthcare, finance, and other privacy-sensitive domains.

6. Acceleration of Research and Development Generative AI condenses literature review, hypothesis generation, and preliminary analysis — compressing research cycles that previously took months into workflows that take days.
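The synthetic data point (item 5) can be illustrated with the simplest possible case: fit summary statistics to a real column, then sample new values from the fitted distribution. This sketch assumes the data is roughly Gaussian and uses hypothetical values. Production systems use richer generative models and add formal privacy checks, which this does not.

```python
import random
import statistics
from typing import List, Tuple


def fit_gaussian(values: List[float]) -> Tuple[float, float]:
    """Estimate the mean and sample standard deviation of the real data."""
    return statistics.mean(values), statistics.stdev(values)


def sample_synthetic(mean: float, stdev: float, n: int, seed: int = 0) -> List[float]:
    """Draw n synthetic values from the fitted distribution; no real record is copied."""
    rng = random.Random(seed)
    return [rng.gauss(mean, stdev) for _ in range(n)]


if __name__ == "__main__":
    # Hypothetical "real" measurements.
    real = [42.0, 55.5, 48.2, 61.0, 39.8, 52.3, 47.1, 58.9]
    mean, stdev = fit_gaussian(real)
    synthetic = sample_synthetic(mean, stdev, n=5000)
    print(round(statistics.mean(synthetic), 1), round(statistics.stdev(synthetic), 1))
```

The synthetic sample tracks the mean and spread of the original without containing any original record. Matching two statistics is not a privacy guarantee by itself.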

The Cons and Risks — What the Hype Often Leaves Out

This is the section most AI vendor content deliberately underweights. Understanding the limitations and risks of Conversational AI and Generative AI is not pessimism — it is due diligence. For a practical framework on navigating these risks during deployment, Ruh AI's complete guide on how organizations should use AI covers the implementation decisions that determine whether AI deployments succeed or fail in production.

Risks and Limitations of Conversational AI

1. Context Loss in Complex Conversations Despite significant advances, even sophisticated Conversational AI systems struggle to maintain coherent context across very long or highly complex exchanges. When a conversation branches across multiple topics or spans many turns, systems can lose track of earlier context — producing responses that feel inconsistent or contradictory.

2. Emotional Intelligence Gaps Sentiment analysis remains an imperfect science. Nuanced emotional states — layered sarcasm, complicated grief, culturally specific communication norms — are frequently misread. In high-stakes interactions (mental health support, bereavement services, crisis response), misreading emotional context is not just suboptimal; it can be actively harmful.

3. Privacy and Data Retention Risks Users of enterprise Conversational AI systems frequently share sensitive personal information — health details, financial circumstances, relationship issues — in the belief that their data is protected. Without rigorous data governance, conversation logs can become a privacy liability. Users may not be fully informed about how their conversational data is stored, used, or whether it may be used to further train AI models.

4. Scripted Ceiling for Edge Cases Without generative capabilities, purely Conversational AI systems have an inherent ceiling: they cannot handle queries they have not been trained to anticipate. Edge cases that fall outside training distribution receive either failed responses or graceless escalation — eroding user trust precisely when the user most needs help.

5. Dependency and Deskilling Organizations that over-deploy Conversational AI without maintaining human fallback capacity risk losing the institutional knowledge required to handle complex cases. When the AI fails — and all systems fail — the human expertise to manage escalation may have atrophied.

Risks and Limitations of Generative AI

1. Hallucinations — The Confidence of Fiction GenAI produces factually incorrect content with the same fluency and confidence as accurate content. The model cannot distinguish what it knows from what it is fabricating. In creative contexts this is an error; in medical, legal, or financial contexts it can cause direct harm. Hallucinated academic citations — plausible-sounding references that do not exist — are a well-documented and particularly damaging example.

2. Bias Amplification Models trained on human-generated data inherit human bias — racial stereotypes, gender assumptions, political skews — and can amplify and codify them into outputs at scale. Some LLMs have demonstrated measurable political biases on complex or ambiguous questions, not by design, but because their training data reflected those biases statistically.

3. Academic Integrity and Plagiarism at Scale GenAI can mimic human writing well enough to evade casual detection — and at arbitrary speed and volume. Detection tools like DetectGPT, GPTZero, and AICheatCheck are improving, but it remains a perpetual arms race. Discipline-specific content (mathematical proofs, simulation models, computer code) presents detection challenges that general text-based tools handle poorly.

4. Intellectual Property and Copyright Ambiguity Many generative models were trained on scraped copyrighted material without explicit permission. The legality is being actively litigated — the U.S. Copyright Office's AI policy guidance and ongoing federal cases are shaping what protection, if any, applies to AI-generated outputs. Who owns AI-generated content — the user, the model provider, or the creators whose work trained it — remains legally unresolved in most jurisdictions. AI outputs that closely resemble training data may constitute derivative works, creating infringement liability for commercial deployers.

5. Over-Reliance and Skill Erosion Students who generate papers without writing them may graduate with credentials but without capability. Junior professionals who rely on AI for every first draft may never develop the independent reasoning that creates senior-level expertise. Mentorship — the tacit, contextual knowledge transferred between experienced and developing professionals — cannot be replicated by a language model.

6. Misinformation at Scale Producing convincing disinformation once required a team. Now it requires a prompt. The MIT Media Lab's research on AI-generated misinformation and Stanford Internet Observatory both document the accelerating implications for elections, public health communication, and institutional trust.

The Honest Comparison — Key Differences Side by Side

The Hybrid Future — RAG, Agentic AI, and the "AND" Approach

The industry has stopped debating Conversational AI versus Generative AI. The most capable systems combine both — and the results are substantially more powerful than either approach alone.

Retrieval-Augmented Generation (RAG) — Grounding GenAI in Truth

RAG is the most significant hybrid innovation in enterprise AI today. It directly addresses GenAI's most dangerous flaw: hallucination. First formally introduced in the 2020 Lewis et al. paper from Facebook AI Research, RAG has since become foundational to enterprise AI deployments from AWS to Google Cloud to Microsoft Azure.

Standard generative models rely on static training data. When queried on recent, proprietary, or highly specific information, they can only generate text that seems plausible, which makes fabrication a real risk. RAG mitigates this by shifting the model's role from creator of information to summarizer of retrieved, verified documents.

The four-step RAG workflow:

- Retrieval: User query is converted into a search and sent to a trusted document repository.

- Capture: Relevant verified documents are returned.

- Augmentation: The LLM receives both the query and the retrieved documents.

- Grounded Output: The model summarizes the source material rather than generating from memory.

RAG is an open-book exam for AI — instead of answering from memory, the system hands the model its reference materials and asks it to explain them.

RAG grounds AI responses in live enterprise data — internal documentation, patient records, financial filings, product specs — producing predictable, compliant, hallucination-resistant outputs suitable for high-stakes deployment in healthcare, finance, and legal contexts.
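The four-step workflow can be sketched end to end in a few lines. This is a toy under stated assumptions: the document store is a hypothetical in-memory dictionary, retrieval uses plain word overlap where real deployments typically use embedding-based vector search, and `llm` is any callable the caller supplies.

```python
import re
from collections import Counter
from typing import Callable, Dict, List

# Hypothetical trusted repository.
DOCUMENTS: Dict[str, str] = {
    "refund-policy": "Refunds are issued within 14 days of purchase with a valid receipt.",
    "shipping": "Standard shipping takes 3 to 5 business days within the country.",
    "warranty": "Hardware is covered by a 2 year limited warranty.",
}
STOPWORDS = {"a", "an", "the", "is", "are", "of", "to", "by", "with", "within",
             "do", "how", "what", "many"}


def tokenize(text: str) -> Counter:
    """Lowercase word counts with common stopwords removed."""
    return Counter(t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS)


def retrieve(query: str, documents: Dict[str, str], top_k: int = 1) -> List[str]:
    """Retrieval and capture: rank documents by word overlap with the query."""
    q = tokenize(query)
    scored = [(sum((q & tokenize(body)).values()), doc_id) for doc_id, body in documents.items()]
    ranked = sorted(scored, reverse=True)
    return [doc_id for score, doc_id in ranked[:top_k] if score > 0]


def build_prompt(query: str, doc_ids: List[str], documents: Dict[str, str]) -> str:
    """Augmentation: hand the model the query together with the retrieved passages."""
    context = "\n".join(f"[{d}] {documents[d]}" for d in doc_ids)
    return f"Answer using only the sources below.\n{context}\n\nQuestion: {query}"


def answer(query: str, llm: Callable[[str], str], documents: Dict[str, str] = DOCUMENTS) -> str:
    """Grounded output: refuse when nothing relevant was retrieved."""
    doc_ids = retrieve(query, documents)
    if not doc_ids:
        return "I could not find this in the knowledge base."
    return llm(build_prompt(query, doc_ids, documents))
```

The refusal branch is the important design choice: when retrieval returns nothing, the system declines instead of letting the model answer from memory.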

Agentic AI — The Third Category

The convergence is also producing Agentic AI: systems that don't just respond or create, but autonomously plan and execute multi-step workflows. An agentic system receives a high-level objective, decomposes it into subtasks, retrieves and synthesizes information across sources, generates a structured output, and flags gaps for human review, all with minimal manual intervention. This is only possible when Conversational AI's intent management is fused with Generative AI's synthesis capability. Designing these agents so they behave predictably, and don't fail in ways that undermine trust, is covered in depth in Ruh AI's analysis of AI agents that refuse commands and the fatal design flaws behind unstable production deployments.
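The plan, execute, and flag loop described above can be sketched as follows. Everything here is a hypothetical illustration: the fixed three-step `plan` stands in for an LLM planner, and tools are plain callables matched by name prefix.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Report:
    """Structured output: completed findings plus steps that need a human."""
    findings: Dict[str, str] = field(default_factory=dict)
    needs_review: List[str] = field(default_factory=list)


def plan(objective: str) -> List[str]:
    """Stand-in planner; a real agent would ask an LLM to decompose the objective."""
    steps = ("gather sources", "summarize findings", "estimate cost")
    return [f"{step} for {objective}" for step in steps]


def run_agent(objective: str, tools: Dict[str, Callable[[str], str]]) -> Report:
    """Execute each subtask with a matching tool; flag the ones no tool can handle."""
    report = Report()
    for subtask in plan(objective):
        tool = next((fn for name, fn in tools.items() if subtask.startswith(name)), None)
        if tool is None:
            report.needs_review.append(subtask)  # gap: escalate instead of guessing
            continue
        report.findings[subtask] = tool(subtask)
    return report
```

If the agent is given tools for only the first two steps, the cost estimate ends up in `needs_review`. Surfacing gaps explicitly, instead of improvising an answer, is what keeps autonomous behavior predictable.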

In practice today:

- Intelligent Virtual Agents handling end-to-end customer journeys without escalation — like Ruh AI's approach to customer journey mapping with AI, where dialogue intelligence and generation are combined to adapt the journey in real time.

- Automated research summarization across multiple live data sources.

- AI-driven dev pipelines moving from requirements to implementation, tests, and documentation. Teams working in this space will find Ruh AI's guide to prompt engineering for production AI agents directly applicable to the workflow orchestration layer.

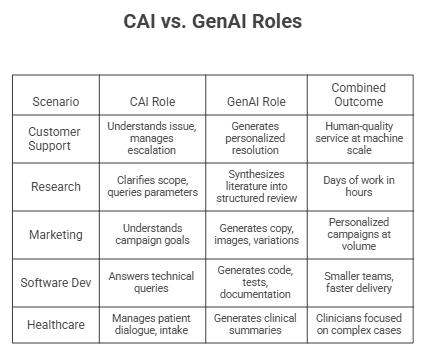

How the Combination Makes Everything Easier

How Ruh AI Is Putting It All Into Practice

Ruh AI is a direct example of the hybrid architecture this article describes — a platform where Conversational AI and Generative AI are integrated by design, not assembled from disconnected tools.

Sarah — The AI SDR

The clearest illustration is Sarah, Ruh AI's AI Sales Development Representative. Sarah uses Conversational AI to handle multi-turn prospect dialogue — qualifying intent, managing objections, maintaining context — while simultaneously using Generative AI to produce personalized, research-backed outreach for each account. The Ruh AI SDR platform operationalizes this at the top of the sales funnel, replacing the most repetitive part of B2B prospecting with an agent that runs continuously. Their analysis of AI-driven cold outreach in 2026 covers how this shift is playing out in practice.

Sales, Marketing, Finance, and Healthcare

Ruh AI applies the same integrated logic across sectors. In marketing, they address the campaign bottleneck — where GenAI produces volume but without conversational grounding produces volume without relevance — through AI-driven marketing operations and AI-powered customer journey mapping. In regulated industries, their sector-specific work on AI in financial services and AI in healthcare reflects the same principle: AI augments rather than replaces, and operates within defined accountability boundaries.

Building AI Agents That Actually Work — Ruh AI's Technical Depth

Most AI deployments fail not from picking the wrong model but from poor agent architecture. Ruh AI has documented the fatal design flaws that cause production AI agents to fail and published a complete organizational AI implementation guide that bridges conceptual understanding and operational execution. For teams building agents: their RLHF guide for self-improving AI agents covers how to make systems improve through deployment, and their prompt engineering guide for production AI agents addresses the design patterns that separate reliable production systems from fragile demos. The infrastructure that holds all of this together is explored in their analysis of AI in MLOps.

Explore the Ruh AI blog for applied coverage of these topics, or reach out via the Ruh AI contact page to discuss implementation.

Responsible Use — Ethical Guardrails the Industry Cannot Ignore

Regulatory pressure, reputational risk, and legal liability are converging to make ethical AI governance a business imperative, not a CSR exercise. The NIST AI Risk Management Framework and the EU AI Act are the two most consequential frameworks shaping how organizations must act.

Transparent Disclosure: Users have a right to know when they are interacting with AI — in customer communications, journalism, marketing, and academic submissions. This norm is increasingly codified in law.

Data Privacy: Every Conversational AI deployment must define what data is collected, how long it is retained, and whether it feeds model retraining. The GDPR (including Article 22 on automated decision-making) and the CCPA impose direct obligations here. Generative AI adds further complexity: creators whose work trained these models were rarely informed or compensated, and legal frameworks to address this are still forming.

Bias Auditing: Bias encoded in training data becomes bias encoded in outputs — at machine scale. In hiring, lending, healthcare, and legal contexts, this is not a theoretical concern. Regular, independent audits are not optional.

IP Compliance: Organizations deploying GenAI for commercial content must assess training data provenance. The U.S. Copyright Office's AI guidance and active litigation are rapidly reshaping what liability looks like.

Accountability: When AI-generated content causes harm, responsibility must be assigned clearly — to the deploying organization, the model provider, or the approving human. Building accountability frameworks before incidents occur is the only viable approach.

Human Skill Preservation: The deepest long-term risk is gradual: AI replacing the learning process rather than supporting it. Institutional guidelines must distinguish between AI as an accelerator and AI as a substitute for developing genuine human capability.
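As one concrete form the bias auditing guardrail above can take, a common first screen compares approval rates across groups. The sketch below computes a disparate impact ratio and applies the four-fifths (0.8) threshold used as a rule of thumb in US employment contexts. The decision data is hypothetical, and a real audit would go well beyond this single metric.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Map each group to its approval rate, given (group, approved) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    approved: Dict[str, int] = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates: Dict[str, float]) -> float:
    """Lowest group rate divided by highest group rate; 1.0 means parity."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved in any group, so rates are equal
    return min(rates.values()) / highest


if __name__ == "__main__":
    # Hypothetical model decisions: group A approved 8 of 10, group B approved 5 of 10.
    decisions = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 5 + [("B", False)] * 5
    ratio = disparate_impact_ratio(selection_rates(decisions))
    print(f"ratio={ratio:.3f}", "flag for review" if ratio < 0.8 else "within threshold")
```

Here the ratio is 0.625, below the 0.8 threshold, so the deployment would be flagged for closer review.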

Final Thoughts

The difference between Conversational AI and Generative AI is not merely a technical distinction. It is a window into two fundamentally different theories of what machines can do for us — and two different challenges we face in deploying them responsibly.

Conversational AI was born from the need to make machines accessible and interactive — to bridge the gap between human language and machine logic in a way that felt natural, useful, and scalable. It has delivered on that promise: billions of daily interactions across customer service, healthcare, education, and enterprise operations now pass through Conversational AI systems that would have seemed science fiction thirty years ago.

Generative AI was born from the need to make machines creative and productive — to compress the distance between human imagination and artifact production in a way that multiplied rather than replaced human output. It has delivered on that promise too: content, code, images, and data that would have taken teams of specialists weeks to produce can now be generated in hours by systems accessible to non-specialists.

But the most important story is not the difference between them. It is what happens when they are combined — when Conversational AI's contextual intelligence is unified with Generative AI's creative capability under the governance of architectural innovations like RAG. The result is AI that is simultaneously accessible, capable, and grounded in verifiable fact.

The organizations — and the individuals — who will lead in the AI era are not those who choose between these technologies. They are those who understand both deeply enough to combine them strategically, govern them responsibly, and preserve the human judgment and creativity that no model, however powerful, has yet replicated.

The machine is talking. The machine is creating. But the decisions about what it says and what it makes are, for now, still ours.

Frequently Asked Questions

Q: Is ChatGPT conversational AI or generative AI?

Ans: ChatGPT is both, and understanding why reveals something important about where the two technologies are headed. Its core engine is a Generative AI system (a GPT-family Large Language Model). But it operates in a conversational interface that carries context across multiple turns. ChatGPT is perhaps the most visible example of what happens when Generative AI is wrapped in a Conversational AI framework: a system that can both create original content and engage in contextually coherent extended dialogue.

Q: What is the primary difference between conversational AI and generative AI in terms of objective?

Ans: Conversational AI's primary objective is facilitating interactive, contextually coherent dialogue — it is engineered to understand and respond. Generative AI's primary objective is autonomous content creation — it is engineered to learn patterns and produce original artifacts. One resolves interactions; the other creates outputs.

Q: How does RAG reduce hallucinations in generative AI?

Ans: RAG supplements the model's reliance on its own training memory with real-time retrieval of verified documents. Instead of generating an answer purely from probabilistic patterns, where the model may "fill in" information it does not actually have, RAG provides the model with the actual source material and asks it to summarize. As long as the correct answer exists in the connected document repository and the retrieval step surfaces it, the model is far more likely to produce a factually grounded response.

Q: What are the biggest risks of using generative AI in academic research?

Ans: The primary risks are: (1) hallucination, where AI generates plausible-sounding but fabricated citations, statistics, or claims; (2) plagiarism, where AI-generated text is submitted as original human work; (3) bias in outputs reflecting biases in training data; (4) data privacy exposure when sensitive research data is entered into commercial AI platforms; and (5) intellectual property complications around content generated from models trained on copyrighted academic material.

Q: Can conversational AI and generative AI replace human workers?

Ans: Neither is a wholesale replacement for human workers — and the evidence suggests that the most effective deployments augment rather than replace human capability. Conversational AI handles high-volume, routine interaction workloads, freeing human agents for complex cases requiring genuine empathy and judgment. Generative AI handles volume production of content and code, freeing human creatives and engineers for higher-order decisions, strategy, and quality oversight. The risk is not that these systems replace humans, but that organizations use them as an excuse to eliminate the human expertise needed to manage, govern, and improve them.

Q: Which is better for my business — conversational AI or generative AI?

Ans: The question is almost always a false choice. Most substantive business applications benefit from both. The right question is: Where in your operations is the constraint? If you are losing customers due to slow or inconsistent service response, Conversational AI addresses that. If your team is bottlenecked by content production volume, Generative AI addresses that. If both are true, and for most businesses they are, a hybrid architecture combining both is likely the right direction.
