TL;DR / Summary

In 2026, the gap between open-source and proprietary AI workforce platforms has essentially closed on raw model performance, but the right choice still hinges on your organization's engineering maturity, compliance obligations, total cost of ownership, and long-term AI roadmap. Open-source wins on flexibility and cost control; proprietary wins on speed-to-value and enterprise support. Many organizations planning for the long term are choosing both.

In this guide:

- Why the Open-Source vs. Proprietary Debate Resurfaced in 2026

- What "AI Workforce Platform" Actually Means in 2026

- The Open-Source AI Workforce Platform Landscape

- The Proprietary AI Workforce Platform Landscape

- Performance Is Now a Draw — What Actually Differentiates Them

- Total Cost of Ownership: The Real Numbers

- Security, Compliance, and Data Sovereignty in 2026

- When to Choose Open-Source

- When to Choose Proprietary

- The Hybrid Model: Why Most Enterprises Will Choose Both

- How Ruh AI Is Navigating the Open-Source vs. Proprietary Divide

- The 2026 Decision Framework: A Practical Checklist

- Frequently Asked Questions

Why the Open-Source vs. Proprietary Debate Resurfaced in 2026

For most of the last decade, the enterprise AI conversation was dominated by proprietary vendors. OpenAI, Google, and Anthropic set the pace, and organizations followed with API subscriptions, SaaS licensing deals, and vendor lock-in agreements. The assumption was simple: proprietary models were smarter, safer, and more reliable — and they were worth paying for.

That assumption cracked in January 2025, when DeepSeek released its R1 model — an open-source reasoning engine that matched GPT-4-class performance at a fraction of the training cost. By the end of 2025, the MMLU benchmark gap between open-source and proprietary models had narrowed from 17.5 percentage points to just 0.3. The performance parity was no longer theoretical. It was measurable.

At the same time, the enterprise AI category was rapidly evolving from "AI tools" to "AI workforce platforms" — full-stack systems designed not just to generate content or analyze data, but to autonomously execute work: scheduling, hiring, task routing, compliance monitoring, content production, customer interaction, and more. The stakes for choosing the wrong platform architecture rose dramatically.

This is the context of 2026's central enterprise AI question: should your AI workforce platform be built on open-source infrastructure, proprietary systems, or a deliberate hybrid?

This guide gives you a clear, evidence-based framework to make that decision.

What "AI Workforce Platform" Actually Means in 2026

Before comparing open-source and proprietary options, it's worth defining the category. An AI workforce platform in 2026 is more than an LLM integration or a chatbot layer. It typically includes:

- AI agents that can autonomously complete multi-step tasks without human intervention — running processes, generating outputs, and closing loops while your human team is offline

- Orchestration infrastructure to manage workflows, memory, and tool use across agents

- Data connectors that integrate with HRIS, CRM, ERP, and productivity tools

- Governance and compliance layers to monitor AI decisions, maintain audit trails, and enforce policy

- A model layer — either proprietary APIs, fine-tuned open-source models, or a hybrid routing system

According to Forrester's 2026 predictions, AI agents are no longer a future consideration — they are actively changing business models and workplace culture today. Leading platforms including Adobe, SAP, Salesforce, Workday, and ServiceNow have already woven AI agents into their core product architectures.

The question is no longer whether to adopt an AI workforce platform — it's how to build one that lasts.

The Open-Source AI Workforce Platform Landscape

The open-source AI ecosystem matured dramatically between 2024 and 2026. What was once a patchwork of experimental libraries is now a credible enterprise stack.

Leading Open-Source Agent Frameworks

LangGraph (by LangChain) is widely considered the most production-ready open-source orchestration framework for complex, stateful AI workflows. It enables cyclical graphs — meaning agents can loop, evaluate, retry, and branch — which is essential for real workforce automation tasks. According to Langfuse's 2025 framework comparison, LangGraph offers "the most control and best production readiness for complex workflows."
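The loop, evaluate, retry, and branch pattern can be illustrated without any framework. The sketch below is plain Python and is not LangGraph's API; the node names (`draft`, `evaluate`, `escalate`), the state keys, and the three-word acceptance rule are hypothetical stand-ins chosen only to show how a cyclical graph differs from a straight pipeline.

```python
from typing import Callable, Dict

State = Dict[str, object]


def draft(state: State) -> State:
    # Hypothetical work node: each attempt produces a slightly longer draft.
    attempts = int(state["attempts"]) + 1
    return {**state, "attempts": attempts, "draft": "report " * attempts}


def evaluate(state: State) -> State:
    # Hypothetical quality gate: accept drafts with at least three words.
    approved = len(str(state["draft"]).split()) >= 3
    return {**state, "approved": approved}


def escalate(state: State) -> State:
    # Out of retries: flag the task for a human instead of looping forever.
    return {**state, "needs_human": True}


def route_after_evaluate(state: State) -> str:
    # Branch: finish on approval, loop back while retries remain, else escalate.
    if state["approved"]:
        return "END"
    if int(state["attempts"]) < int(state["max_attempts"]):
        return "draft"
    return "escalate"


NODES: Dict[str, Callable[[State], State]] = {
    "draft": draft,
    "evaluate": evaluate,
    "escalate": escalate,
}

# Each edge is a function of the current state, which is what allows cycles.
EDGES: Dict[str, Callable[[State], str]] = {
    "draft": lambda state: "evaluate",
    "evaluate": route_after_evaluate,
    "escalate": lambda state: "END",
}


def run(state: State, start: str = "draft") -> State:
    node = start
    while node != "END":
        state = NODES[node](state)
        node = EDGES[node](state)
    return state


result = run({"attempts": 0, "max_attempts": 5, "draft": "", "approved": False})
print(result["attempts"], result["approved"])
```

The edge from `evaluate` back to `draft` is the cycle. A retry cap plus an escalation node keeps the loop bounded, which matters for unattended workforce tasks.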

CrewAI excels at multi-agent collaboration — scenarios where a "Planner" agent coordinates a "Researcher," "Writer," and "Reviewer" in parallel. Its approachable syntax and built-in memory modules — which allow agents to retain context across sessions and tasks — have made it the go-to framework for teams that need to move quickly. CrewAI is especially well-suited for parallelized, role-based workforce tasks.

AutoGen (Microsoft Research) takes an asynchronous, conversation-driven approach to agent orchestration. Because agents communicate as non-blocking participants, AutoGen handles long-running tasks gracefully — critical in workforce platforms where tasks may span hours or days.

Haystack, LlamaIndex, and Mastra round out the mid-tier, each offering specialized strengths in retrieval-augmented generation, knowledge management, and TypeScript-native agent development respectively.

Leading Open-Source Models

On the model side, Meta's Llama 3.x, Mistral Large, DeepSeek V3.2, Alibaba's Qwen, and Google's Gemma families have collectively democratized frontier-class AI. According to AIMojo's 2026 open-source AI state analysis, 89% of organizations using AI already leverage open-source models in some form, and Chinese labs alone are shipping new top-performing models every 4–6 weeks.

For workforce platforms specifically, the appeal of open-source models includes:

- Zero per-token API costs after self-hosting setup

- Fine-tuning capability to encode domain-specific expertise

- Data residency — sensitive employee or customer data never leaves your infrastructure

- Model portability across cloud providers and on-premise environments

The Proprietary AI Workforce Platform Landscape

Proprietary platforms offer something open-source cannot easily replicate: deep integration, enterprise SLAs, and a single vendor relationship that simplifies procurement, support, and accountability.

Major Proprietary Workforce AI Platforms

Microsoft 365 Copilot remains the most widely deployed AI workforce tool in enterprise settings. Embedded into Word, Excel, Teams, PowerPoint, and Outlook, Copilot leverages Microsoft's multi-model infrastructure including GPT-4o, Phi, and third-party models routed through Azure AI Foundry. The addition of Copilot Studio enables low-code agent building for non-developers, while the A2A (Agent-to-Agent) protocol allows cross-platform agent communication. Enterprises report an average ROI of 116% with Microsoft Copilot deployments.

Workday's Enterprise AI Platform has become a reference architecture for AI-native HR and workforce management. Backed by over 1,800 enterprise customers in retail and hospitality alone, Workday's AI layer includes Paradox for conversational recruiting, an Agent System of Record for tracking autonomous actions, and deep integrations with scheduling, payroll, and performance data. Josh Bersin's March 2026 analysis describes Workday's new AI strategy as "bold, integrated, and genuinely workforce-native."

SAP Joule is embedded across SAP's cloud suite and accessed via natural language — letting HR managers, finance teams, and operations staff query and act on SAP S/4HANA data without SQL or BI dashboards. The NRF 2026 showcase positioned SAP, alongside Microsoft and Workday, as turning agentic AI into the operating system for enterprise operations.

Salesforce AgentForce, ServiceNow Now Assist, and Oracle HCM AI complete the top tier, each offering domain-specific workforce automation embedded in their respective platforms.

What Proprietary Platforms Do Best

- Fast deployment: Enterprise customers can activate workforce AI features in days, not months

- Turnkey compliance: Vendors handle data processing agreements, SOC 2/ISO certifications, and regional compliance

- Vendor support: Dedicated enterprise support teams, SLAs, and roadmap alignment

- Ecosystem integrations: Native connectors to hundreds of enterprise tools without custom development

Performance Is Now a Draw — What Actually Differentiates Them

The single most important shift in 2026 is that raw model performance is no longer a meaningful differentiator between open-source and proprietary AI workforce platforms.

The MMLU benchmark gap between leading open-source and proprietary models collapsed to 0.3 percentage points by the end of 2025. In many specialized benchmarks — coding, structured reasoning, document extraction — open-source models like DeepSeek V3.2 and Qwen 2.5 actually outperform proprietary alternatives on a cost-adjusted basis.

This means the real differentiators in 2026 are:

- Domain adaptation: which approach lets you more effectively fine-tune for your specific workflows?

- Total cost of ownership: not licensing alone, but infrastructure, talent, maintenance, and compliance costs

- Data control: where does your workforce data live, and who can access it?

- Integration depth: how natively does the platform connect to your existing tech stack?

- Governance and auditability: can you explain every AI decision when regulators ask?

- Engineering team capability: can your team build, maintain, and iterate on the platform?

IT Pro's analysis of Linux Foundation data confirms that 51% of firms using open-source AI record positive ROI versus 41% of those on proprietary models — but this gap is largely explained by the higher engineering maturity of organizations that successfully self-host open-source platforms.

Total Cost of Ownership: The Real Numbers

The open-source pricing myth is dangerous: while open-source carries zero licensing fees, the total cost of ownership often exceeds proprietary solutions in years one and two. This surprises many decision-makers who equate "free software" with "free platform."

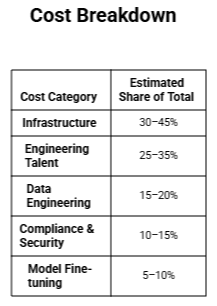

According to the SoftwareSeni TCO framework, 85% of organizations misestimate their AI project costs by more than 10%, and most underestimate by 30–40%. The reason: vendor pricing covers only licensing. The real bill also includes infrastructure, engineering talent, maintenance, and compliance.

Open-Source TCO Breakdown

Key insight: VentureBeat's 2025 TCO analysis found that organizations need to honestly assess their engineering capability for model fine-tuning, hosting, and maintenance before choosing an open-source path. Infrastructure costs alone represent 30–45% of total spend — a figure that shocks teams accustomed to SaaS subscription budgeting.

Proprietary TCO Breakdown

Proprietary platforms charge through licensing, per-seat fees, consumption-based API pricing, or enterprise contract bundles. While these fees are higher on a unit basis, they consolidate many hidden open-source costs:

- No dedicated MLOps engineers required for model hosting

- Compliance and security maintained by the vendor

- Integration costs lower due to native connectors

- Faster time-to-value reduces the "lost opportunity" cost of delayed deployment

The crossover point: Most analyses find that well-resourced engineering teams reach open-source TCO parity with proprietary platforms after 18–24 months of operation, once upfront development investments have been amortized. For organizations with weaker engineering capacity, the crossover may never arrive.
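The crossover arithmetic is easy to run against your own numbers. The sketch below assumes a deliberately simple model (one upfront cost plus a flat monthly run cost for each option), and every dollar figure in it is hypothetical, chosen only to illustrate how a crossover inside the 18–24 month window can arise and how it can fail to arrive.

```python
from typing import Optional


def cumulative_cost(upfront: int, monthly: int, months: int) -> int:
    # Simplified model: a one-time build or onboarding cost plus flat monthly spend.
    return upfront + monthly * months


def crossover_month(
    oss_upfront: int,
    oss_monthly: int,
    prop_upfront: int,
    prop_monthly: int,
    horizon_months: int = 60,
) -> Optional[int]:
    # First month in which cumulative open-source cost is no higher than
    # cumulative proprietary cost, or None if that never happens in the horizon.
    for month in range(1, horizon_months + 1):
        oss = cumulative_cost(oss_upfront, oss_monthly, month)
        prop = cumulative_cost(prop_upfront, prop_monthly, month)
        if oss <= prop:
            return month
    return None


# Hypothetical well-resourced team: heavy upfront build, lower run cost.
strong_team = crossover_month(
    oss_upfront=600_000, oss_monthly=40_000,
    prop_upfront=50_000, prop_monthly=65_000,
)

# Hypothetical team with weaker engineering capacity: run cost never drops
# below the proprietary subscription, so parity is never reached.
weak_team = crossover_month(
    oss_upfront=600_000, oss_monthly=70_000,
    prop_upfront=50_000, prop_monthly=65_000,
)

print(strong_team, weak_team)
```

With these illustrative inputs the first call returns month 22 and the second returns `None`. Real models would add step changes in infrastructure spend, staffing ramps, and contract renewals, but the comparison has the same shape.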

Security, Compliance, and Data Sovereignty in 2026

2026 is the year compliance complexity became a genuine platform selection driver. Two regulatory milestones in particular have reshaped how enterprises evaluate AI infrastructure:

The EU AI Act Takes Full Effect

The EU AI Act's high-risk AI system obligations apply from August 2, 2026, covering AI used in employment, recruitment, task allocation, monitoring, promotion, and termination decisions. Workforce platforms fall squarely in this category. Organizations deploying AI agents for HR tasks must maintain detailed technical documentation, conduct conformity assessments, and ensure human oversight mechanisms are in place.

Secureprivacy's 2026 compliance overview notes that this creates fundamentally different compliance burdens depending on your platform type:

- Proprietary platforms: Vendors typically provide compliance documentation, data processing agreements, and model cards — but organizations remain legally responsible for appropriate use

- Open-source platforms: Organizations assume full responsibility for compliance documentation, auditability, and technical safeguards — requiring dedicated legal and technical resources
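For teams that take on this responsibility themselves, the two recurring building blocks are a human-oversight gate and an audit trail. The following is an illustrative sketch only, not a conformity-assessment tool: the class names, field names, and status strings are hypothetical, and the set of gated actions simply mirrors the employment-related categories listed above.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List, Optional

# Assumption: these labels mirror the employment-related uses named in the text.
HIGH_RISK_ACTIONS = {
    "recruitment", "task_allocation", "monitoring", "promotion", "termination",
}


@dataclass(frozen=True)
class AuditRecord:
    agent: str
    action: str
    subject: str
    status: str
    reviewer: Optional[str]
    timestamp: str


class AuditedExecutor:
    """Records every agent action and holds high-risk ones for human sign-off."""

    def __init__(self) -> None:
        self.log: List[AuditRecord] = []

    def submit(self, agent: str, action: str, subject: str,
               approved_by: Optional[str] = None) -> AuditRecord:
        # High-risk actions only execute once a named human reviewer is attached.
        if action in HIGH_RISK_ACTIONS and approved_by is None:
            status = "pending_human_review"
        else:
            status = "executed"
        record = AuditRecord(
            agent=agent,
            action=action,
            subject=subject,
            status=status,
            reviewer=approved_by,
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        self.log.append(record)  # append-only: records are never edited in place
        return record

    def export(self) -> str:
        # Serialize the trail so it can be handed to auditors or archived.
        return json.dumps([asdict(record) for record in self.log], indent=2)


executor = AuditedExecutor()
held = executor.submit("screening-agent", "recruitment", "candidate-123")
done = executor.submit("screening-agent", "recruitment", "candidate-123",
                       approved_by="hr.lead")
print(held.status, done.status)
```

A production system would also need tamper-evident storage and retention policies; the point here is only that the gate and the log live in the same code path, so no action can bypass either.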

Data Sovereignty and Cross-Border Transfers

AI Magicx's 2026 data sovereignty analysis identifies a critical risk: cloud-based proprietary AI systems may now be legally non-compliant in markets with strict data localization requirements. At least 34 countries have enacted or strengthened data localization laws. Continuing legal challenges to the EU-U.S. Data Privacy Framework add further uncertainty about the long-term legal basis for transferring EU employee data to U.S.-based AI services.

This is a significant advantage for self-hosted open-source platforms: when data never leaves infrastructure you control in the required jurisdiction, cross-border transfer restrictions are far easier to satisfy.

Open-Source Security Risks

Open-source is not a security free lunch. The OpenSSF's cybersecurity report highlights that open-source AI introduces risks including unknown contributors, unvetted model weights, potential data poisoning, and adversarial attacks. The DeepSeek R1 controversy — which raised national security concerns following its January 2025 release — illustrates how geopolitically complex open-source AI provenance can become.

Microsoft's April 2026 Agent Governance Toolkit — an open-source runtime security framework for AI agents — represents the industry's response to these gaps, providing organizations with tools to audit agent behavior, enforce access controls, and maintain compliance trails.

When to Choose Open-Source

Open-source AI workforce platforms are the right choice when:

1. Data sovereignty is non-negotiable. Industries like healthcare, financial services, defense, and government often cannot send employee or operational data to third-party API endpoints. Open-source, self-hosted models ensure sensitive data stays on your infrastructure.

2. You have a capable ML/AI engineering team. Self-hosting, fine-tuning, and maintaining open-source models requires dedicated MLOps expertise. If you have this team, open-source gives you an unmatched customization ceiling.

3. Your use case is highly specialized. Generic proprietary models are optimized for general tasks. If your workforce platform needs to understand industry-specific terminology, proprietary processes, or niche document types, fine-tuning an open-source model on your internal data often outperforms any out-of-the-box proprietary solution.

4. You're optimizing for long-term cost at scale. For high-volume AI tasks — processing thousands of resumes, generating hundreds of daily reports, routing millions of customer interactions — self-hosted open-source models eliminate per-token API costs that compound rapidly at scale.

5. You want vendor independence. Proprietary platforms change pricing, deprecate APIs, and shift product strategy. Open-source gives you full control over your model layer, which sharply reduces your exposure to vendor lock-in.

When to Choose Proprietary

Proprietary AI workforce platforms are the right choice when:

1. Speed-to-value is the primary constraint. Enterprise proprietary platforms can be activated in days. Open-source stacks typically require weeks to months of infrastructure setup, model selection, and integration work before they deliver business value. For a structured approach to AI adoption regardless of which path you choose, see Ruh AI's complete AI implementation guide.

2. Your team has limited AI engineering capability. If you don't have MLOps engineers, AI infrastructure specialists, or model evaluation expertise on staff, proprietary platforms offload the complexity that would otherwise become a bottleneck.

3. You need deep integration with existing enterprise software. If your workforce platform needs to read from and write to Workday, SAP, Salesforce, or Microsoft 365 natively, proprietary platforms with purpose-built connectors save months of integration work.

4. Regulatory accountability matters. Proprietary vendors provide contractual commitments, compliance documentation, and shared responsibility models that are difficult to replicate with open-source stacks. For regulated industries, this paper trail has real legal value.

5. You're in the 500–5,000 employee range. Mid-size companies often find proprietary platforms more cost-effective because of lower talent requirements and faster time-to-value, despite higher per-use costs.

The Hybrid Model: Why Most Enterprises Will Choose Both

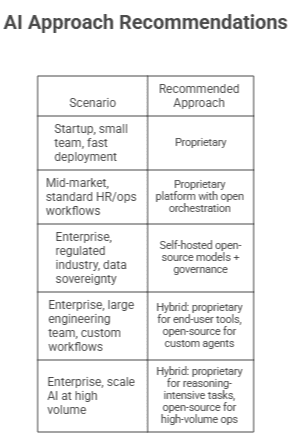

The most important insight of 2026 is that the open-source vs. proprietary choice is not binary. Leading enterprises increasingly deploy strategic hybrid architectures that use each approach where it has a genuine advantage.

A typical enterprise hybrid stack in 2026 looks like:

- Proprietary platform layer (e.g., Microsoft 365 Copilot, Workday AI) for end-user-facing workforce tools that benefit from deep native integration and zero infrastructure management

- Open-source orchestration (e.g., LangGraph, CrewAI) for custom agentic workflows that go beyond what proprietary platforms support out of the box

- Fine-tuned open-source models for domain-specific tasks — fed by internal data — deployed on private cloud or on-premise infrastructure for compliance-sensitive use cases

- Proprietary frontier models via API (GPT-4o, Claude 3.x, Gemini) for high-reasoning tasks where the absolute best performance justifies the cost
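The four layers above imply a routing decision for every task. Here is a minimal sketch of such a router, assuming three hypothetical task flags and an assumed precedence in which compliance sensitivity outranks everything else. The `Task` fields, `Tier` names, and example tasks are illustrative and do not describe any specific vendor's product.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    PROPRIETARY_PLATFORM = "proprietary platform layer"
    OPEN_SOURCE_ORCHESTRATION = "open-source orchestration"
    SELF_HOSTED_MODEL = "fine-tuned open-source model, self-hosted"
    FRONTIER_API = "proprietary frontier model via API"


@dataclass(frozen=True)
class Task:
    name: str
    compliance_sensitive: bool = False   # data must stay on private infrastructure
    covered_by_platform: bool = False    # a deployed suite handles this natively
    high_reasoning: bool = False         # top-tier reasoning justifies API cost


def route(task: Task) -> Tier:
    # Assumed precedence: data constraints first, then native coverage,
    # then reasoning demands; everything else becomes a custom workflow.
    if task.compliance_sensitive:
        return Tier.SELF_HOSTED_MODEL
    if task.covered_by_platform:
        return Tier.PROPRIETARY_PLATFORM
    if task.high_reasoning:
        return Tier.FRONTIER_API
    return Tier.OPEN_SOURCE_ORCHESTRATION


tasks = [
    Task("summarize meeting notes", covered_by_platform=True),
    Task("screen resumes with internal rubric", compliance_sensitive=True),
    Task("draft market-entry strategy", high_reasoning=True),
    Task("multi-step vendor onboarding workflow"),
]

for task in tasks:
    print(f"{task.name} -> {route(task).value}")
```

Putting the compliance check first means a sensitive task is never sent to an external API just because it also happens to need strong reasoning. Organizations with different risk priorities would reorder the checks.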

Forrester's 2026 enterprise software predictions confirm this pattern: vendors adopting the open Model Context Protocol (MCP) standard for AI agent collaboration have a significantly higher probability of early, enterprise-wide adoption, because MCP allows open-source and proprietary agents to interoperate seamlessly.

The World Economic Forum's January 2026 analysis frames this well: open-source enables experimentation and cost control, while proprietary systems ensure scalability, governance, and performance consistency. The enterprises that will outperform are those that build the operational intelligence to deploy the right model for the right task.

How Ruh AI Is Navigating the Open-Source vs. Proprietary Divide

At Ruh AI, we've built our entire content intelligence platform around the conviction that the future of AI-powered work is not a single platform or a single model — it's a coordinated system of specialized agents, each optimized for the task at hand.

Our approach reflects the hybrid model that leading enterprises are converging on in 2026:

For transparency and adaptability, Ruh AI's core workflows leverage open-source orchestration frameworks that allow us to route tasks to the most appropriate model — whether that's a fine-tuned domain model for SEO analysis, a frontier reasoning model for strategy generation, or a fast, low-cost model for high-volume content operations.

For enterprise-grade reliability, our platform integrates proprietary APIs and connectors where they deliver provable value — particularly for deep integration with enterprise content systems, analytics platforms, and publishing workflows.

For data control, we design every client workflow with data sovereignty as a first principle. Sensitive brand data, proprietary research, and competitive intelligence stay within agreed-upon infrastructure boundaries — not routed through third-party model providers without explicit client consent.

In practice, this looks like purpose-built AI agents that operate autonomously within defined parameters. Take Sarah, Ruh AI's AI SDR — a fully autonomous sales development agent that qualifies leads, personalizes outreach, and books meetings without requiring a human in every loop. Sarah is a direct expression of the hybrid architecture at work: proprietary integrations for CRM and calendar connectivity, open-source reasoning for personalization logic, and a governance layer that keeps every action auditable. Our broader AI SDR platform demonstrates how workforce AI can extend into revenue operations, not just content and productivity.

The Rapid Innovation case study illustrates what this looks like at scale: a growth-stage company using Ruh AI's hybrid agent stack to fully automate their marketing operations — delivering pipeline results that previously required a full-time team.

The result is a workforce AI platform that gives our clients the flexibility of open-source and the reliability of proprietary systems — without asking them to sacrifice one for the other.

As the open-source vs. proprietary debate continues to evolve, Ruh AI's architecture is designed to adapt: swapping models as performance benchmarks shift, adopting new compliance standards as regulations evolve, and expanding agent capabilities as the open-source ecosystem matures. Explore our full suite of AI workforce tools or browse the Ruh AI blog for deeper dives into the technology powering the next generation of enterprise AI.

Interested in seeing how Ruh AI's hybrid AI workforce approach could apply to your operations? Explore our platform to learn more.

The 2026 Decision Framework: A Practical Checklist

Use this checklist to guide your AI workforce platform decision. Score each factor based on your organization's situation.

Organizational Readiness Questions

Engineering Capability

- Do you have dedicated MLOps or AI infrastructure engineers on staff?

- Can your team evaluate model quality, monitor drift, and manage fine-tuning pipelines?

- Do you have existing DevOps infrastructure for GPU/compute provisioning?

If yes to 2+ → Open-source is viable. If no to 2+ → Lean proprietary.

Compliance and Data Requirements

- Do you operate in a regulated industry (healthcare, finance, government, defense)?

- Are you subject to EU AI Act high-risk AI obligations?

- Do you have data residency requirements that prohibit third-party API processing?

If yes to 2+ → Self-hosted open-source is likely the safest route to compliance. Proprietary vendors with regional data centers are an alternative worth evaluating.

Budget and Timeline

- Do you need AI workforce capabilities operational within 90 days?

- Is your team optimizing for long-term cost over a 3+ year horizon?

- Do you have budget for dedicated AI engineering staff?

Fast timeline + limited engineering → Proprietary. Long horizon + engineering capacity → Open-source. Both + scale → Hybrid.

Customization Requirements

- Does your workflow require domain-specific language, proprietary processes, or niche document understanding?

- Will off-the-shelf models perform adequately without fine-tuning?

- Do you need to embed proprietary competitive intelligence or internal knowledge?

High customization need → Open-source fine-tuning. Standard workflows → Proprietary.
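The checklist rules above can be encoded as a small scoring helper so that different teams apply them consistently. This is a sketch under stated assumptions: the function and key names are hypothetical, each question is reduced to a yes/no answer, and the final step (calling any mixed result "hybrid") is an assumed way of combining the four sections rather than a rule taken from the checklist itself.

```python
from typing import Dict, List, Optional


def count_yes(answers: List[bool]) -> int:
    return sum(1 for answer in answers if answer)


def assess(
    engineering: List[bool],      # three engineering-capability answers
    compliance: List[bool],       # three compliance and data answers
    need_within_90_days: bool,
    long_cost_horizon: bool,      # optimizing cost over a 3+ year horizon
    high_customization: bool,
) -> Dict[str, Optional[str]]:
    # "Yes to 2+" thresholds follow the checklist; None means no strong signal.
    eng_ready = count_yes(engineering) >= 2
    leanings: Dict[str, Optional[str]] = {
        "engineering": "open-source" if eng_ready else "proprietary",
        "compliance": "open-source" if count_yes(compliance) >= 2 else None,
        "timeline": None,
        "customization": "open-source" if high_customization else "proprietary",
    }
    if need_within_90_days and not eng_ready:
        leanings["timeline"] = "proprietary"
    elif long_cost_horizon and eng_ready:
        leanings["timeline"] = "open-source"
    return leanings


def overall(leanings: Dict[str, Optional[str]]) -> str:
    # Assumption: unanimous sections pick a side, any disagreement means hybrid.
    votes = {value for value in leanings.values() if value is not None}
    return votes.pop() if len(votes) == 1 else "hybrid"


regulated_bank = assess(
    engineering=[True, True, False],
    compliance=[True, False, True],
    need_within_90_days=False,
    long_cost_horizon=True,
    high_customization=True,
)
lean_retailer = assess(
    engineering=[False, False, True],
    compliance=[False, False, False],
    need_within_90_days=True,
    long_cost_horizon=False,
    high_customization=False,
)

print(overall(regulated_bank), overall(lean_retailer))
```

The per-section dictionary is worth keeping alongside the overall label, since a "hybrid" result is only actionable when you can see which sections pulled in which direction.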

The Decision Matrix

Building the AI Workforce That Lasts

The open-source vs. proprietary AI workforce platform debate in 2026 is ultimately a false binary. The organizations that will outperform are not those who pick a side — they're those who build the judgment to deploy the right approach for the right task.

Open-source delivers when your needs are specific, your data is sensitive, your engineering team is capable, and your time horizon is long. Proprietary delivers when your need is urgent, your workflows are standard, your team is lean, and your integration requirements demand vendor-native solutions. The hybrid model — increasingly, the norm among enterprises that have moved past first-generation AI deployments — delivers the best of both.

What matters most in 2026 is not which platform wins the benchmark war. It's whether your AI workforce infrastructure is flexible enough to adapt as the landscape shifts — and sophisticated enough to align AI decisions with the people, processes, and governance structures that make organizations actually work. If you're mapping out your organization's AI adoption path, start with Ruh AI's complete implementation guide for organizations using AI — a practical framework for making exactly these decisions.

The time to build that foundation is now.

Frequently Asked Questions

Q: Is open-source AI actually as good as proprietary AI in 2026?

A: For most workforce platform use cases, yes. The performance gap between leading open-source models (Llama 3.x, Mistral Large, DeepSeek V3.2) and proprietary models (GPT-4o, Claude 3.5, Gemini 1.5) has narrowed to less than 1 percentage point on standard benchmarks. The meaningful differences are no longer about raw intelligence — they're about integration, support, compliance tooling, and total cost of ownership.

Q: What is the total cost of ownership for open-source AI workforce platforms?

A: Significantly higher than most organizations expect. Infrastructure costs alone represent 30–45% of total spend, and engineering talent adds another 25–35%. Most organizations underestimate their AI project costs by 30–40%. The open-source cost advantage over proprietary typically materializes after 18–24 months of operation, once upfront development costs are amortized.

Q: Does the EU AI Act affect my choice of AI workforce platform?

A: Absolutely. The EU AI Act's high-risk AI obligations apply to AI used in employment decisions, and take full effect August 2, 2026. Both open-source and proprietary platforms must meet these requirements, but the compliance burden falls primarily on the deploying organization, not the platform vendor. Open-source deployments require organizations to produce their own compliance documentation and technical safeguards.

Q: Can I switch from proprietary to open-source (or vice versa) mid-journey?

A: Yes, but it requires careful architectural planning. Organizations that start with proprietary platforms and want to add open-source layers benefit from adopting orchestration standards like MCP (Model Context Protocol) from the start — these allow agents from different platforms to interoperate. Full migration from one approach to another typically requires 3–6 months of parallel operation.

Q: What are the biggest security risks with open-source AI workforce platforms?

A: The primary risks include unvetted model weights, supply chain vulnerabilities from unknown contributors, adversarial attacks, and the absence of a vendor security team monitoring threats. The OWASP Top 10 for Agentic Applications (published December 2025) provides the most comprehensive taxonomy of AI agent risks. Organizations should pair open-source deployments with the Microsoft Agent Governance Toolkit or equivalent runtime security tooling.

Q: How do I evaluate whether my team is ready for open-source AI infrastructure?

A: Assess three things: (1) Do you have engineers comfortable with GPU provisioning, container orchestration, and model serving frameworks like vLLM or Ollama? (2) Can your team evaluate model quality systematically, not just by gut feel? (3) Do you have a monitoring strategy for model drift and inference quality? If any of these are missing, a proprietary platform bridge — while you build these capabilities — is often the smarter path.

Q: Which companies use hybrid AI workforce approaches successfully?

A: Most major enterprise tech vendors follow a hybrid model themselves. NVIDIA's enterprise AI agent platform, adopted by Adobe, SAP, Salesforce, ServiceNow, and others, is built on open-source components with proprietary integration layers. Microsoft's Copilot now routes across multiple models (including open-source alternatives) depending on the task. This "model-agnostic" architecture is the emerging standard for sophisticated enterprise deployments.
