
TL;DR / Summary:

Most of the AI content online treats "AI Agents" and "Agentic AI" as synonyms. They're not. Using them interchangeably is like calling a screwdriver and a power drill "the same tool because they both turn screws." One is a single-purpose instrument. The other is an autonomous system that picks its own tools, builds the shelf, and orders replacement parts when stock runs low.

This distinction isn't just semantic. It determines what you build, how you govern it, what it costs you, and — critically — what it can actually do inside a real organization.

At Ruh AI, we've spent considerable time working at both ends of this spectrum — building focused AI agents that execute with precision, and architecting agentic systems that coordinate across entire sales and revenue workflows. That practical experience is what makes the line between these two paradigms so clear to us. And it's why we think most teams are either over-engineering simple problems or under-building complex ones.

This blog cuts through the noise. No vague definitions. No fluffy use cases. Just a precise, technically grounded breakdown of what these two things are, how they work, where they break, and when you should reach for one over the other — with a front-row seat to how Ruh AI applies both in the real world.

Ready to see how it all works? Here’s a breakdown of the key elements:

- Meet the Specialist: What an AI Agent Is and Why It Works the Way It Does

- Meet the Strategist: What Agentic AI Is and Why It's a Different Animal Entirely

- Under the Hood: Where AI Agents and Agentic AI Architecturally Part Ways

- Same Problem, Two Worlds: What Each One Actually Does When You Put Them to Work

- The Upside of Keeping It Simple: Why AI Agents Are Underrated

- Where AI Agents Hit a Wall: The Limitations That Matter in Production

- The Case for Agentic AI: What You Unlock When You Hand Over Strategic Control

- The Real Cost of Agentic AI: Complexity, Risk, and Governance Overhead

- You Don't Need a Hammer When a Scalpel Will Do: When to Choose an AI Agent

- When the Whole Orchestra Is the Point: When to Choose Agentic AI

- How Ruh AI Sits at the Intersection — and Why That Matters

- The Part Nobody Wants to Talk About: Governance, Risk, and What Happens When It Goes Wrong

- The Honest Takeaway: Neither Is Better — One Is Right for Your Problem

- FAQ

1. Meet the Specialist: What an AI Agent Is and Why It Works the Way It Does

Strip away the marketing language and an AI Agent is this: a single autonomous software unit wired to execute one well-defined task. It perceives an input, processes it through an LLM, and produces an output or triggers an action. That's the loop. It doesn't extend beyond it.

If you want to understand the full taxonomy before going deeper, Ruh AI's breakdown of seven types of AI agents is worth reading first — it maps out exactly how different agent architectures differ in capability, autonomy, and memory before you ever pick a framework.

The Internal Anatomy

INPUT TRIGGER
      │
      ▼
┌─────────────────────────────┐
│          AI AGENT           │
│ [Perception Unit]           │ ← Reads input (text, data, event)
│ [LLM Brain - One Model]     │ ← GPT-4, Claude, Gemini, etc.
│ [Defined Tool Set / APIs]   │ ← CRM, Calendar, Database, etc.
│ [Output / Action Unit]      │ ← Response, API call, file, alert
└─────────────────────────────┘
      │
      ▼
TASK COMPLETE (session ends)

Three critical constraints define every AI agent:

One LLM. One brain. One job. There's no reasoning across multiple contexts. As IBM Research notes, LLMs powering agents are rapidly improving at analyzing a task and formulating a plan — but within the scope they're given.

Defined tools only. The agent can only use the tools it was explicitly connected to at build time. OpenAI's practical guide to building agents underscores this: an LLM agent functions like a seasoned investigator evaluating context — but only within its predefined toolkit. For a full roundup of what tooling is available in 2026, Ruh AI's list of top 10 AI agent tools covers the landscape comprehensively.

Limited, session-based memory. When the session ends, most AI agents start fresh. They don't carry forward lessons, preferences, or context from previous interactions — a limitation that becomes a real architectural problem at scale, as we'll cover in the memory section below.
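The three constraints above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: `fake_llm`, `SingleTaskAgent`, and the `lookup_order` tool are hypothetical names invented for the example, and the "model" is a keyword check standing in for a real LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call (hypothetical): picks a tool by keyword."""
    return "lookup_order" if "order" in prompt.lower() else "fallback"


@dataclass
class SingleTaskAgent:
    # The tool set is fixed at build time; nothing is added at run time.
    tools: Dict[str, Callable[[str], str]]
    llm: Callable[[str], str] = fake_llm
    session_memory: List[str] = field(default_factory=list)

    def handle(self, trigger: str) -> str:
        self.session_memory.append(trigger)   # perception: read the input
        tool_name = self.llm(trigger)         # one model picks the tool
        tool = self.tools.get(tool_name)
        if tool is None:                      # request is outside the toolkit
            return "out_of_scope"
        return tool(trigger)                  # output / action

    def end_session(self) -> None:
        self.session_memory.clear()           # nothing carries over


agent = SingleTaskAgent(tools={"lookup_order": lambda text: "order status: shipped"})
print(agent.handle("Where is my order?"))
print(agent.handle("Rewrite our pricing strategy"))
agent.end_session()
```

The second request falls outside the toolkit, so the agent returns `out_of_scope` rather than improvising, and `end_session` wipes whatever it saw.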

What "Reactive" Actually Means

An AI agent does not think ahead. It waits for a trigger — a user message, a system event, a scheduled cron job — and then it responds. According to Gartner's 2025 AI Hype Cycle, AI agents are now at the Peak of Inflated Expectations — underscoring both their rising relevance and the need to understand their real boundaries.

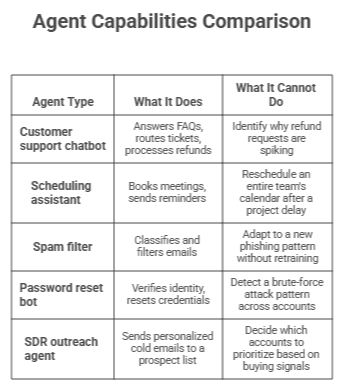

Real AI Agent Examples

2. Meet the Strategist: What Agentic AI Is and Why It's a Different Animal Entirely

Agentic AI is not a bigger, smarter AI agent. It's an entirely different class of system. Where an AI agent is a task-executor, Agentic AI is a goal-achiever — a system that can receive a high-level objective, autonomously figure out how to reach it, coordinate multiple agents and tools to get there, and adapt when things go sideways.

As MIT Sloan Management Review explains, this emerging class of AI integrates with other software systems to complete tasks independently or with minimal human supervision — and represents a fundamental shift from reactive chatbots to proactive actors.

This is the terrain Ruh AI operates in. Rather than deploying a chatbot that waits for a sales rep to ask a question, Ruh AI's approach to agentic sales intelligence means the system is continuously perceiving prospect signals, reasoning about the best next action, and orchestrating outreach — all without a human needing to initiate every step.

The Four-Step Loop That Powers Everything

┌──────────────────────────────────────────────┐
│   PERCEIVE → REASON → ACT → LEARN            │
│       ↑                        │             │
│       └────────────────────────┘             │
│            (continuous loop)                 │
└──────────────────────────────────────────────┘

Perceive: Gather live data — user inputs, databases, APIs, monitoring feeds, web searches. The system actively reads its environment.

Reason: The orchestration layer processes this data, weighs options, and builds an action plan.

Act: Execute the planned steps — writing emails, querying databases, triggering workflows, calling APIs.

Learn: Store the outcome in persistent memory and use it to refine future decisions. This is where Ruh AI's approach to AI agent memory systems becomes critical — without robust memory architecture, an agentic system can't improve across sessions.
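The four steps above can be sketched as a loop. This is a toy version under stated assumptions: the event feed, the 5% reply-rate threshold, and the action names are all made up for illustration, and a plain dictionary stands in for persistent memory.

```python
from typing import Dict, List


def perceive(feed: List[Dict]) -> Dict:
    """Aggregate raw events into a single observation."""
    sent = sum(1 for e in feed if e["type"] == "sent")
    replies = sum(1 for e in feed if e["type"] == "reply")
    return {"sent": sent, "reply_rate": replies / sent if sent else 0.0}


def reason(observation: Dict, memory: Dict) -> str:
    """Pick the next action from the observation and remembered outcomes."""
    if observation["reply_rate"] < 0.05:  # illustrative threshold
        # Reuse an angle memory says worked before, else try a new one.
        return memory.get("best_angle", "try_new_angle")
    return "keep_current_sequence"


def act(plan: str) -> Dict:
    """Pretend to execute the plan and report what happened."""
    return {"plan": plan, "status": "executed"}


def learn(memory: Dict, outcome: Dict) -> None:
    """Persist the outcome so later iterations can use it."""
    memory.setdefault("history", []).append(outcome)


memory: Dict = {}
feed = [{"type": "sent"}] * 40 + [{"type": "reply"}]
for _ in range(2):  # two passes through the loop
    observation = perceive(feed)
    outcome = act(reason(observation, memory))
    learn(memory, outcome)
```

The point of the sketch is the shape: `learn` writes to the same `memory` that `reason` reads on the next pass, which is what closes the loop.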

The Orchestration Layer: The Actual Brain

IBM's breakdown of agentic architecture describes it as a "conductor" model that oversees tasks, decisions, and supervises other, simpler agents. Without the orchestration layer, you don't have Agentic AI — you have a group of AI agents that happen to exist in the same system.

For a practitioner's breakdown of how orchestration actually works in production, Ruh AI's AI agent orchestration guide covers the coordination patterns, task routing logic, and failure-handling mechanisms that separate real agentic systems from loosely-coupled agent chains.

Microsoft's Azure Architecture Center offers additional detail on how multi-agent orchestration patterns work at the infrastructure level.
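To make the "conductor" idea concrete, here is a minimal sketch of an orchestration layer routing a goal through specialist agents. Everything in it is hypothetical: the three agent functions, the `Orchestrator` class, and the hard-coded plan table, which stands in for whatever planner a real system would use.

```python
from typing import Callable, Dict, List


# Hypothetical specialists: each one does a single job on a shared context.
def research_agent(ctx: Dict) -> Dict:
    return {**ctx, "signals": ["recent funding round"]}


def messaging_agent(ctx: Dict) -> Dict:
    return {**ctx, "draft": f"Noticed your {ctx['signals'][0]}"}


def outreach_agent(ctx: Dict) -> Dict:
    return {**ctx, "sent": True}


class Orchestrator:
    def __init__(self, agents: Dict[str, Callable[[Dict], Dict]]):
        self.agents = agents
        self.trace: List[str] = []  # which agent ran, in order

    def plan(self, goal: str) -> List[str]:
        # Placeholder for real planning: a lookup table of goal -> steps.
        plans = {"re_engage_lead": ["research", "messaging", "outreach"]}
        return plans.get(goal, [])

    def run(self, goal: str, ctx: Dict) -> Dict:
        for step in self.plan(goal):
            ctx = self.agents[step](ctx)  # pass context from agent to agent
            self.trace.append(step)
        return ctx


orchestrator = Orchestrator({
    "research": research_agent,
    "messaging": messaging_agent,
    "outreach": outreach_agent,
})
result = orchestrator.run("re_engage_lead", {"lead": "acme"})
```

Remove the `Orchestrator` and the three functions still exist, but nothing decides their order or carries context between them, which is the distinction the section draws.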

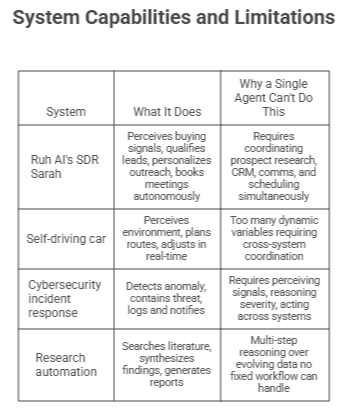

Real Agentic AI Examples

3. Under the Hood: Where AI Agents and Agentic AI Architecturally Part Ways

The differences aren't about scale — they're architectural. According to Microsoft's guidance on single vs. multi-agent architectures, software systems often start as single-agent and naturally evolve — though that evolution carries real tradeoffs in scalability, complexity, and adaptability.

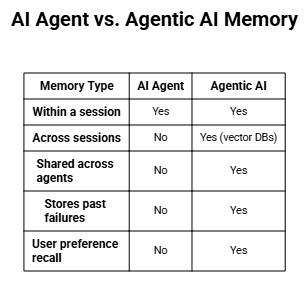

Memory: The Biggest Practical Differentiator

Memory is where most enterprise AI deployments quietly fail. An agent that can't remember what it learned yesterday will keep making the same mistakes — and in a sales or customer-facing context, that compounds fast. Ruh AI's deep-dive on AI agent memory systems walks through exactly how episodic, semantic, and procedural memory layers are built and maintained in a production agentic system.

Agentic AI typically uses vector databases as its long-term memory store. Pinecone's guide on RAG is the clearest explainer of how this works — past outcomes are stored as high-dimensional vectors and retrieved semantically, not just by keyword.

Popular options include Pinecone for fully managed cloud RAG, Weaviate for hybrid semantic search, Milvus for large-scale distributed workloads, and FAISS for raw ANN performance. For detailed comparisons: AI Multiple's RAG vector DB guide, Edlitera's Pinecone vs Weaviate vs Qdrant breakdown, and ServicesGround's 2025 agentic AI memory analysis.
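The retrieval idea behind all of these stores can be shown without any of them. The sketch below is an assumption-heavy toy: the `VectorMemory` class is hypothetical, the three-dimensional vectors are hand-written stand-ins for real embeddings, and a linear scan replaces the approximate nearest-neighbor index a real vector database would use.

```python
import math
from typing import List, Tuple


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class VectorMemory:
    def __init__(self) -> None:
        self.items: List[Tuple[List[float], str]] = []

    def store(self, vector: List[float], note: str) -> None:
        self.items.append((vector, note))

    def recall(self, query: List[float], k: int = 1) -> List[str]:
        # Rank stored notes by similarity to the query, most similar first.
        ranked = sorted(self.items, key=lambda item: cosine(query, item[0]), reverse=True)
        return [note for _, note in ranked[:k]]


memory = VectorMemory()
# Toy "embeddings"; a real system would get these from an embedding model.
memory.store([0.9, 0.1, 0.0], "VP Sales persona responded to pipeline ROI framing")
memory.store([0.0, 0.2, 0.9], "Founder persona responded to product vision framing")

closest = memory.recall([0.8, 0.2, 0.1])
```

The query vector shares no keywords with either note; it is matched purely by direction in the vector space, which is what "retrieved semantically" means here.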

The Full Architecture Comparison

The conductor analogy holds: a traditional AI agent is a musician — skilled at one instrument, plays when signaled. Agentic AI is the full orchestra management system — coordinating musicians, reading the room, adjusting the tempo, and filing the performance report afterward. Ruh AI's orchestration guide shows exactly what this looks like in a revenue-context deployment — where the "musicians" are specialized agents handling research, outreach, qualification, and scheduling, and the orchestration layer decides who plays when.

IBM's LLM Agent Orchestration tutorial breaks down exactly how profile, memory, planning, and action work as coordinated components at the framework level.

4. Same Problem, Two Worlds: What Each One Actually Does When You Put Them to Work

Let's walk through a real scenario — one that mirrors exactly the kind of problem Ruh AI was built to solve: a sales team that needs to work a list of 500 inbound leads.

AI Agent Response

SDR AI Agent:

- Receives lead list from CRM trigger

- Pulls contact info for lead #1

- Generates personalized cold email using template

- Sends email via connected email tool

- Logs "sent" in CRM

- Moves to lead #2

- Repeats until list is exhausted

- Session ends.

Fast. Consistent. Cheap at scale. Completely appropriate for high-volume outreach execution.

But what happens when 60% of those leads don't respond after two touches?

Agentic AI Response (How Ruh AI's SDR Sarah Works)

Ruh AI Agentic Orchestration Layer:

1. PERCEIVE:

→ Monitors email opens, reply rates, LinkedIn activity

→ Detects: "Lead segment A (Series B SaaS) has 34% open rate but 0% reply rate after 2 emails"

2. REASON:

→ Queries CRM enrichment: these leads have a VP of Sales as the decision-maker, not the founder

→ Identifies messaging mismatch: emails are founder-framed

→ Builds revised strategy:

(a) Switch messaging angle to pipeline efficiency ROI

(b) Add LinkedIn touchpoint before next email

(c) Deprioritize leads with <30 days company tenure

3. ACT (routes to specialized agents):

→ Research Agent: Pulls fresh LinkedIn & news signals for top 50 unresponsive leads

→ Messaging Agent: Rewrites sequences with new angle

→ Outreach Agent: Executes revised sequence on schedule

→ Qualification Agent: Scores leads by updated ICP fit

4. LEARN:

→ Stores: "VP Sales persona → pipeline ROI frame = higher reply rate"

→ Updates persona-to-messaging mapping in memory

→ Feeds result into future sequence decisions

The AI agent executed perfectly on the task it was given. Ruh AI's SDR Sarah didn't just execute: she perceived what wasn't working, reasoned about why, adapted the strategy, re-coordinated, and learned from the outcome, all without a single human touching the workflow mid-flight.
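The PERCEIVE step in that trace, spotting a segment that opens emails but never replies, is the part a plain outreach agent has no equivalent for. A rough sketch of that check follows; the event format, the `flag_stalled_segments` function, and the thresholds are assumptions made for the example, not Ruh AI's actual logic.

```python
from collections import defaultdict
from typing import Dict, List


def flag_stalled_segments(
    events: List[Dict], min_open_rate: float = 0.3, min_sent: int = 2
) -> List[str]:
    """Return segments whose emails get opened but never answered."""
    stats = defaultdict(lambda: {"sent": 0, "opened": 0, "replied": 0})
    for event in events:
        segment_stats = stats[event["segment"]]
        segment_stats["sent"] += 1
        segment_stats["opened"] += int(event["opened"])
        segment_stats["replied"] += int(event["replied"])

    flagged = []
    for segment, s in stats.items():
        open_rate = s["opened"] / s["sent"]
        # Opens without replies suggest the message reaches people but misses.
        if s["sent"] >= min_sent and open_rate >= min_open_rate and s["replied"] == 0:
            flagged.append(segment)
    return sorted(flagged)


events = [
    {"segment": "series_b_saas", "opened": True, "replied": False},
    {"segment": "series_b_saas", "opened": False, "replied": False},
    {"segment": "series_b_saas", "opened": False, "replied": False},
    {"segment": "seed_fintech", "opened": True, "replied": True},
    {"segment": "seed_fintech", "opened": False, "replied": False},
]
stalled = flag_stalled_segments(events)
```

Here only `series_b_saas` is flagged: a third of its emails were opened and none were answered, so the orchestration layer has something to reason about.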

This is the core insight McKinsey highlights: AI agents are "reactive and isolated." Agentic systems operate as "autonomous, goal-driven" — a fundamentally different mode of operation.

5. The Upside of Keeping It Simple: Why AI Agents Are Underrated

In the rush to deploy "agentic" everything, AI agents often get dismissed as unsophisticated. That's a mistake. Ruh AI uses focused, single-purpose agents as execution layers within its agentic architecture — and they're excellent at what they do.

They're fast and purpose-built. An AI agent optimized for one task will usually outperform a generalist system on that task. As OpenAI's agent guide notes: if you can write a function to handle the task, do that; and if an agent is the right call, keep it focused. Ruh AI's top 10 AI agent tools breakdown shows exactly which focused tools excel for specific execution tasks.

They're cheap to deploy at scale. Thousands of outreach sequences, qualification checks, or data enrichment calls at a fraction of human labor cost — consistent quality, 24/7 availability.

They're predictable and auditable. NIST's AI Risk Management Framework emphasizes auditability as a foundation of trustworthy AI. Agents make that significantly easier than agentic systems — which is why regulated industries often start here.

They integrate easily. Single-purpose, composable agent units you can swap without touching the rest of your stack. Microsoft Agent Framework is built around this principle.

Low implementation risk. Shorter build cycles, simpler infrastructure, easier rollback. A bad AI agent is easy to fix. A bad agentic system is a different kind of headache entirely.

6. Where AI Agents Hit a Wall: The Limitations That Matter in Production

Scope blindness. An AI agent handles what you put in front of it — and nothing else. Gartner warns that even single-agent deployments suffer from brittleness in unfamiliar scenarios. In a sales context, a scope-blind agent will keep sending the same dead sequence to unresponsive leads — burning your domain and your pipeline.

Fragmentation risk at scale. Deploy five AI agents across SDR outreach, lead scoring, CRM enrichment, follow-up sequencing, and meeting booking without an orchestration layer and you get five agents pulling in different directions — inconsistent data, duplicate touches, missed handoffs. McKinsey calls this "agent sprawl".

Memory amnesia. Every session starts from scratch. If your outreach agent doesn't know that a prospect already spoke to a colleague last month, it's going to make that call awkward. This is precisely why Ruh AI treats AI agent memory systems as a first-class architectural concern — not an afterthought. RAG-powered vector memory is what closes this gap.

Brittle edge-case handling. When inputs fall outside predefined parameters, most agents fail or hallucinate. NIST's AI 600-1 identifies this brittleness as a core technical risk in AI deployments.

Reactive, never proactive. You get what you ask for. The agent will never tell you that your messaging is off, that a competitor just released a feature, or that a lead went dark because they switched jobs. You have to already know to ask.

7. The Case for Agentic AI: What You Unlock When You Hand Over Strategic Control

True end-to-end automation. McKinsey estimates that generative AI use cases could unlock $2.6 to $4.4 trillion in value annually, and it positions agentic systems as a way to capture that value. In the sales and revenue context, that means a full pipeline, from ICP identification to booked meeting, running autonomously.

Proactive problem identification. MIT Sloan's research confirms that the shift from reactive to proactive AI is one of the defining traits of the agentic paradigm. Ruh AI's SDR Sarah doesn't wait for a sales rep to notice a drop in reply rates — she identifies it, adapts her strategy, and re-engages with a better approach before the problem compounds.

Persistent learning without retraining. Pinecone's RAG documentation describes vector memory as an external "long-term memory" layer. In Ruh AI's implementation, this means Sarah gets smarter about which messaging angles work for which buyer personas — across every sequence she runs, indefinitely. Ruh AI's deep-dive on AI agent memory systems explains the architecture behind this kind of cross-session learning.

Dynamic adaptation in real-time. A prospect went cold because their company entered a hiring freeze? An agentic system detects this from signal monitoring, pauses that account, and re-routes effort to warmer opportunities. IBM's agentic architecture explainer frames this as the core capability of the Perceive-Reason-Act-Learn loop.

Scales with complexity. Microsoft's multi-agent architecture guidance covers how orchestration patterns allow horizontal scaling. For Ruh AI, this means the same agentic architecture that works for a 50-person sales team scales to an enterprise SDR org without rebuilding from scratch — just adding specialized agents and updating routing logic.

Cross-silo coordination. One system. Full pipeline picture — from first touch to closed deal.

8. The Real Cost of Agentic AI: Complexity, Risk, and Governance Overhead

Implementation is genuinely complex. McKinsey's security playbook is clear: organizations must upgrade AI policies, IAM, and third-party risk management frameworks before they deploy — not after. This is a significant reason why most companies struggle to go from agentic prototype to production-grade system.

Hallucinations that cascade. Gartner confirms that multi-agentic workflows create compounded hallucination risk. A plausible-but-wrong qualification decision by one agent can cause incorrect outreach by the next agent down the chain — and by the time a human reviews it, you've sent three off-target emails to a key account.
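A quick back-of-the-envelope calculation shows why chained errors compound. It rests on a simplifying assumption that is ours, not Gartner's: every agent in the chain is correct with the same probability, and errors are independent and never caught downstream.

```python
def chain_reliability(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step in a chain is correct, assuming independence."""
    return per_step_accuracy ** steps


for steps in (1, 3, 5):
    print(steps, round(chain_reliability(0.95, steps), 3))
# prints:
# 1 0.95
# 3 0.857
# 5 0.774
```

Under those assumptions, five agents that are each 95% accurate produce a fully correct result only about 77% of the time, which is the argument for validation between steps rather than only at the end.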

Infinite loops are a real threat. Without proper safeguards, agents enter failure cycles. Microsoft's orchestration documentation covers infinite loop detection as a built-in architectural requirement. Ruh AI's orchestration guide addresses this specifically — including how to build circuit breakers into multi-agent pipelines.
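One common safeguard is a circuit breaker that stops calling a failing step after a fixed number of consecutive failures. The sketch below is a generic version of that pattern, not the mechanism described in Microsoft's documentation or Ruh AI's guide; `CircuitBreaker`, `CircuitOpen`, and `flaky_enrichment` are hypothetical names.

```python
class CircuitOpen(Exception):
    """Raised when the breaker refuses to run the step again."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3) -> None:
        self.max_failures = max_failures
        self.failures = 0  # consecutive failures seen so far

    def call(self, step, *args):
        if self.failures >= self.max_failures:
            raise CircuitOpen("too many consecutive failures; escalate to a human")
        try:
            result = step(*args)
        except Exception:
            self.failures += 1
            raise
        self.failures = 0  # any success resets the count
        return result


def flaky_enrichment(lead_id: str) -> dict:
    """Hypothetical tool that always times out."""
    raise TimeoutError("enrichment API timed out")


breaker = CircuitBreaker(max_failures=3)
attempts = 0
while True:  # a naive retry loop that would otherwise never end
    attempts += 1
    try:
        breaker.call(flaky_enrichment, "lead-1")
    except CircuitOpen:
        break       # breaker tripped: stop retrying
    except TimeoutError:
        continue    # naive retry
```

The retry loop itself has no exit condition; the breaker supplies one by raising `CircuitOpen` on the fourth attempt, after three real failures.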

The attack surface is wider. McKinsey's security analysis flags "chained vulnerabilities" as a new risk class: a flaw in one agent cascades to others. In a sales context, this could mean a compromised enrichment agent feeding bad data into every downstream decision.

Accountability is murky. MIT's AI Risk Repository identifies autonomous AI accountability as an unresolved governance question. Organizations deploying agentic systems need clear ownership models for what happens when the system makes a wrong call.

Governance overhead is non-negotiable. NIST's AI Risk Management Framework and NIST AI 600-1 are voluntary guidance, but for a production agentic system the controls they point toward are not optional in practice: human-in-the-loop (HITL) checkpoints, role-based access control (RBAC), audit logs, and adaptive compliance. If you're exploring what a governed agentic deployment looks like for your team, Ruh AI's contact page is a good starting point; this is exactly the kind of architecture conversation we have with enterprise buyers.

9. You Don't Need a Hammer When a Scalpel Will Do: When to Choose an AI Agent

Use an AI Agent when all of the following are true:

The task is clearly scoped and repeatable. If you can write a 10-step runbook for how a human does it, you can build an agent for it. OpenAI's building guide is direct: prioritize workflows that have previously resisted automation due to friction with traditional methods. Ruh AI's seven types of AI agents includes a useful taxonomy for matching agent type to task complexity.

The input/output relationship is consistent. Agents thrive on predictable mappings. Judgment across wildly different scenarios is asking too much of a narrowly scoped, single-purpose agent.

Volume and speed are the primary value drivers. If the business case is "we need to execute 10,000 outreach touches without adding headcount," an AI agent is the right tool.

The scope lives within one system or domain. Microsoft's single vs. multi-agent guidance is the right reference for making that architectural call.

You need fast deployment and fast ROI. AI agents can be built and deployed in days to weeks.

Predictability and auditability are critical. NIST's AI RMF provides the governance scaffolding that makes agents viable in regulated environments.

Best Fit Industries for AI Agents

- Sales execution: High-volume outreach, CRM data enrichment, follow-up sequencing

- Customer service: Tier-1 support, FAQ handling, complaint routing

- IT helpdesks: Password resets, access requests, software provisioning

- E-commerce: Order tracking, return processing, shipping notifications

- Financial services: Balance inquiries, fraud alerts, payment confirmations

10. When the Whole Orchestra Is the Point: When to Choose Agentic AI

Use Agentic AI when the following are true:

The goal is broad and multi-step. IBM's agentic architecture explainer outlines the planning module as essential for goals that require dynamic — not predetermined — execution paths. "Book 20 qualified meetings this month" is a goal. "Send email #4" is a task.

The system needs to be proactive, not reactive. MIT Sloan calls this the defining characteristic of the agentic era. Ruh AI's AI SDR doesn't wait to be told when to follow up — it monitors the conditions and initiates the right action at the right moment.

Cross-system coordination is required. When a workflow touches your CRM, email platform, LinkedIn, prospect database, and meeting scheduler — simultaneously — no single agent can manage that. The orchestration layer is the only viable solution. Ruh AI's orchestration guide breaks down how to design these coordination layers without them becoming a maintenance liability.

Real-time adaptation is critical. Prospect signals change. Competitors launch. Buying windows open and close. McKinsey notes that function-specific agentic deployments — exactly the kind Ruh AI builds for revenue teams — are where the highest ROI is being realized.

Long-term memory and continuity drive value. Persistent memory means the system gets smarter with every sequence it runs. Pinecone's RAG documentation and Ruh AI's AI agent memory systems breakdown explain how this architecture is built.

The ROI justifies the investment. A fully autonomous SDR pipeline that runs 24/7 without headcount, learns from every interaction, and adapts strategy in real-time justifies the agentic architecture. A simple follow-up email sequence does not.

Best Fit Industries for Agentic AI

- Sales & Revenue: Autonomous SDR pipelines, multi-touch outreach orchestration, pipeline management

- Enterprise operations: End-to-end process automation, employee lifecycle management

- Cybersecurity: Autonomous threat detection, triage, containment, incident reporting

- Healthcare: Patient journey coordination across clinical, administrative, insurance systems

- Supply chain: Autonomous demand forecasting, supplier switching, logistics rerouting

- Research & Development: Literature synthesis, hypothesis generation, experimental design

Choosing Your Agentic AI Framework

11. How Ruh AI Sits at the Intersection — and Why That Matters

Most AI companies sell you one thing and call it everything. A chatbot becomes an "AI agent." A prompt wrapper becomes "agentic AI." Ruh AI operates differently — because the team building it has lived through the distinction.

Here's what Ruh AI's approach actually looks like in practice:

The Execution Layer: Focused AI Agents Doing Specific Jobs

Within Ruh AI's architecture, purpose-built AI agents handle discrete, high-volume tasks with precision:

- A research agent that pulls and synthesizes prospect signals from LinkedIn, news, CRM history, and intent data

- A messaging agent that writes personalized outreach sequences calibrated to persona, industry, and stage

- A scheduling agent that manages meeting booking, calendar coordination, and reminder logic

- A qualification agent that scores inbound leads against ICP criteria and routes them accordingly

Each of these agents is fast, reliable, and auditable. Each does one job exceptionally well. You can see the full capabilities of these individual components by exploring Ruh AI's AI SDR page.

The Intelligence Layer: Agentic Orchestration That Connects It All

What makes Ruh AI's SDR Sarah different from a collection of AI tools is the orchestration layer sitting above those agents. This is where the agentic behavior lives:

- Perceiving prospect signals continuously — opens, clicks, LinkedIn activity, company news, funding events

- Reasoning about which accounts to prioritize, which messaging angles to run, which sequences to pause

- Coordinating the research, messaging, outreach, and scheduling agents in the right sequence with the right context

- Learning from every interaction — what messaging worked for which persona, which sequences convert, which accounts are worth doubling down on

This is precisely the architecture Ruh AI describes in its AI agent orchestration guide — not as theory, but as the actual system powering Sarah.

The Memory Layer: What Makes It Get Smarter Over Time

The piece that most agentic deployments miss is memory. Ruh AI's deep-dive on AI agent memory systems explains how the system maintains:

- Episodic memory — what happened with this specific prospect, on which touchpoints, with what outcome

- Semantic memory — what generally works for this persona type, industry vertical, or deal size

- Procedural memory — which sequence patterns and timing cadences drive the best conversion rates

Together, these memory types mean Sarah doesn't just execute — she evolves. Every sequence she runs makes the next one smarter.
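As a rough picture of how the three memory types could sit side by side, here is a small data-structure sketch. It is an illustration under our own assumptions, not Ruh AI's implementation: the `AgentMemory` class, its field layout, and the sample values are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class AgentMemory:
    # Episodic: what happened with each specific prospect.
    episodic: Dict[str, List[str]] = field(default_factory=dict)
    # Semantic: what generally works for a persona type.
    semantic: Dict[str, str] = field(default_factory=dict)
    # Procedural: which cadence (days between touches) to follow per persona.
    procedural: Dict[str, List[int]] = field(default_factory=dict)

    def record_touch(self, prospect: str, event: str) -> None:
        self.episodic.setdefault(prospect, []).append(event)

    def brief(self, prospect: str, persona: str) -> Dict:
        """Combine all three memory types into context for the next action."""
        return {
            "history": self.episodic.get(prospect, []),
            "angle": self.semantic.get(persona, "no known angle"),
            "cadence_days": self.procedural.get(persona, []),
        }


memory = AgentMemory()
memory.record_touch("dana@example.com", "email 1 opened, no reply")
memory.semantic["vp_sales"] = "pipeline ROI framing"
memory.procedural["vp_sales"] = [0, 3, 7]

context = memory.brief("dana@example.com", "vp_sales")
```

`brief` is where the layers pay off: the next message is written with this prospect's history, the persona's best-known angle, and a proven cadence in hand.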

The Result: Autonomous Sales Development That Actually Works

The outcome isn't a tool you have to manage. It's a function that runs. Revenue teams using Ruh AI don't think about prompting or tuning individual agents — they define ICP and goals, and the agentic system figures out the rest.

If this is the kind of system you're trying to build or evaluate for your own sales motion, Ruh AI's team is available to walk you through it. The architecture questions are often the most useful ones to work through before you commit to an approach.

12. The Part Nobody Wants to Talk About: Governance, Risk, and What Happens When It Goes Wrong

Autonomy and risk scale together. McKinsey's security analysis is unambiguous: strong governance is non-negotiable. MIT Sloan research is equally direct — a rogue AI agent making a wrong decision in a high-stakes context can cause significant damage.

The Three Layers of Governance

Model Layer: NIST's AI 600-1 framework provides structured guidance on governing generative AI outputs — from content filtering to output validation and incident disclosure.

Orchestration Layer: Implement infinite loop detection and role-based access control (RBAC). Microsoft's orchestration pattern documentation and Ruh AI's orchestration guide both cover how to build these safeguards into multi-agent pipelines without sacrificing throughput.

Tool Layer: Every tool the agent can call is a potential vector for damage. McKinsey's playbook recommends augmenting IAM with input/output guardrails to prevent adversarial prompt manipulation.
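At the tool layer, the simplest form of RBAC is a permission check in front of every tool call. The sketch below assumes a static role-to-permission table; the role names, tool names, and `call_tool` helper are invented for illustration and do not come from McKinsey's playbook or any specific framework.

```python
from typing import Callable, Dict, Set

# Hypothetical role table: which tools each agent role may call.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "research_agent": {"read_crm", "web_search"},
    "outreach_agent": {"read_crm", "send_email"},
}


class PermissionDenied(Exception):
    """Raised when an agent calls a tool outside its role."""


def call_tool(
    agent_role: str,
    tool_name: str,
    tools: Dict[str, Callable[[str], str]],
    payload: str,
) -> str:
    allowed = ROLE_PERMISSIONS.get(agent_role, set())  # unknown roles get nothing
    if tool_name not in allowed:
        raise PermissionDenied(f"{agent_role} may not call {tool_name}")
    return tools[tool_name](payload)


tools = {
    "read_crm": lambda query: f"crm record for {query}",
    "send_email": lambda body: f"sent: {body}",
}

sent = call_tool("outreach_agent", "send_email", tools, "hi")
```

With this in place, a compromised or confused research agent can still read the CRM, but a `send_email` call from it raises `PermissionDenied` instead of reaching a prospect.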

Human-in-the-Loop Is Not a Weakness

IBM's HITL explainer makes the case clearly: embedding human oversight into critical decision points is how organizations reduce risk and maintain accountability. WitnessAI's enterprise guide explains HITL as a direct mechanism for meeting regulatory obligations.

HITL checkpoints are how you calibrate how much autonomy to extend to a system based on demonstrated reliability. New agentic deployments start with low autonomy and HITL at high-stakes decision points. As the system proves itself, the checkpoints loosen. Lumenova's HITL oversight analysis provides a useful framework for deciding between in-the-loop, on-the-loop, and out-of-the-loop oversight models.

For implementation references: Parseur's 2026 HITL guide, Holistic AI's HITL overview, WorkOS's HITL architecture guide, and SuperAnnotate's evaluation loop explainer.

What Good Governance Looks Like in Practice

Every action is logged with:

- Timestamp + Agent ID + Decision path

- Tool called / API triggered / Output produced

- Human approval status (if required)

High-stakes triggers pause and route to a human:

- Sending external communications to named accounts

- CRM data modification above a threshold

- Escalation to an executive contact

- Any action outside the defined ICP scope

Anomaly detection runs continuously:

- Infinite loop detection (fail after N retries)

- Unusual outreach frequency (spam risk monitoring)

- Access attempts outside RBAC scope

- Hallucination detection before message send
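The first two lists above, structured logging and high-stakes pauses, can be sketched together. This is a minimal example under our own assumptions: the action names in `HIGH_STAKES`, the `execute` function, and the in-memory `audit_log` list are hypothetical, and a real deployment would write to durable, append-only storage.

```python
import json
from datetime import datetime, timezone
from typing import List, Optional

# Hypothetical action names that must pause for human approval.
HIGH_STAKES = {"email_named_account", "bulk_crm_update", "executive_escalation"}

audit_log: List[str] = []  # stand-in for durable, append-only storage


def execute(
    agent_id: str,
    action: str,
    decision_path: List[str],
    approved_by: Optional[str] = None,
) -> str:
    needs_human = action in HIGH_STAKES
    status = "executed"
    if needs_human and approved_by is None:
        status = "paused_for_approval"  # route to a human instead of acting

    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "decision_path": decision_path,
        "action": action,
        "approval": approved_by if needs_human else "not_required",
        "status": status,
    }
    audit_log.append(json.dumps(entry))  # every attempt is logged, even paused ones
    return status


routine = execute("outreach-1", "send_followup", ["low reply rate"])
blocked = execute("outreach-1", "email_named_account", ["key account went cold"])
cleared = execute(
    "outreach-1", "email_named_account", ["key account went cold"], approved_by="ae@example.com"
)
```

The routine follow-up runs immediately, the first named-account email pauses for approval, and the same action goes through once an approver is recorded. All three attempts land in the log.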

McKinsey projects that by 2026, nearly half of all enterprise AI governance frameworks will include real-time monitoring and adaptive compliance as standard requirements. For comprehensive academic treatment, MIT Press's research on AI governance risk and NIST's AI RMF 1.0 are the authoritative references.

13. The Honest Takeaway: Neither Is Better — One Is Right for Your Problem

AI Agents are the right tool when you have a clearly defined, high-volume, repeatable task that lives in one system and needs to be done faster and cheaper than humans can do it. They're underrated, underutilized, and genuinely excellent within their scope. Ruh AI uses them extensively — as the precise execution units inside a larger agentic system.

Agentic AI is the right tool when you have a complex goal that spans multiple systems, requires autonomous multi-step planning, and creates enough value to justify the engineering and governance investment. Most things called "agentic" are just chains of agents with no real orchestration. McKinsey's research confirms: fewer than 10% of AI use cases deployed make it past the pilot stage — and the gap between expectation and reality is 42%.

The organizations getting this right — including the sales teams using Ruh AI — understand that AI Agents and Agentic AI aren't competing. They're complementary layers. Agents provide the execution muscle. Agentic AI provides the strategic nervous system. Together, they form an autonomous operation that can perceive, reason, act, and learn at a scale no human team can match.

The question isn't which is better. The question is: what does your workflow actually need?

If you're trying to answer that question for a revenue or sales motion, the Ruh AI blog is a useful place to keep reading — and the Ruh AI team is a useful call to have before you commit to an architecture.

Frequently Asked Questions

Q: Are AI agents part of agentic AI, or are they separate?

Ans: They can be either. Individual AI agents can run as standalone systems or serve as the specialized "workers" inside an agentic system, where the orchestration layer coordinates them. IBM explains it clearly: not all AI agents are agentic; it depends on the orchestration framework. Ruh AI's seven types of AI agents maps out the full spectrum from simple reactive agents to fully agentic systems.

Q: What's the minimum viable setup for an agentic AI system?

Ans: Four components: at least two specialized agents, an orchestration layer with dynamic task routing, a shared memory mechanism, and a logging system for audit trails. Without all four, you have connected agents, not true agentic AI. Ruh AI's orchestration guide is a practical reference for what this minimum architecture actually looks like.

Q: Which is more expensive to build and maintain?

Ans: Agentic AI is significantly more expensive — in engineering time, infrastructure (vector databases, orchestration frameworks, monitoring), and governance overhead. The cost-benefit calculus favors agents for narrow tasks and agentic systems for complex, high-value end-to-end workflows. For teams evaluating this for sales use cases, Ruh AI's AI SDR is a purpose-built agentic system that removes that infrastructure burden entirely.

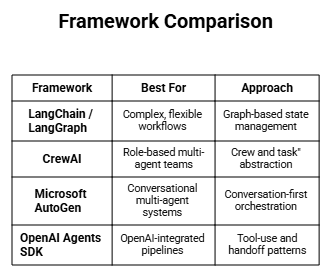

Q: What frameworks are used to build agentic AI systems?

Ans: LangChain (modular agent chaining), CrewAI (role-based multi-agent teams), Microsoft AutoGen (conversation-first orchestration), and OpenAI's Agents SDK. Ruh AI's top 10 AI agent tools breakdown includes an updated evaluation of these frameworks alongside the specialized tools that integrate with them.

Q: What are vector databases and why do agentic systems need them?

Ans: Vector databases (Pinecone, Milvus, Weaviate, FAISS) store information as high-dimensional numerical vectors, enabling semantic retrieval — not just keyword matching. This is how agentic AI achieves persistent memory. AI Multiple's RAG vector DB guide covers performance and cost tradeoffs across six platforms. For a sales-specific explanation of why memory matters so much, Ruh AI's AI agent memory systems article is the right starting point.

Q: How do you prevent an agentic AI system from going rogue?

Ans: Layer your defenses: RBAC limits what each agent can touch, HITL checkpoints require human approval for high-stakes actions, infinite loop detection kills runaway workflows, and audit logging makes every decision traceable. NIST's AI RMF provides the industry standard framework. Ruh AI's orchestration guide covers the practical implementation of these safeguards in a production agentic pipeline.

Q: Is "autonomous AI" the same as "agentic AI"?

Ans: Largely yes, but with nuance. "Autonomous AI" is the property; "Agentic AI" is the architecture that enables it through orchestration, persistent memory, and multi-step reasoning. All agentic AI is autonomous; not all autonomous AI is agentic. MIT's agentic AI overview draws this distinction clearly. Ruh AI's blog covers this and related architectural topics in depth across multiple articles.
