
TL;DR / Summary:

On the morning of March 31, 2026, one of the biggest proprietary source code exposures in AI industry history unfolded — not through a cyberattack, not through espionage, but through a single misconfigured build file. Anthropic, the company behind Claude and one of the most closely watched AI labs in the world, accidentally published the complete internal source code of Claude Code to the public npm registry.

By the time Anthropic pulled the package at around 08:00 UTC, the code had already been copied so widely that it could never be recalled. What followed became one of the most extraordinary sequences of events in modern software history: a record-breaking GitHub repository, a DMCA war, a concurrent malware attack, and a legal puzzle that nobody in the AI industry knows how to solve.

Here is everything that happened, why it happened, what was inside, and what the damage really looks like.

Ready to break it down? Here's what's covered:

- What Is the Anthropic Claude Code Leak and Why Does It Matter?

- How the Anthropic Claude Code Vulnerability Happened: A Single Missing Config Line

- What the Leaked Claude Code Source Code Actually Exposed

- Controversial Internal Features the Anthropic Claude Code Leak Revealed

- The Concurrent Axios npm Supply-Chain Attack: A Separate but Critical Security Event

- How the Anthropic Claude Code Leak Spread: The Fastest-Growing GitHub Repository in History

- The Legal Puzzle: Can Anthropic DMCA the Claude Code Python Rewrite?

- How Much Harm Did the Anthropic Claude Code Leak Actually Cause?

- What Measures Has Anthropic Taken Following the Claude Code Source Leak?

- What the Anthropic Claude Code Leak Means for the Broader AI Agent Industry

- Frequently Asked Questions About the Anthropic Claude Code Leak

What Is the Anthropic Claude Code Leak and Why Does It Matter?

Claude Code is Anthropic's flagship AI coding agent — a command-line tool that lets developers interact with Claude directly from their terminal to edit files, execute commands, manage Git workflows, and run complex multi-step engineering tasks autonomously. It is not simply a wrapper around an API. It is a sophisticated agentic harness — an orchestration layer of tools, memory systems, permission logic, and multi-agent coordination built on top of the Claude model.

That harness, which VentureBeat ties to $2.5 billion in annualized recurring revenue for Claude Code alone, is now fully public. And the community that got access spent the next 24 hours taking it apart piece by piece.

The leak matters far beyond Anthropic. As the AI agent ecosystem matures — with platforms like Ruh.ai building enterprise-grade agentic automation on top of the same foundational models — what happens to Claude Code's proprietary architecture has direct implications for every team building or adopting AI agents in 2026. The harness is, in many ways, the product.

How the Anthropic Claude Code Vulnerability Happened: A Single Missing Config Line

The npm Packaging Error That Exposed Everything

At approximately 04:00 UTC on March 31, 2026, Anthropic pushed version 2.1.88 of @anthropic-ai/claude-code to the public npm registry. Bundled with that routine update was a 59.8 MB JavaScript source map file — a debugging artifact that is never supposed to ship with a production release.

The source map file did not contain the code directly. As The Register confirmed, the map file contained a reference that pointed to a zip archive (src.zip) hosted on Anthropic's own Cloudflare R2 cloud storage bucket — publicly accessible, no authentication required. Anyone who followed the link could download the complete source.
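To make the mechanism concrete, here is a minimal sketch of auditing a source map before it ships. It assumes only the standard Source Map v3 JSON fields (sources, sourcesContent, sourceRoot), and audit_source_map is a hypothetical name. Because the reports above do not say which field held the reference to src.zip, the sketch checks only sourceRoot and sources for absolute URLs; it is not Anthropic's tooling.

```python
import json
import re

# Absolute http(s) URLs; a source map usually lists relative paths instead.
URL_PATTERN = re.compile(r"https?://[^\s\"']+")


def audit_source_map(map_text: str) -> dict:
    """Summarize what a Source Map v3 file would reveal if it were published."""
    data = json.loads(map_text)
    sources = data.get("sources", [])
    # sourcesContent, when present, carries each original file's full text.
    contents = data.get("sourcesContent") or []
    embedded = [src for src, text in zip(sources, contents) if text]
    # Only sourceRoot and sources are checked; other fields are out of scope here.
    fields = [data.get("sourceRoot") or ""] + list(sources)
    urls = [url for field in fields for url in URL_PATTERN.findall(field)]
    return {
        "source_files": len(sources),
        "embedded_sources": len(embedded),
        "external_urls": urls,
    }
```

Run over the map that sits next to each published .js file, it answers two questions quickly: does the map embed original source, and does it point anywhere outside the package?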

The Two Compounding Technical Failures Behind the Leak

A missing .npmignore entry. As software engineer Gabriel Anhaia wrote in a widely cited post-incident analysis: "A single misconfigured .npmignore or files field in package.json can expose everything." Either a release team member failed to add *.map to the project's .npmignore file, or the files field in package.json (an allowlist of what gets published) was broad enough to sweep in debug artifacts. Whichever it was, the 59.8 MB map file slipped through.
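A pre-publish guard can catch this class of mistake no matter which mechanism failed. The sketch below assumes the JSON shape that recent npm versions print for npm pack --dry-run --json (an array of package objects, each with a files list of path/size/mode entries); verify that shape against your npm version. The function name forbidden_files and the deny-list patterns are illustrative assumptions.

```python
import fnmatch
import json

# Illustrative deny-list of file names that should not reach the registry.
FORBIDDEN_PATTERNS = ("*.map", "*.zip", ".env*")


def forbidden_files(pack_json: str, patterns=FORBIDDEN_PATTERNS) -> list[str]:
    """Return packed paths whose file name matches any forbidden pattern."""
    hits = []
    for package in json.loads(pack_json):
        for entry in package.get("files", []):
            path = entry["path"]
            name = path.rsplit("/", 1)[-1]  # match on the file name only
            if any(fnmatch.fnmatchcase(name, p) for p in patterns):
                hits.append(path)
    return hits
```

In CI you would feed it the captured output of npm pack --dry-run --json and fail the release if the list is non-empty. Checking the packed file list tests what will actually ship, instead of trusting that .npmignore or files was written correctly.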

A known Bun toolchain bug. Claude Code is built using Bun, a JavaScript runtime and bundler that generates source maps by default unless explicitly disabled. An open bug report (oven-sh/bun#28001, filed March 11, 2026) describes source maps appearing in production builds even when the configuration says they should be off. Anthropic's build pipeline may have triggered this bug.

Boris Cherny, a Claude Code engineer at Anthropic, nonetheless described the cause as "plain developer error, not a tooling bug," though the open Bun issue is still widely acknowledged in the community as a contributing factor.
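Whatever the root cause, a bundle that ends with a sourceMappingURL comment tells you a map was generated for it. Below is a minimal sketch, assuming your build writes .js files under a single output directory. The function names are hypothetical, and the regex covers only the conventional //# sourceMappingURL= line comment (plus the legacy //@ form), not every way a map can be referenced, such as the SourceMap HTTP header.

```python
import re
from pathlib import Path

# Conventional trailing comment, e.g. "//# sourceMappingURL=cli.js.map".
SOURCE_MAP_COMMENT = re.compile(r"^//[#@]\s*sourceMappingURL=(\S+)\s*$", re.MULTILINE)


def referenced_maps(bundle_text: str) -> list[str]:
    """Return every source map URL referenced by a JavaScript bundle."""
    return SOURCE_MAP_COMMENT.findall(bundle_text)


def scan_build_dir(build_dir: str) -> dict[str, list[str]]:
    """Map each .js file under build_dir to the source maps it references."""
    results = {}
    for path in sorted(Path(build_dir).rglob("*.js")):
        refs = referenced_maps(path.read_text(encoding="utf-8", errors="replace"))
        if refs:
            results[str(path)] = refs
    return results
```

A non-empty result from scan_build_dir on a production build is a signal to stop the release and check the bundler's source map settings.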

Anthropic's official statement, shared with BleepingComputer, Fortune, The Register, and others, was consistent: "This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again."

Nobody hacked anything. The code was simply there.

Why This Anthropic Leak Is More Alarming: It Has Happened Before

Fortune reported that a nearly identical source-map leak occurred with an earlier Claude Code version in February 2025, 13 months before this one. No public post-mortem was ever released after that incident, and whatever fix followed was not enough to prevent a recurrence. The March 31 event was also Anthropic's second major data exposure in a single week: just days prior, roughly 3,000 internal files, including a draft blog post about an unreleased model, had been left accessible via a publicly facing CMS.

What the Leaked Claude Code Source Code Actually Exposed

The leaked archive contained ~512,000 lines of TypeScript across approximately 1,906 files — the complete agentic harness. CNBC confirmed the scope, noting that the leak gave competitors and developers a direct blueprint for how one of the most sophisticated AI coding tools in the world actually works under the hood.

Key components exposed included:

- The Query Engine (~46,000 lines): the largest single module, handling all LLM API calls, streaming, caching, and orchestration

- ~40 permission-gated tools: file read/write, bash execution, web fetch, LSP integration, and more

- Multi-agent orchestration system: a coordinator that spawns parallel sub-agents sharing a prompt cache, reducing token costs significantly

- Three-layer persistent memory architecture: a self-healing memory system where the agent treats its own memory as a "hint" and verifies all context against the actual codebase before acting — as VentureBeat's deep analysis detailed

- Internal system prompts — compiled and publicly analyzed within hours of the leak

- Internal model performance benchmarks: Capybara (internal codename for a Claude 4.6 variant) at version 8 had a 29–30% false claims rate, a regression from 16.7% at v4 — precisely the kind of data competitors should never have access to
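The "memory as a hint" idea in the list above can be sketched as verify-before-use: every remembered fact records a hash of the file it came from, and a fact is trusted only while that file is unchanged. This is a hypothetical illustration, not the leaked three-layer design; MemoryNote, remember, and verify are invented names, and the reader function stands in for whatever file access an agent actually has.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Optional

# A reader returns a file's current text, or None if the file is missing.
Reader = Callable[[str], Optional[str]]


def digest(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class MemoryNote:
    path: str
    fact: str
    content_hash: str  # hash of the file at the moment the fact was recorded


def remember(read: Reader, path: str, fact: str) -> MemoryNote:
    text = read(path)
    if text is None:
        raise FileNotFoundError(path)
    return MemoryNote(path, fact, digest(text))


def verify(read: Reader, notes: list[MemoryNote]) -> tuple[list[MemoryNote], list[MemoryNote]]:
    """Split notes into (still valid, stale) by re-checking the live files."""
    valid, stale = [], []
    for note in notes:
        text = read(note.path)
        if text is not None and digest(text) == note.content_hash:
            valid.append(note)
        else:
            stale.append(note)
    return valid, stale
```

Stale notes are returned separately rather than silently dropped, so a caller can re-read those files and refresh the memory; that is one plausible reading of "self-healing", not a confirmed description of the leaked code.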

Unreleased Claude Code Features Found Hidden in the Source

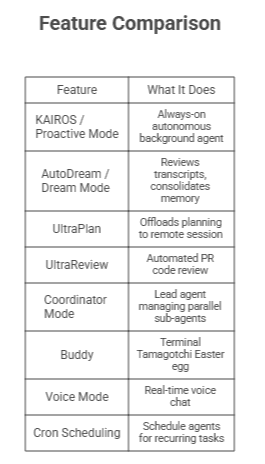

The community uncovered dozens of unreleased features hidden behind feature flags. Axios reported that Anthropic confirmed some were fully built but not yet shipped.

As Alex Kim's deep technical analysis concluded: "The real damage isn't the code. It's the feature flags. KAIROS, the anti-distillation mechanisms: these are product roadmap details that competitors can now see and react to. The code can be refactored. The strategic surprise can't be un-leaked."
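Why do feature flags leak a roadmap? With runtime gating, the dormant code and the flag names live in the same source tree as everything else, so anyone holding the source can list them. The sketch below shows that in miniature. The flag names, the is_enabled helper, and its call-site convention are all hypothetical; Claude Code's actual flag mechanism is not reproduced here.

```python
import re

# Hypothetical registry: gated code is present in the source but dormant by default.
DEFAULT_FLAGS = {"NEW_PLANNER": False, "BETA_VOICE": False}


def is_enabled(flag: str, config: dict[str, bool]) -> bool:
    """Runtime gate: config overrides defaults, unknown flags are off."""
    return config.get(flag, DEFAULT_FLAGS.get(flag, False))


# Hypothetical call-site convention: is_enabled("FLAG_NAME", ...).
FLAG_CALL = re.compile(r"""is_enabled\(\s*["']([A-Z][A-Z0-9_]*)["']""")


def flags_in_source(source: str) -> set[str]:
    """Recover every flag name an outside reader can see in the source."""
    return set(FLAG_CALL.findall(source))
```

Turning a flag off hides a feature from users, not from readers of the code, which is the gap Kim's quote describes.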

If you're exploring what these kinds of autonomous agent architectures look like in a managed, enterprise-ready form, Ruh.ai has covered the broader AI agent landscape extensively — including a detailed breakdown of OpenClaw vs ClawdBot vs Moltbot, the key open-source agents that emerged from and were influenced by the same ecosystem.

Controversial Internal Features the Anthropic Claude Code Leak Revealed

Undercover Mode: Claude's Hidden Identity System in Open-Source Repos

Among the most debated discoveries was a ~90-line file called undercover.ts. As The Register's follow-up deep-dive confirmed, this mode activates automatically when Anthropic employees (USER_TYPE === 'ant') use Claude Code to contribute to external or public repositories. When active, it instructs Claude to:

- Never reference internal codenames (Capybara, Fennec, Tengu, Numbat) in commits or PR descriptions

- Strip Co-Authored-By attribution lines from Git commits

- Never identify itself as "Claude Code" or as an AI in any public-facing commit context

- Follow the directive embedded in the system prompt: "Do not blow your cover"

Critically, the mode has no force-off switch. If Claude Code cannot confirm it is operating inside an internal Anthropic repository, it stays undercover by default — a one-way door with no override.
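The gating behavior described above amounts to a fail-closed check. Here is a hypothetical reconstruction of that decision logic, plus the co-author trailer stripping, written from the description in this section rather than from undercover.ts. The function names are invented; only the USER_TYPE value 'ant' comes from the reporting.

```python
import re
from typing import Optional

# Git trailer lines such as "Co-Authored-By: Name <email>", any letter case.
CO_AUTHOR_TRAILER = re.compile(r"^co-authored-by:.*(?:\n|$)", re.IGNORECASE | re.MULTILINE)


def undercover_active(user_type: str, repo_is_internal: Optional[bool]) -> bool:
    """On for employees unless the repo is positively confirmed internal.

    None means the check was inconclusive; it counts as "not confirmed".
    """
    return user_type == "ant" and repo_is_internal is not True


def scrub_commit_message(message: str) -> str:
    """Drop co-author trailers and the blank lines they leave at the end."""
    return CO_AUTHOR_TRAILER.sub("", message).rstrip("\n") + "\n"
```

Because an inconclusive check (None) is treated the same as "not internal", nothing short of a positive confirmation turns the mode off, which is what makes it a one-way door.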

Anthropic's defenders argue this was a product security measure: preventing unreleased internal codenames and project names from accidentally appearing in public commit histories. The critics' counter is harder to dismiss: the side effect is that AI-authored code contributed to open-source projects carries no disclosure, and the tool was specifically built to guarantee that outcome. As one highly upvoted Hacker News comment captured: if a tool is willing to conceal its own identity in commits, what else is it willing to conceal?

This is also precisely why transparency in AI agent design matters. Platforms built for business automation, like Ruh.ai's ClawdBot, have explored what it means to build AI agents that operate autonomously in business contexts — and the accountability and disclosure questions that come with it.

Anti-Distillation: Poisoning Competitors' Training Data

The ANTI_DISTILLATION_CC flag was confirmed in the source by Alex Kim's analysis. When enabled, it injects fake tool call definitions into API traffic, effectively poisoning any data a competitor might try to collect from API logs for model distillation. A separate cryptographic obfuscation technique also prevents eavesdroppers from capturing Claude's internal chain-of-thought reasoning.
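A minimal sketch of the decoy idea, assuming decoys are simply extra tool definitions mixed into the request while the client privately knows which tools are real. The reporting does not describe the actual injection format, so with_decoys, is_real_call, and the dictionary shape of a tool definition are assumptions.

```python
import random
from typing import Any

Tool = dict[str, Any]  # assumed shape: at least a "name" key


def with_decoys(real_tools: list[Tool], decoys: list[Tool], enabled: bool, seed: int) -> list[Tool]:
    """Return the tool list to send, mixing in decoy definitions when enabled."""
    if not enabled:
        return list(real_tools)
    mixed = list(real_tools) + list(decoys)
    random.Random(seed).shuffle(mixed)  # seeded so the order is reproducible
    return mixed


def is_real_call(tool_name: str, real_tools: list[Tool]) -> bool:
    """The client knows the real tool set, so calls to decoys can be ignored."""
    return any(t["name"] == tool_name for t in real_tools)
```

Anyone harvesting logged traffic now sees tool definitions the real agent never uses, which makes that traffic less trustworthy as distillation data.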

Internal Anthropic Model Codenames Confirmed

The leak confirmed four internal model codenames, as reported by VentureBeat:

- Capybara — a Claude 4.6 variant

- Fennec — Opus 4.6

- Numbat — an unreleased model still in testing

- Tengu — referenced throughout, including in Undercover Mode

Frustration Detection: Silent Emotional Monitoring

The source also contains a regex-based system that scans user input for profanity and silently logs when a user becomes frustrated with Claude's performance. It has been confirmed real, and it records this emotional friction without the user's awareness.
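A regex-based detector of this kind takes only a few lines. The sketch below is hypothetical: the term list is deliberately mild and invented for illustration, and the function only returns matches rather than logging anything.

```python
import re

# Hypothetical, deliberately mild term list; not the list found in the leak.
FRUSTRATION_TERMS = ("wtf", "ffs", "damn", "useless", "this is broken")

FRUSTRATION_RE = re.compile(
    r"\b(?:" + "|".join(re.escape(t) for t in FRUSTRATION_TERMS) + r")\b",
    re.IGNORECASE,
)


def frustration_signal(message: str) -> list[str]:
    """Return matched terms, lowercased, in order of appearance."""
    return [m.group(0).lower() for m in FRUSTRATION_RE.finditer(message)]
```

The word boundaries keep "damn" from matching inside longer words such as "damnation", a common source of false positives in keyword filters.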

The Concurrent Axios npm Supply-Chain Attack: A Separate but Critical Security Event

A crucial detail that much of the coverage buried deserves its own section. On the same morning as the Claude Code leak, between 00:21 and 03:29 UTC, a separate and unrelated npm supply-chain attack was active against the axios HTTP library.

As StepSecurity first identified and The Register confirmed, malicious versions axios@1.14.1 and axios@0.30.4 were published by attackers who had hijacked a lead axios maintainer's npm account. The malicious versions injected a hidden dependency (plain-crypto-js) that silently deployed a cross-platform Remote Access Trojan (RAT) targeting macOS, Windows, and Linux. Google's Threat Intelligence Group later attributed the attack to UNC1069, a suspected North Korean threat actor.

Developers who installed or updated Claude Code via npm during that specific three-hour window may have inadvertently pulled the malicious axios version alongside it. The two events were coincidental, not connected — but the overlap made a dangerous morning significantly worse.

If you installed or updated Claude Code via npm on March 31 between 00:21 and 03:29 UTC:

- Search your lockfiles (package-lock.json, yarn.lock, bun.lockb) for axios@1.14.1, axios@0.30.4, or plain-crypto-js

- If found, treat the affected machine as fully compromised — rotate all secrets and credentials

- Switch to Anthropic's native installer going forward: curl -fsSL https://claude.ai/install.sh | bash

- Follow Malwarebytes' complete remediation guidance for full cleanup steps
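For package-lock.json, the lockfile search in the first step can be scripted. This sketch assumes npm's lockfile layouts (a flat packages map in lockfileVersion 2 and 3, a nested dependencies tree in version 1) and checks only the indicators named above. yarn.lock and bun.lockb need separate handling, and scan_package_lock is a hypothetical name. Treat it as a first-pass check, not a substitute for the remediation guidance.

```python
import json

# Indicators named in the advisory coverage above.
BAD_VERSIONS = {"axios": {"1.14.1", "0.30.4"}}
BAD_PACKAGES = {"plain-crypto-js"}


def _check(name: str, version: str, hits: list[str]) -> None:
    if name in BAD_PACKAGES or version in BAD_VERSIONS.get(name, set()):
        hits.append(f"{name}@{version}")


def scan_package_lock(lock_text: str) -> list[str]:
    """Return compromised name@version entries found in a package-lock.json."""
    lock = json.loads(lock_text)
    hits: list[str] = []
    # lockfileVersion 2 and 3: flat "packages" map keyed by install path.
    for path, meta in lock.get("packages", {}).items():
        if not path:
            continue  # "" is the root project itself
        name = meta.get("name") or path.rsplit("node_modules/", 1)[-1]
        _check(name, meta.get("version", ""), hits)
    # lockfileVersion 1 (also present in v2): nested "dependencies" tree.
    stack = [lock.get("dependencies", {})]
    while stack:
        for name, meta in stack.pop().items():
            _check(name, meta.get("version", ""), hits)
            stack.append(meta.get("dependencies", {}))
    return sorted(set(hits))  # v2 lists packages twice, so de-duplicate
```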

How the Anthropic Claude Code Leak Spread: The Fastest-Growing GitHub Repository in History

At 04:23 UTC, Chaofan Shou, an intern at Solayer Labs, posted a direct download link on X. As Decrypt reported, 16 million people descended on the thread. Within two hours, the code had been mirrored across GitHub with 41,500+ forks.

Anthropic began issuing DMCA takedown notices against direct mirrors. GitHub complied immediately. But two factors made containment practically impossible:

Decentralized mirrors. The account @gitlawb uploaded the code to Gitlawb, a decentralized git platform, with a simple message: "Will never be taken down." DMCA enforcement has no reliable reach over decentralized infrastructure.

claw-code — and the open-source agent wave it triggered. Korean developer Sigrid Jin (@instructkr) — previously featured by the Wall Street Journal for consuming 25 billion Claude Code tokens — woke at 4 a.m. to the news. Using an AI orchestration tool called oh-my-codex, he rebuilt Claude Code's core architecture from scratch in Python before sunrise. The repository, claw-code, contained no original TypeScript — it was a clean-room rewrite reimplementing architectural patterns without copying Anthropic's proprietary source.

As Layer5's comprehensive analysis documented, claw-code reached 50,000 GitHub stars in approximately two hours and 100,000 stars within one day — the fastest-growing repository in GitHub history. A Rust port (Claurst) followed within 24 hours. A stripped version with telemetry removed and all experimental features unlocked was uploaded to IPFS.

One developer reimplementing the core architecture of a 512,000-line proprietary codebase overnight with an AI tool is exactly what Ruh.ai's analysis of OpenClaw as an AI assistant had pointed toward: the architectural patterns behind agentic systems are rapidly becoming the shared foundation of an open ecosystem, whether the original builders intended that or not.

The Legal Puzzle: Can Anthropic DMCA the Claude Code Python Rewrite?

Anthropic's DMCA campaign removed direct TypeScript mirrors from GitHub, but the legal picture around claw-code is genuinely unresolved, as Engineers Codex thoroughly analyzed.

Why DMCA may not apply to the rewrite. A complete rewrite in a new programming language can arguably be treated as a new creative work, potentially a "transformative derivative," which may fall outside standard DMCA reach. Additionally, because Anthropic published the code themselves via npm rather than having it stolen, the DMCA's anti-circumvention protections likely don't apply.

The AI copyright catch-22. Anthropic's CEO has implied that significant portions of Claude Code were written by Claude. As Decrypt noted, the DC Circuit held in March 2025 that AI-generated work does not carry automatic copyright protection. And as VentureBeat's enterprise security analysis noted, Gartner flagged that Claude Code is roughly 90% AI-generated per Anthropic's own public disclosures, meaning the copyright claim may be substantially weaker than it appears.

The strategic contradiction. If Anthropic sues the Python rewrite's creator and argues that an AI-generated transformative output infringes its copyright, it risks undermining its own "fair use" defense in the separate training-data copyright cases, a defense the entire AI industry relies on. As Layer5 put it: the clean-room rewrite strategy pioneered by claw-code creates a legal template that could reshape how code IP functions across the AI era.

At the time of writing, claw-code remains live. Direct TypeScript mirrors have been removed. The legal status of clean-room AI rewrites has never been tested in court.

How Much Harm Did the Anthropic Claude Code Leak Actually Cause?

Competitive Intelligence: The Most Permanent Damage

As Axios reported, competitors including Cursor, GitHub Copilot, and Windsurf now have a detailed blueprint for building a production-grade AI agent harness — along with Anthropic's internal benchmarks showing exactly where its models are still struggling. Capybara v8's 29–30% false claims rate, the refactoring aggression problem, the anti-distillation countermeasures — none of this should be visible to a competitor. You can refactor code in a week; you cannot un-leak a roadmap.

For enterprises and AI platforms watching this space — including teams building on top of agentic AI infrastructure like Ruh.ai's AI SDR — the lesson is that the orchestration layer itself is now becoming a competitive and open battleground, not a proprietary moat.

Reputational Damage at a Sensitive Moment

Fortune confirmed that Anthropic is preparing for an IPO and that enterprise customers account for roughly 80% of Claude Code's revenue. Enterprise customers pay partly for the assurance that their vendor's technology is proprietary and protected. The Undercover Mode discovery adds further friction, not because it proves wrongdoing, but because it raises questions a "safety-first" lab is poorly positioned to answer cleanly.

The Register's follow-up investigation documented additional concerns: persistent default telemetry collecting user ID, session ID, email, platform type, and active feature gates — and the broader scope of system access Claude Code exercises over installed machines.

What Was NOT Exposed in the Claude Code Leak

Anthropic was consistent across all its statements: no customer data, no user credentials, no model weights were involved. The leak was of the agentic harness code — the engineering scaffolding — not the underlying AI model or anything that directly compromises users' accounts or data. BleepingComputer confirmed this directly from Anthropic's official statement.

Increased Attack Surface for Security Researchers and Bad Actors

VentureBeat's enterprise security deep-dive and The Hacker News both flagged a serious secondary consequence: because the leak revealed the exact orchestration logic for Hooks and MCP servers, attackers can now design malicious repositories tailored to trick Claude Code into running background commands or exfiltrating data before a trust prompt appears. Security firm Straiker mapped three specific attack compositions that became practical — not theoretical — the moment the implementation was legible.
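One defensive habit this suggests is inspecting a repository's agent configuration before opening it. The sketch below assumes the project-level files Claude Code's documentation describes (.claude/settings.json and .claude/settings.local.json, where hooks are configured, and .mcp.json for project-scoped MCP servers); treat those paths and keys as assumptions to verify against the current docs. The function names are hypothetical, and the check only flags files for human review rather than judging whether they are malicious.

```python
import json
from pathlib import Path

# Assumed project-level config files and the key that makes each one risky.
CONFIG_CHECKS = {
    ".claude/settings.json": "hooks",
    ".claude/settings.local.json": "hooks",
    ".mcp.json": "mcpServers",
}


def check_config(rel_path: str, text: str) -> list[str]:
    """Flag one config file's contents; invalid JSON is flagged too."""
    key = CONFIG_CHECKS[rel_path]
    try:
        data = json.loads(text)
    except ValueError:
        return [f"{rel_path}: invalid JSON, inspect manually"]
    if isinstance(data, dict) and data.get(key):
        return [f"{rel_path}: defines '{key}', review before trusting this repo"]
    return []


def audit_repo(root: str) -> list[str]:
    """Run check_config on every assumed config file present under root."""
    findings = []
    for rel_path in CONFIG_CHECKS:
        path = Path(root) / rel_path
        if path.is_file():
            text = path.read_text(encoding="utf-8", errors="replace")
            findings += check_config(rel_path, text)
    return findings
```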

Code Permanence: Why This Cannot Be Contained

512,000 lines of Claude Code are permanently in the wild. Decentralized mirrors, IPFS archives, torrents, and thousands of individual copies ensure that even aggressive DMCA enforcement cannot retract the exposure. As Decrypt concluded: "Anthropic didn't mean to open-source Claude Code. But on Tuesday, the company effectively did — and not even an army of lawyers can put that toothpaste back in the tube."

What Measures Has Anthropic Taken Following the Claude Code Source Leak?

Anthropic's confirmed public actions to date:

- ~08:00 UTC on March 31: Removed version 2.1.88 from the npm registry — approximately four hours after the push went live

- Public statement across major outlets confirming human error, denying it was a security breach, and committing to rolling out preventive measures

- Active DMCA campaign against GitHub mirrors hosting the original TypeScript — documented publicly via GitHub's DMCA log

- Recommendation to use the native installer (curl -fsSL https://claude.ai/install.sh | bash) instead of npm going forward

What has not happened: a detailed technical post-mortem. The February 2025 incident also produced no public accounting. Given that the same mistake recurred 13 months later, the developer community — and enterprise buyers evaluating Anthropic ahead of its IPO — are right to notice the absence.

What the Anthropic Claude Code Leak Means for the Broader AI Agent Industry

The Claude Code leak is, at its core, a story about how fragile the concept of "proprietary AI software" has become in an age of AI-assisted development. The architectural moat — the carefully engineered harness of tools, memory, orchestration, and permission systems — can now be reimplemented in a new language overnight by a single developer with an AI orchestration tool and a few hours before sunrise.

As Layer5's analysis noted: "When orchestration architecture is no longer secret, differentiation moves entirely to model capabilities and user experience." And as Gergely Orosz of The Pragmatic Engineer put it: the models are the moat. The shell around them, apparently, is not.

For any team building agentic AI systems — whether as a developer deploying Claude, a business adopting AI automation, or a platform like Ruh.ai building on top of these models — this incident is a signal: the orchestration patterns are converging into open knowledge. The competitive advantage going forward is in how you apply them, govern them, and build trust around them — not in keeping the wiring private.

For developers, this leak is also a reminder that build pipeline hygiene — specifically .npmignore configuration and source map management — is a security-critical discipline. A single unchecked line in a config file exposed the crown jewels of one of the world's most valuable AI products.

Frequently Asked Questions About the Anthropic Claude Code Leak

Was the Anthropic Claude Code leak a hack?

Ans: No. Anthropic confirmed it was a packaging error — a debug file was accidentally included in a production npm release. No external attacker was involved, and no systems were breached.

Was any customer data exposed in the Claude Code leak?

Ans: No. Anthropic explicitly confirmed that no user credentials, personal information, or model weights were exposed. Only the harness source code was leaked.

What is claw-code and is it legal?

Ans: claw-code is a clean-room Python rewrite of Claude Code's architecture built by Sigrid Jin using oh-my-codex. Its legal status is genuinely unresolved — it may qualify as a transformative derivative work outside DMCA reach, but this has never been tested in court.

Is it safe to use Claude Code after the leak?

Ans: Using Claude Code itself is safe. However, if you updated via npm between 00:21 and 03:29 UTC on March 31, 2026, check your lockfiles for the malicious axios versions (1.14.1 or 0.30.4) due to a separate, unrelated supply-chain attack active during that window.

What was Undercover Mode in Claude Code?

Ans: A feature found in undercover.ts that activates automatically for Anthropic employees when contributing to external repos. It prevents internal codenames from appearing in commits and strips AI attribution from Git history. It has no force-off switch.

How does this affect businesses building with AI agents?

Ans: The leaked orchestration architecture — agent memory, multi-agent coordination, permission gating, tool design — is now public knowledge. For businesses adopting agentic AI, this accelerates open-source alternatives and raises new questions about IP, governance, and vendor trust. Explore how Ruh.ai approaches agentic AI for business automation or get in touch with the team to discuss how managed AI agent platforms compare to building on exposed open-source patterns. You can also see how Sarah, Ruh.ai's autonomous AI SDR, applies these same principles in a governed, enterprise-ready context.
