
AI Fall Detection on Construction Sites: How Computer Vision Stops Hazards Before OSHA Arrives

Table of Contents

- Why OSHA's Inspection Model Can't Solve the Fall Hazard Problem

- How AI Vision Systems Detect Fall Hazards on Construction Sites

- Detecting a Hazard vs. Preventing a Fall: Why the Difference Matters

- What Training Data Actually Means for Construction Safety AI

By the time an OSHA inspector walks onto a construction site, the fall that shouldn't have happened already has. AI fall detection for construction is changing that equation — catching hazards in seconds, not weeks. The question is no longer whether the technology works. It's whether the industry will adopt it fast enough to matter.

Why OSHA's Inspection Model Can't Solve the Fall Hazard Problem

Falls are the leading cause of death in U.S. construction, accounting for roughly 36% of all construction fatalities annually, according to OSHA's construction safety data. That share has barely moved in over a decade, and the enforcement model designed to address it is structurally reactive.

OSHA conducted approximately 32,000 construction inspections in 2023 across the entire country. Against the backdrop of more than 700,000 active U.S. construction sites, that works out to one visit per site roughly every 22 years, even if inspections were spread evenly. The inputs are approximate, but the conclusion is not: this is the arithmetic of chronic under-coverage.
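The coverage arithmetic can be worked directly from the two figures in the paragraph above. This is a minimal back-of-envelope sketch that assumes inspections are spread evenly across sites, which they are not (OSHA also targets specific sites and responds to complaints), so treat the output as an order-of-magnitude estimate. The six-month project length is a hypothetical example.

```python
# Back-of-envelope inspection coverage, assuming inspections are spread evenly
# across sites (they are not; OSHA also targets sites and responds to complaints).


def years_between_inspections(active_sites: int, inspections_per_year: int) -> float:
    """Average years between visits to any one site under the even-spread assumption."""
    return active_sites / inspections_per_year


def expected_visits(active_sites: int, inspections_per_year: int, project_years: float) -> float:
    """Expected number of inspections during a project of the given length."""
    return inspections_per_year / active_sites * project_years


if __name__ == "__main__":
    print(f"{years_between_inspections(700_000, 32_000):.1f} years between visits")
    # A hypothetical six-month project
    print(f"{expected_visits(700_000, 32_000, 0.5):.3f} expected visits")
```

With these inputs the interval comes out near 21.9 years, and a six-month project can expect about 0.02 inspections over its entire life, which is why most projects finish without ever seeing an inspector.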

The inspection model works like this: a compliance officer arrives, documents violations, issues citations, and leaves. If a worker stood one step from an unguarded edge that morning and the edge was guarded again by the time the inspector arrived that afternoon, no citation is issued, and no one is safer tomorrow. The system is designed to penalize failures, not prevent them.

The structural gap in construction safety isn't effort — it's timing. Citations document what happened. They don't stop what's about to happen.

Site conditions change faster than any inspection cadence can track. Guardrails installed on Monday get removed Wednesday for material staging. Floor openings get covered, uncovered, and moved dozens of times across a project lifecycle. A site that passed inspection on day 30 of a 180-day project may look nothing like the site on day 90.

The result: fall protection remains OSHA's most frequently cited standard year after year, and falls still lead the agency's "Focus Four" construction hazards. That is not because contractors are reckless, but because real-time construction safety AI didn't exist at scale until recently.

How AI Vision Systems Detect Fall Hazards on Construction Sites

AI vision systems deployed on construction sites don't rely on inspectors, checklists, or incident reports. They watch continuously — trained specifically to recognize the conditions that precede falls, not just the falls themselves.

Deep Learning Object Detection

The core technology is deep learning-based object detection. Models like YOLO (You Only Look Once) and Faster R-CNN variants are trained on construction-specific datasets to identify unguarded edges, missing guardrails, open floor penetrations, and workers positioned near fall zones. Research published on arXiv in 2024–2025 reports precision above 90% for guardrail-absence detection under controlled conditions.
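A precision figure like the one above is typically computed by matching a detector's predicted boxes to hand-labeled boxes using intersection-over-union (IoU); precision is the share of predictions that match a real hazard, recall the share of real hazards found. The sketch below shows that scoring step only. The box format, the 0.5 IoU threshold, and greedy matching are common conventions assumed here, not details taken from the cited research.

```python
# Scoring a hazard detector: match predicted boxes to hand-labeled boxes by IoU.
# Box format (x1, y1, x2, y2) in pixels, the 0.5 IoU threshold, and greedy
# matching are common conventions assumed here, not taken from the cited research.

Box = tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall(preds: list[Box], truths: list[Box], thresh: float = 0.5) -> tuple[float, float]:
    """Greedy one-to-one matching; preds should be sorted by confidence, highest first."""
    matched: set[int] = set()
    true_positives = 0
    for p in preds:
        best_j, best_iou = None, thresh
        for j, t in enumerate(truths):
            if j in matched:
                continue  # each labeled hazard can be matched only once
            score = iou(p, t)
            if score >= best_iou:
                best_j, best_iou = j, score
        if best_j is not None:
            matched.add(best_j)
            true_positives += 1
    precision = true_positives / len(preds) if preds else 0.0
    recall = true_positives / len(truths) if truths else 0.0
    return precision, recall


if __name__ == "__main__":
    labeled = [(0, 0, 10, 10), (20, 20, 30, 30)]     # two missing-guardrail regions
    predicted = [(1, 1, 10, 10), (50, 50, 60, 60)]   # one hit, one false alarm
    print(precision_recall(predicted, labeled))       # (0.5, 0.5)
```

"Controlled conditions" matters here: the same detector scored on footage with heavier occlusion or poor lighting will usually produce more unmatched predictions and missed labels.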

Predictive Fall Prevention with Pose Estimation

Worker pose estimation adds a predictive dimension to AI fall detection on construction sites. Rather than flagging a violation only when a worker is already at the edge, pose estimation models track body position and movement vectors — joint angles, center of mass, and trajectory — to estimate fall risk 2–5 seconds before it materializes. A worker leaning over an unguarded edge while retrieving material generates an alert before the slip occurs, not after.
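The trajectory half of this idea can be sketched with a constant-velocity extrapolation: take the hip midpoint as a rough proxy for center of mass, estimate its velocity between two frames, and project when it would reach the edge. This is a simplified illustration, not any vendor's model. It assumes keypoints have already been mapped from image pixels onto the floor plane in meters, models the unguarded edge as the line x = edge_x, omits joint angles entirely, and uses a hypothetical 3-second alert horizon chosen from the 2–5 second range in the text.

```python
import math

# Trajectory part of pose-based fall-risk prediction only (joint angles omitted).
# Assumes keypoints are already projected from image pixels onto the floor plane
# in meters, and models the unguarded edge as the line x = edge_x. Both are
# simplifying assumptions for illustration.

Point = tuple[float, float]


def hip_center(keypoints: dict[str, Point]) -> Point:
    """Midpoint of the hips, a rough proxy for the body's center of mass."""
    (lx, ly), (rx, ry) = keypoints["left_hip"], keypoints["right_hip"]
    return ((lx + rx) / 2, (ly + ry) / 2)


def seconds_to_edge(prev: dict[str, Point], now: dict[str, Point], dt: float, edge_x: float) -> float:
    """Constant-velocity estimate of when the hip center reaches the edge line."""
    x0, _ = hip_center(prev)
    x1, _ = hip_center(now)
    gap = edge_x - x1
    if gap <= 0:
        return 0.0              # already at or past the edge line
    vx = (x1 - x0) / dt         # velocity toward the edge, m/s
    if vx <= 0:
        return math.inf         # stationary or moving away
    return gap / vx


def should_alert(t_edge: float, horizon_s: float = 3.0) -> bool:
    """Alert if the projected crossing falls inside the prediction horizon."""
    return t_edge <= horizon_s


if __name__ == "__main__":
    prev = {"left_hip": (0.9, 2.0), "right_hip": (1.1, 2.0)}   # center x = 1.0 m
    now = {"left_hip": (1.4, 2.0), "right_hip": (1.6, 2.0)}    # center x = 1.5 m
    t = seconds_to_edge(prev, now, dt=0.5, edge_x=4.0)         # 1.0 m/s, 2.5 m to go
    print(round(t, 2), should_alert(t))                        # 2.5 True
```

Production systems would smooth velocity over many frames rather than two, since single-frame keypoint jitter can swing the estimate considerably.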

Real-time hazard detection requires edge computing, not cloud processing. Camera feeds processed at 15–30 frames per second must generate alerts fast enough for someone to intervene. A round-trip to a remote cloud server can add 200–500 ms of network latency on top of inference time, consuming much of a reaction window that may be only a few seconds long. Edge hardware deployed on-site avoids that round-trip and keeps end-to-end alert latency under 300 ms on most commercial deployments.
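A simple latency budget makes the edge-versus-cloud trade-off concrete. This sketch adds up worst-case frame wait, inference, network round-trip, and notification time, then subtracts the total from a prediction horizon to show how much time is left for a worker to react. The inference and notification figures are hypothetical placeholders, and inference time is held constant across both paths for simplicity; only the 15 fps rate and the 200–500 ms round-trip range come from the text.

```python
# Worst-case alert latency budget. Inference and notification times below are
# hypothetical placeholders; the 200-500 ms round-trip range comes from the text.


def frame_interval_ms(fps: float) -> float:
    return 1000.0 / fps


def alert_latency_ms(fps: float, inference_ms: float, network_rtt_ms: float, notify_ms: float) -> float:
    """An event just after a capture waits one full frame before it is even seen."""
    return frame_interval_ms(fps) + inference_ms + network_rtt_ms + notify_ms


def reaction_margin_ms(horizon_s: float, latency_ms: float) -> float:
    """Time left for a worker to react once the alert sounds."""
    return horizon_s * 1000.0 - latency_ms


if __name__ == "__main__":
    edge = alert_latency_ms(fps=15, inference_ms=80, network_rtt_ms=0, notify_ms=50)
    cloud = alert_latency_ms(fps=15, inference_ms=80, network_rtt_ms=500, notify_ms=50)
    print(f"edge {edge:.0f} ms, cloud {cloud:.0f} ms")
    print(f"margin on a 2 s horizon: edge {reaction_margin_ms(2, edge):.0f} ms, "
          f"cloud {reaction_margin_ms(2, cloud):.0f} ms")
```

With these placeholder values the edge path lands near 197 ms and the worst-case cloud path near 697 ms, so on a 2-second prediction the cloud round-trip alone removes a quarter of the worker's reaction time.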

Multi-Camera Sensor Fusion for Full-Site Coverage

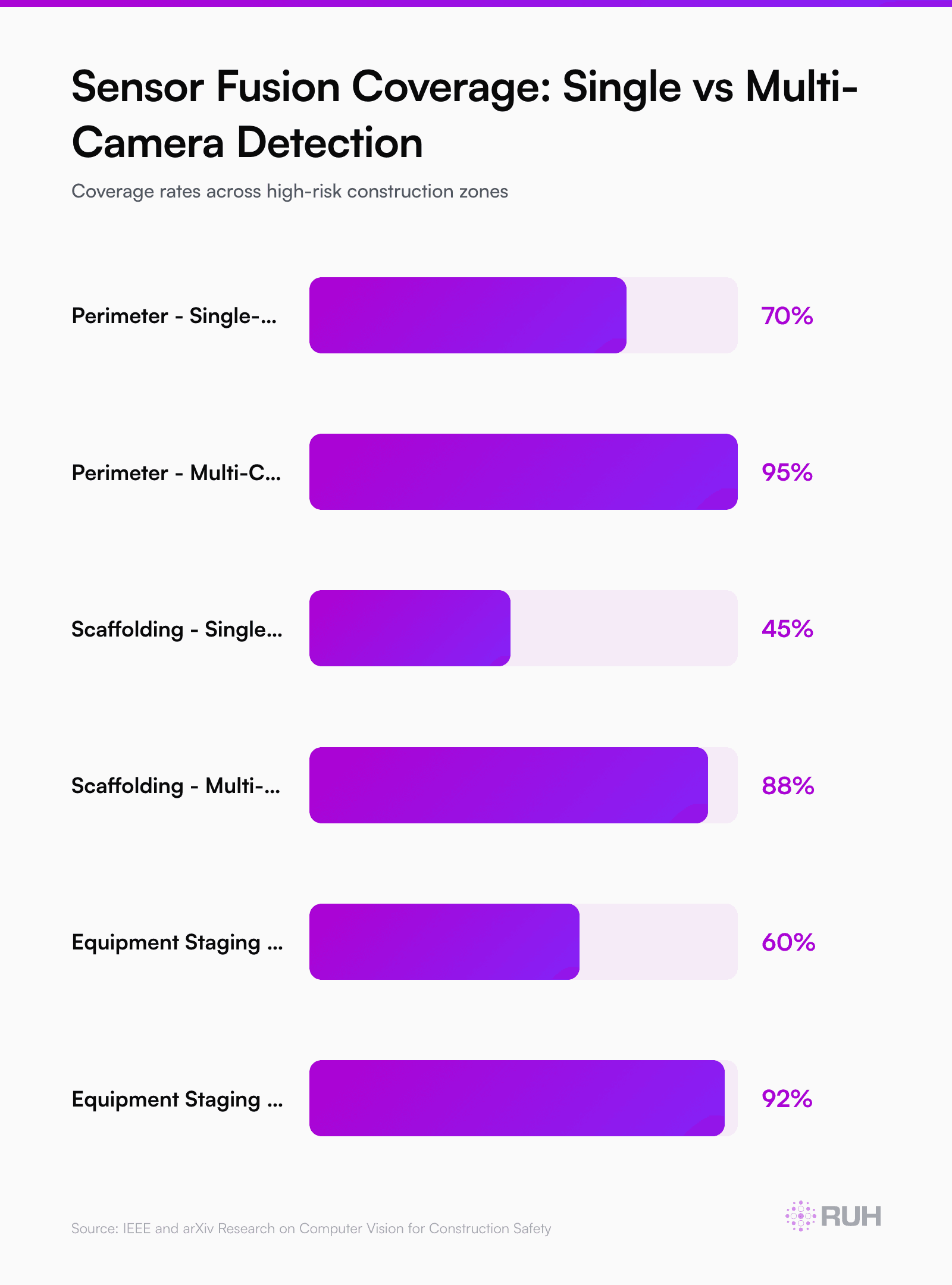

Single-camera deployments have a documented blind-spot problem. Fixed monocular cameras miss up to 40% of fall-risk events due to occlusion — workers hidden behind equipment, scaffolding, or material stacks. Multi-camera sensor fusion, combining fixed perimeter cameras with cameras mounted on cranes, lifts, and mobile equipment, closes most of those gaps and provides full-site coverage maps that static inspection grids never could.
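One simple way to reason about the coverage benefit of adding cameras is to treat the site as a grid of cells and each camera as the set of cells it can see without occlusion; total coverage is the union of those sets. This is a hypothetical illustration with made-up cell sets. Real sensor fusion also merges detections of the same worker or hazard across views, which this sketch does not attempt.

```python
from itertools import product

# Coverage view of multi-camera fusion: the site is a grid of cells, and each
# camera "sees" the cells not occluded from its viewpoint. Cell sets here are
# made up for illustration; real systems also merge detections across views,
# which this sketch does not attempt.

Cell = tuple[int, int]


def coverage(cameras: dict[str, set[Cell]], site: set[Cell]) -> float:
    """Fraction of site cells visible to at least one camera."""
    seen = set().union(*cameras.values())
    return len(seen & site) / len(site)


def blind_spots(cameras: dict[str, set[Cell]], site: set[Cell]) -> set[Cell]:
    """Cells no camera can see."""
    return site - set().union(*cameras.values())


if __name__ == "__main__":
    site = set(product(range(4), range(4)))                    # 4 x 4 grid, 16 cells
    perimeter = {(r, c) for r, c in site if r < 2}             # sees rows 0-1
    crane = {(r, c) for r, c in site if c >= 2}                # sees columns 2-3
    print(coverage({"perimeter": perimeter}, site))            # 0.5
    print(coverage({"perimeter": perimeter, "crane": crane}, site))  # 0.75
    print(sorted(blind_spots({"perimeter": perimeter, "crane": crane}, site)))
```

Listing the remaining blind-spot cells, rather than only the coverage percentage, shows where the next camera (for example, one mounted on a lift) would add the most.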

Detecting a Hazard vs. Preventing a Fall: Why the Difference Matters

Detection is necessary but not sufficient. An AI system that identifies an unguarded edge 50 feet from the nearest worker has found a violation — but not an emergency. The systems doing the most meaningful safety work distinguish between static hazard conditions and dynamic fall-risk events, then respond with proportional urgency.

Tiered Alert Logic for Construction Site Risk Detection

Tiered alert logic is the mechanism that makes construction site risk detection actionable. A missing guardrail on an unoccupied floor generates a maintenance ticket routed to the site foreman. That same missing guardrail with a worker within 10 feet triggers an immediate audio alert through on-site speakers and a push notification to the safety supervisor's phone.
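The two-tier rule above reduces to a proximity check: the same hazard is either logged or escalated depending on whether a worker on the same floor is within the 10-foot radius. This minimal sketch covers proximity only; the `Hazard` and `Worker` shapes and floor-plan coordinates in feet are hypothetical, and the trajectory refinements some platforms add are not modeled.

```python
import math
from dataclasses import dataclass
from enum import Enum

# Proximity-based tiering as described above. The 10 ft radius and the routing
# targets come from the text; the data shapes are hypothetical.


class Tier(Enum):
    DOCUMENT = "maintenance ticket to site foreman"
    INTERVENE = "on-site audio alert + push to safety supervisor"


@dataclass
class Hazard:
    kind: str
    level: int
    x_ft: float
    y_ft: float


@dataclass
class Worker:
    level: int
    x_ft: float
    y_ft: float


def classify(hazard: Hazard, workers: list[Worker], radius_ft: float = 10.0) -> Tier:
    """Escalate only when a worker on the same floor is within radius_ft of the hazard."""
    for w in workers:
        same_floor = w.level == hazard.level
        if same_floor and math.hypot(w.x_ft - hazard.x_ft, w.y_ft - hazard.y_ft) <= radius_ft:
            return Tier.INTERVENE
    return Tier.DOCUMENT


if __name__ == "__main__":
    edge = Hazard("missing guardrail", level=4, x_ft=0, y_ft=0)
    print(classify(edge, []).name)                                 # DOCUMENT
    print(classify(edge, [Worker(level=4, x_ft=6, y_ft=8)]).name)  # INTERVENE (10 ft away)
```

Checking the floor level before distance keeps a worker directly below a hazard, on a different slab, from triggering a false "intervene now" alert.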

The same system that files the first event as an OSHA compliance record simultaneously runs a faster intervention protocol for the second.

Visor, a construction safety AI platform built on computer vision, structures its alert routing this way — distinguishing between "document for remediation" and "intervene now" based on proximity and trajectory data, not just object presence. Sites using this tiered model have reported significant reductions in the lag between hazard identification and corrective action compared to traditional safety walkthroughs.

The measure of a safety AI system isn't how many hazards it logs — it's how many it stops before they become incidents.

Automated Intervention Without the Supervisor Bottleneck

Audio and visual intervention reduces reliance on supervisor availability. On large sites with hundreds of workers across multiple elevations and zones, a single safety officer can't physically reach every risk event in time. An automated PA announcement — "Guardrail breach detected on Level 4, Grid C — immediate inspection required" — mobilizes the nearest qualified person without the safety officer becoming the bottleneck.
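The announcement-and-dispatch step can be sketched as two small functions: one formats the PA message from the hazard location, the other picks the closest qualified responder so the safety officer is not the only path to action. This is a hypothetical illustration; the `qualified` flag is an assumed attribute, and "closest" is crudely approximated by floor distance rather than real site positions.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical routing for an automated PA announcement: pick the nearest
# qualified responder instead of waiting on one safety officer. "Nearest" is
# approximated by floor distance; a real system would use site positions.


@dataclass
class Responder:
    name: str
    qualified: bool  # assumed flag, e.g. trained to restore fall protection
    level: int


def pick_responder(responders: list[Responder], level: int) -> Optional[Responder]:
    candidates = [r for r in responders if r.qualified]
    if not candidates:
        return None  # fall back to the safety officer (not modeled)
    return min(candidates, key=lambda r: abs(r.level - level))


def pa_message(hazard: str, level: int, grid: str) -> str:
    return f"{hazard} detected on Level {level}, Grid {grid}. Immediate inspection required."


if __name__ == "__main__":
    crew = [Responder("Ana", False, 4), Responder("Ben", True, 6), Responder("Cal", True, 3)]
    print(pa_message("Guardrail breach", 4, "C"))
    print(pick_responder(crew, 4).name)  # Cal: qualified and one floor away
```

Note that the unqualified worker on the same floor is skipped: the goal is to reach someone who can actually restore the guardrail, not merely the nearest person.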

Every alert, worker response, and remediation action also becomes feedback for improving the model. A system deployed for six months on a steel-frame project learns what that specific scaffold deck looks like, and flags anomalies faster than one relying on generic training data alone.
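One plausible shape for that feedback loop: reviewers mark each alert as a real hazard or a false alarm, the verdicts yield a per-camera precision estimate, and false alarms are queued as site-specific hard negatives for the next fine-tuning round. This is a hypothetical sketch; the `AlertOutcome` fields and camera names are illustrative assumptions, not any platform's schema.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical feedback loop: reviewer verdicts on past alerts yield a per-camera
# precision estimate and a queue of false alarms to relabel as hard negatives
# for the next fine-tuning round. Field names are illustrative assumptions.


@dataclass
class AlertOutcome:
    camera_id: str
    confirmed: bool   # True = real hazard, False = false alarm
    frame_ref: str    # pointer to the stored frame


def camera_precision(outcomes: list[AlertOutcome]) -> dict[str, float]:
    tallies: dict[str, list[int]] = defaultdict(lambda: [0, 0])  # [confirmed, total]
    for o in outcomes:
        tallies[o.camera_id][1] += 1
        if o.confirmed:
            tallies[o.camera_id][0] += 1
    return {cam: hits / total for cam, (hits, total) in tallies.items()}


def retraining_queue(outcomes: list[AlertOutcome]) -> list[str]:
    """False alarms are the site-specific examples a generic model got wrong."""
    return [o.frame_ref for o in outcomes if not o.confirmed]


if __name__ == "__main__":
    log = [
        AlertOutcome("deck-east", True, "f001"),
        AlertOutcome("deck-east", False, "f002"),
        AlertOutcome("stair-core", True, "f003"),
    ]
    print(camera_precision(log))   # {'deck-east': 0.5, 'stair-core': 1.0}
    print(retraining_queue(log))   # ['f002']
```

This only captures false alarms. Hazards the model missed never generate an alert, so they have to be found separately (for example, during walkthroughs) and added to the training set by hand.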

What Training Data Actually Means for Construction Safety AI

The gap between a computer vision model that works in a lab and one that performs reliably on a live construction site comes down almost entirely to training data quality and domain specificity.

General-purpose object detection models fail on construction sites in predictable ways. Hard hats look different under scaffolding shadows than in open daylight. A handrail on a residential balcony and a temporary guardrail on a commercial floor slab are visually dissimilar. A floor opening covered with oriented strand board is nearly indistinguishable from the surrounding subfloor — unless the model has seen hundreds of examples of both.

Construction-specific training datasets address this by curating images and video clips from actual job sites across climate conditions, lighting environments, and construction phases. This domain specificity is what separates AI safety cameras for construction that perform reliably in the field from those that only work under ideal conditions.
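A basic way to check that domain specificity is to audit a dataset for underrepresented combinations of conditions, for example shadowed decking versus daylight framing. The sketch below counts labeled examples per combination of condition values and lists combinations that fall below a minimum. The axis names, values, and threshold are hypothetical; climate or hazard type could be added as further axes the same way.

```python
from collections import Counter
from itertools import product

# Hypothetical dataset audit: count labeled examples per combination of
# conditions and flag combinations below a minimum. Axis names, values, and
# the threshold are assumptions for illustration.


def coverage_gaps(samples: list[dict[str, str]], axes: dict[str, list[str]],
                  min_count: int) -> list[tuple[str, ...]]:
    keys = list(axes)
    counts = Counter(tuple(s[k] for k in keys) for s in samples)
    # Counter returns 0 for combinations that never appear
    return [combo for combo in product(*axes.values()) if counts[combo] < min_count]


if __name__ == "__main__":
    axes = {"lighting": ["daylight", "shadow"], "phase": ["framing", "decking"]}
    samples = (
        [{"lighting": "daylight", "phase": "framing"}] * 3
        + [{"lighting": "shadow", "phase": "framing"}]
        + [{"lighting": "daylight", "phase": "decking"}] * 2
    )
    print(coverage_gaps(samples, axes, min_count=2))
    # [('shadow', 'framing'), ('shadow', 'decking')]
```

Auditing combinations rather than each axis alone matters: a dataset can have plenty of shadowed images and plenty of decking images while having almost none of shadowed decking, which is exactly where a covered floor opening is hardest to spot.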

