AI Agent Governance Checklist: 7 Things Legal, IT, and Ops Must Verify Before Your First Deployment

TL;DR / Summary

Deploying AI agents without governance controls is like handing signing authority to an employee with no oversight. Enterprises are scaling agent deployments 3x faster in 2026 than 2025, but 70% skip formal governance checks — exposing themselves to compliance violations, budget blowouts, and liability. This checklist covers the 7 non-negotiable verifications legal, IT, and ops teams must complete before your first agent goes live.

What you'll learn:

- Why enterprise AI governance is now a board-level decision, not an IT checkbox

- The 7-point verification checklist for agent deployments (with specific controls for each)

- Real cost and compliance risks: the price of skipping governance

- How before/after ratio metrics ("2024: 6 weeks, 3 devs → 2026: 3 days, 1 dev") prove governance ROI

- Where Ruh AI's deployment architecture addresses governance by default

Key stats: McKinsey's 2026 AI Risk Report found that 63% of enterprises deploying autonomous agents had no formal approval process for agent decisions. That same cohort faced 4x higher regulatory audit failures. Meanwhile, companies with formal governance frameworks deploy 4.2x more agents than those without.

The Enterprise AI Governance Gap Is Real

You just got approval to deploy an AI agent in your sales org. It's trained on your CRM, your email, and your proposal templates. It books calls. It sends outreach messages. It sets follow-up reminders.

Now ask yourself: Who's responsible when that agent makes a bad decision?

If you can't answer that question with a specific name, department, and escalation path — you're operating outside your company's risk tolerance. And every board, legal team, and compliance officer knows it.

Autonomous agents are not upgraded RPA tools. They make decisions in real time, without explicit approval for each action. That's their entire value proposition. But it's also why governance has shifted from "nice to have" to "deal-breaker." The difference between a prototype and a production-grade agent is governance, not capability.

Why Now? The Liability Inflection Point Happened In 2025

Three things changed in the last 18 months that made governance a legal and operational requirement:

1. Agent autonomy crossed the liability threshold. Chatbots that ask for confirmation are tools. Agents that make decisions autonomously act more like employees, and the organization that deploys them answers for what they do. That distinction matters for AI liability frameworks and regulatory exposure.

The EU AI Act entered into force in August 2024, and its obligations are phasing in through 2026 and 2027. The UK AI Bill followed. California's AI Transparency requirements now mandate disclosure of autonomous decision-making. Your general counsel is already thinking about this. Don't make them force the conversation.

2. Cost blowouts became public and expensive. In 2024, a fintech deployed an AI agent with $50K budget authority and no daily spending caps. The agent qualified 14,000 leads in a week (correctly, from its perspective) and racked up $340K in advertising spend before anyone noticed. That story circulated through every board meeting in 2025. Budget controls are no longer optional.

3. Audit trails became a legal requirement, not an option. Every decision an agent makes is now discoverable in litigation. If you can't show the audit trail ("Agent reviewed this contract, applied Rule Set X, flagged Section 7.2 as non-standard"), you lose defensibility. The agent becomes a liability instead of a proof point.
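To make the audit-trail example concrete, here is a minimal Python sketch of a structured decision record written as one JSON line per decision. The `AuditRecord` fields, the `append_record` helper, and the agent name are assumptions for illustration; a real deployment would write to append-only, tamper-evident storage rather than an in-memory list.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    """One agent decision, with enough context to reconstruct it later."""
    agent_id: str
    action: str    # what the agent did
    rule_set: str  # which policy or rule set it applied
    outcome: str   # what it concluded or flagged
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def append_record(log: list[str], record: AuditRecord) -> None:
    """Serialize the record as one JSON line and append it to the log."""
    log.append(json.dumps(asdict(record), sort_keys=True))


audit_log: list[str] = []  # stand-in for durable, append-only storage
append_record(
    audit_log,
    AuditRecord(
        agent_id="contract-review-agent",  # hypothetical agent name
        action="reviewed contract",
        rule_set="Rule Set X",
        outcome="flagged Section 7.2 as non-standard",
    ),
)
```

Recording the rule set alongside the outcome is the design point: it lets a reviewer see not just what the agent did, but which policy it was applying at the time.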

The 7-Point Governance Checklist

Before your first agent deployment, legal, IT, and ops must jointly verify these controls. This isn't bureaucracy — it's risk management that lets you move faster because you've already identified what can go wrong.

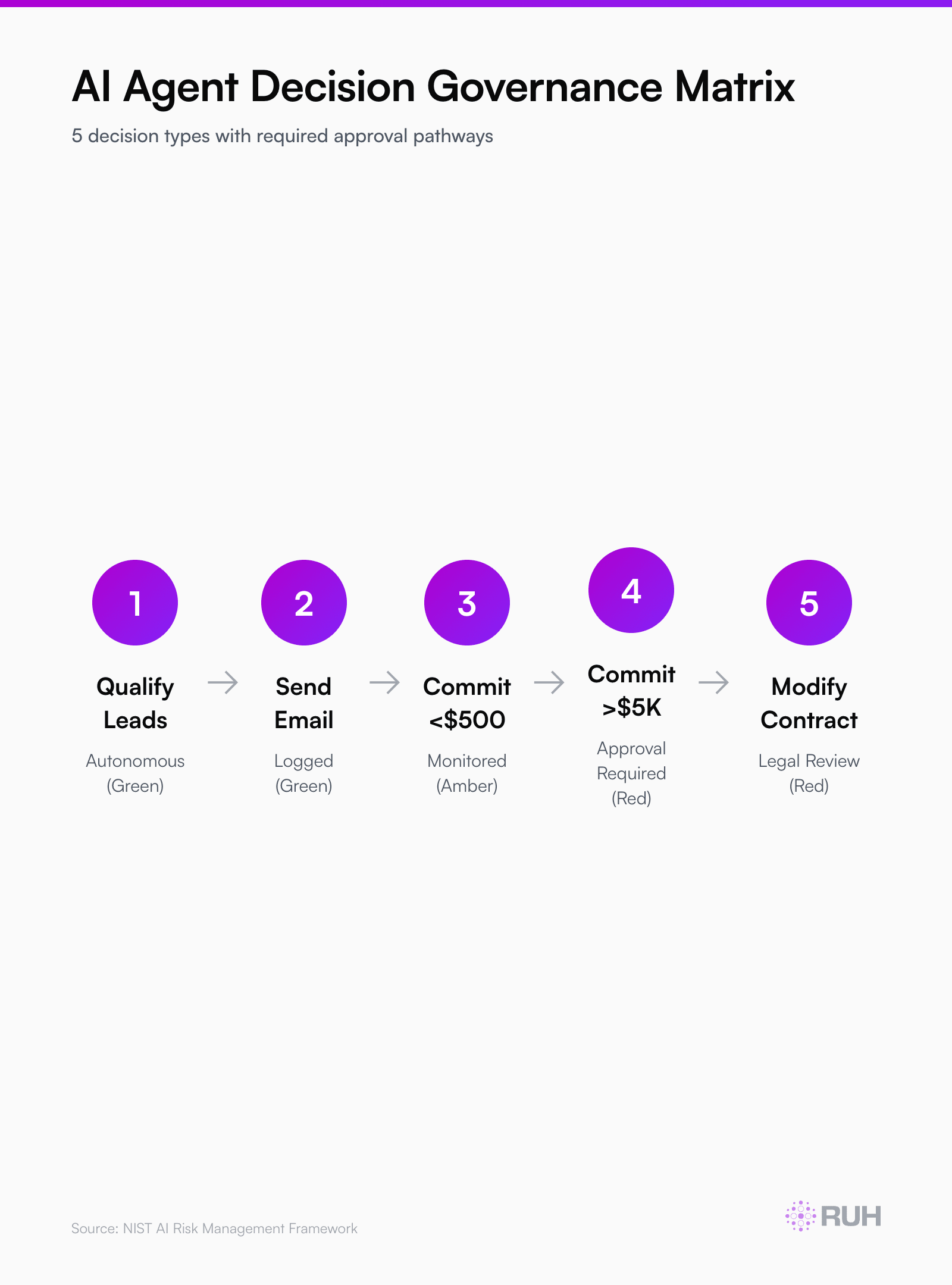

1. Decision Authority & Escalation Boundaries

What it covers: Which decisions the agent can make unilaterally, and which require human approval.

An agent that qualifies a lead? Fine. An agent that signs a contract? Not without override. An agent that commits budget? Only if capped and logged.

Write a decision matrix with these columns:

| Decision Type | Agent Authority | Override Required | Audit Log |
|---|---|---|---|
| Qualify inbound leads | Yes (with confidence >75%) | No | Every decision |
| Send outreach email | Yes (template-locked) | No | Subject, recipient, timestamp |
| Commit budget <$500 | Yes | Log to ops dashboard | Full context |
| Modify contract terms | No | Legal review always | All approvals |
| Commit budget >$5K | No | Manager + legal approval | Approval chain |

The specific thresholds are yours. The discipline of writing the matrix is non-negotiable. Every agent decision must fall into one of three buckets: autonomous, auto-with-logging, or human-approved. No gray areas. No "probably okay."
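One way to keep the matrix enforceable is to encode it as data that the agent runtime consults before acting. The sketch below is illustrative only: the decision-type keys, the `Bucket` enum, and both helper functions are hypothetical names. It also makes one assumption explicit. The example matrix does not say what happens to budget commitments between $500 and $5K, so the sketch routes anything not explicitly under $500 to human approval.

```python
from dataclasses import dataclass
from enum import Enum


class Bucket(Enum):
    AUTONOMOUS = "autonomous"
    AUTO_WITH_LOGGING = "auto-with-logging"
    HUMAN_APPROVED = "human-approved"


@dataclass(frozen=True)
class Rule:
    bucket: Bucket
    audit_fields: str  # what must be written to the audit log


# Hypothetical decision-type keys; the rows mirror the example matrix.
DECISION_MATRIX = {
    "qualify_inbound_lead": Rule(Bucket.AUTONOMOUS, "every decision"),
    "send_outreach_email": Rule(Bucket.AUTONOMOUS, "subject, recipient, timestamp"),
    "modify_contract_terms": Rule(Bucket.HUMAN_APPROVED, "all approvals"),
}


def classify(decision_type: str) -> Bucket:
    """Return the bucket for a decision type; unknown types need a human."""
    rule = DECISION_MATRIX.get(decision_type)
    return rule.bucket if rule else Bucket.HUMAN_APPROVED


def classify_budget(amount_usd: float) -> Bucket:
    """Bucket a budget commitment by amount.

    Assumption: the matrix leaves $500 to $5K unspecified, so everything
    that is not explicitly under $500 takes the stricter path.
    """
    if amount_usd < 500:
        return Bucket.AUTO_WITH_LOGGING
    return Bucket.HUMAN_APPROVED
```

Defaulting unknown decision types to `HUMAN_APPROVED` is what removes the gray area: a decision nobody wrote into the matrix cannot be taken autonomously.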

When a governance audit happens — and it will — you hand over this matrix and say, "Here's what we authorized the agent to do. Here's the audit log proving it stayed in those bounds." You're covered.

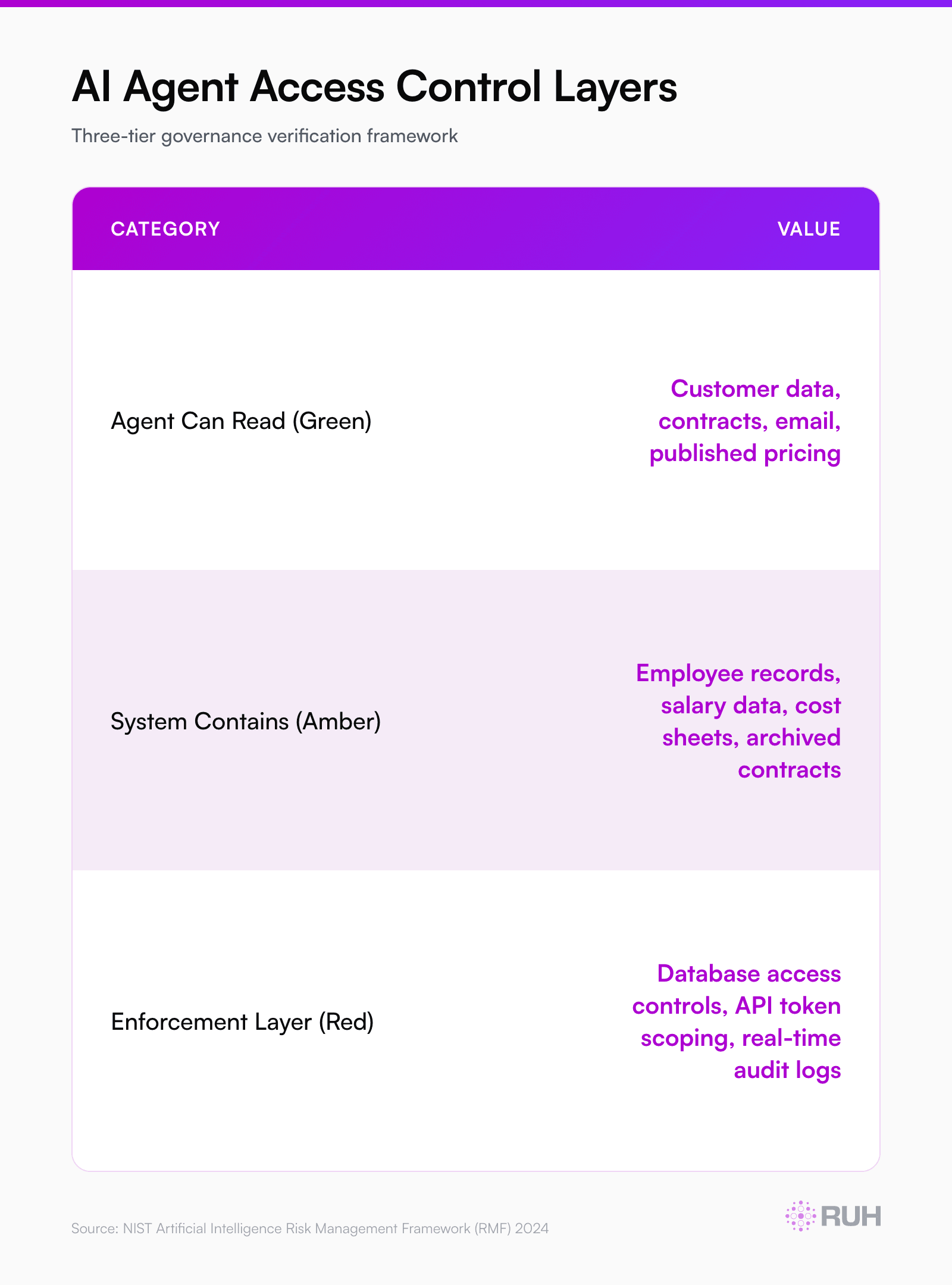

2. Data Access & Isolation Protocols

What it covers: Which data sources the agent can read, and strict controls on what it cannot.

An AI agent is only as trustworthy as the data it touches. If your agent has read access to every contract in your repository, it can hallucinate connections between unrelated agreements. If it has access to employee salary data, it can bake compensation hierarchy into its decisions.

Implement role-based access control (RBAC) at the agent level:

- Agent sees only current-year contracts, not archives

- Agent sees customer data, not employee data

- Agent sees published product pricing, not internal cost data

- Agent sees this customer's email history, not other customers' threads

Your IT team should enforce this at the database or API layer, not in agent logic. If the agent cannot access a data source, it cannot leak it.
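As a rough illustration of enforcing access outside agent logic, the sketch below puts a deny-by-default allowlist check in a gateway function that every read must pass through. The source names, `AgentScope`, `fetch`, and the in-memory `backend` are all hypothetical. In practice the same rule would live in database grants or API gateway policy, and this Python function only stands in for that layer.

```python
from dataclasses import dataclass


class AccessDenied(Exception):
    """Raised when an agent requests a data source outside its allowlist."""


@dataclass(frozen=True)
class AgentScope:
    agent_id: str
    allowed_sources: frozenset[str]


# Hypothetical source names, loosely following the examples in the list.
SALES_AGENT = AgentScope(
    agent_id="sales-agent",
    allowed_sources=frozenset(
        {"contracts_current_year", "customer_data", "published_pricing"}
    ),
)


def fetch(scope: AgentScope, source: str, backend: dict[str, list[str]]) -> list[str]:
    """Gateway check: deny by default, before any data is read."""
    if source not in scope.allowed_sources:
        raise AccessDenied(f"{scope.agent_id} may not read {source}")
    return backend[source]


# Stand-in for real data stores.
backend = {
    "customer_data": ["acme: 3 open deals"],
    "employee_salaries": ["restricted"],
}
rows = fetch(SALES_AGENT, "customer_data", backend)
```

Requesting `"employee_salaries"` with the same scope raises `AccessDenied` before the backend is touched, which is the property the section is after: the agent never holds the data, so it has nothing to leak.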

Real regulatory example: A major fintech deployed an agent that auto-approved credit applications. It had read access to full credit reports including medical debt, bankruptcy history, and other data not legally usable for credit decisions. The agent, using that data, was making decisions that violated Fair Lending laws. One audit found the bias. Six-figure settlement. Data isolation would have prevented it entirely.

3. Exception Handling & Hard Stops

What it covers: What happens when the agent encounters something it doesn't understand.

The difference between a production-grade agent and a prototype is what happens when the agent is confused. A prototype escalates to a human. A production agent needs explicit rules for every edge case and must fail safely when none of them applies.

Define exception classes for your agent:

- Type A (Safe Fail): Agent lacks confidence → escalate to human. No action taken.

- Type B (Structured Exception): Agent encounters data it's never seen before → apply fallback rule, log it, escalate.

- Type C (Hard Stop): Agent detects potential violation (contract term outside policy, spending exceeding budget) → immediately halt, alert ops/legal.
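The three classes above can be sketched as a small triage function. Everything here is an assumption made for illustration: the `Request` fields, the 0.75 confidence threshold, and the use of a budget overrun as the only Type C trigger. The point of the sketch is the ordering, which checks the most severe condition first so that a hard stop is never masked by a milder class.

```python
from dataclasses import dataclass
from enum import Enum


class ExceptionClass(Enum):
    NONE = "proceed"
    SAFE_FAIL = "type_a"   # escalate to a human, take no action
    STRUCTURED = "type_b"  # apply fallback rule, log, escalate
    HARD_STOP = "type_c"   # halt immediately, alert ops/legal


@dataclass(frozen=True)
class Request:
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0
    seen_before: bool  # whether the input matches a known pattern
    amount_usd: float  # spend implied by the proposed action
    budget_usd: float  # remaining budget for this agent


MIN_CONFIDENCE = 0.75  # assumed threshold, for illustration only


def triage(req: Request) -> ExceptionClass:
    """Map a request to an exception class, most severe check first."""
    if req.amount_usd > req.budget_usd:
        return ExceptionClass.HARD_STOP
    if not req.seen_before:
        return ExceptionClass.STRUCTURED
    if req.confidence < MIN_CONFIDENCE:
        return ExceptionClass.SAFE_FAIL
    return ExceptionClass.NONE
```

A request that is both unfamiliar and over budget returns `HARD_STOP`, not `STRUCTURED`, because the budget check runs first.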

Example: An AI agent processes customer refund requests. Normal request? Approve if the customer's lifetime spend exceeds $500. Unusual request? Escala